Philippe Laban

@PhilippeLaban

Research Scientist @MSFTResearch. NLP/HCI Research.

⚛️ Introducing CREATE, a benchmark for creative associative reasoning in LLMs. Making novel, meaningful connections is key for scientific & creative works. We objectively measure how well LLMs can do this. 🧵👇

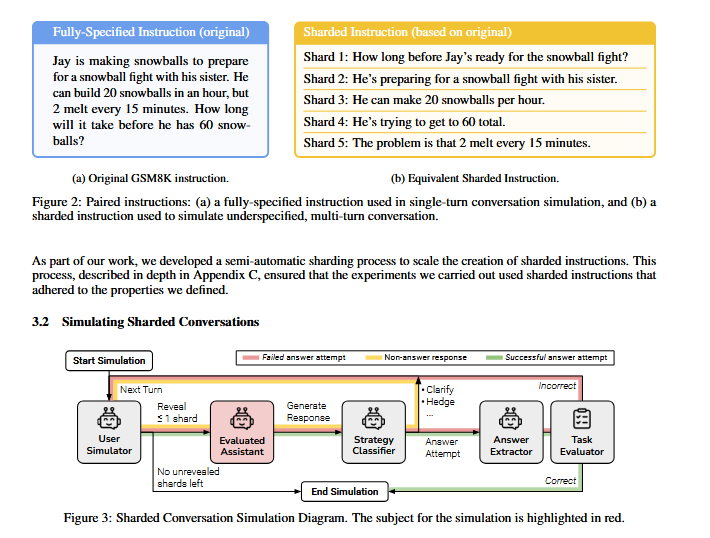

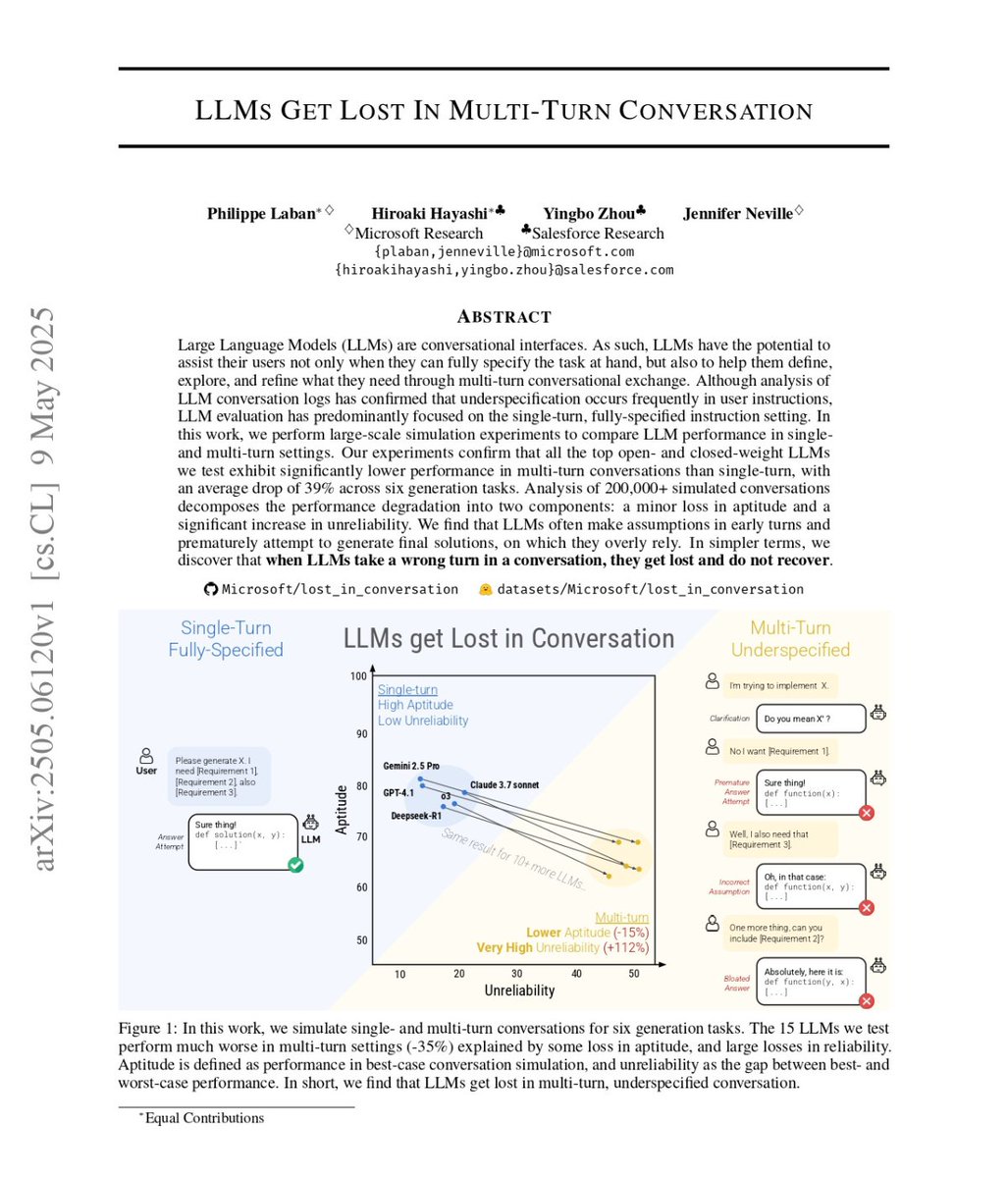

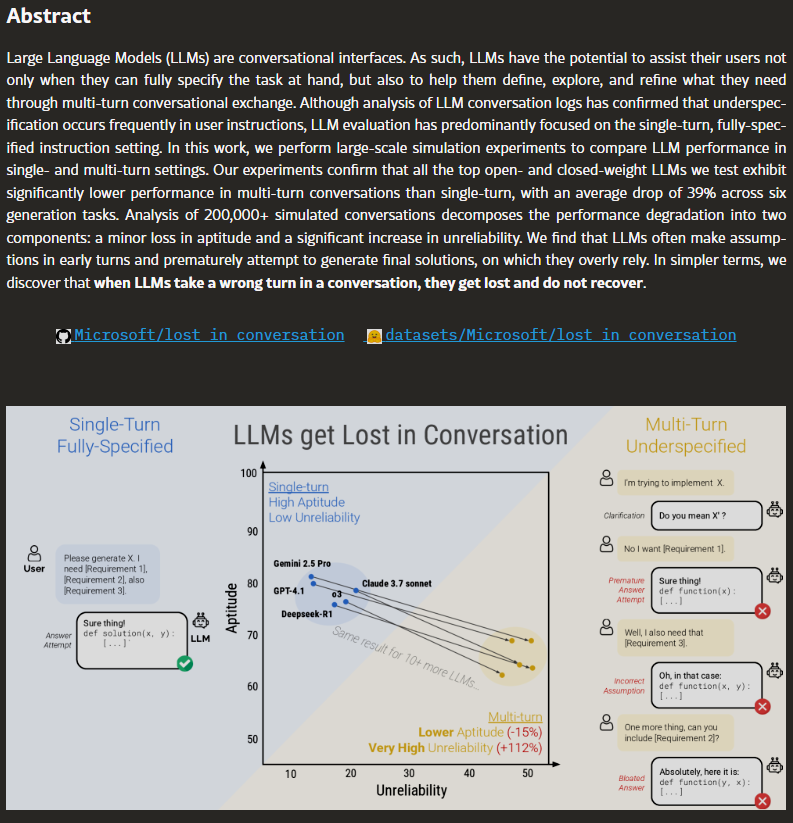

🆕 Paper: "LLMs Get Lost in Multi-Turn Conversation". In real life, people don’t speak in perfect prompts, so we simulate multi-turn conversations: less lab-like, more like real use. We find that LLMs get lost in conversation. 👀 What does that mean? 🧵1/N 📄 arxiv.org/abs/2505.06120

LLMs *Still* Get Lost In Multi-Turn Conversation. We re-ran experiments with newer models. Performance still drops, but with modest gains: mostly from improvements on the Python coding task. Also: Lost in Conversation will be presented at ICLR 2026 🎉🇧🇷

Microsoft Research and Salesforce analyzed 200,000+ AI conversations and found something the entire industry already suspected but nobody would say out loud. every major model gets dramatically worse the longer you talk to it. GPT-4, Claude, Gemini, Llama. all of them. no exceptions. paper: arxiv.org/abs/2505.06120

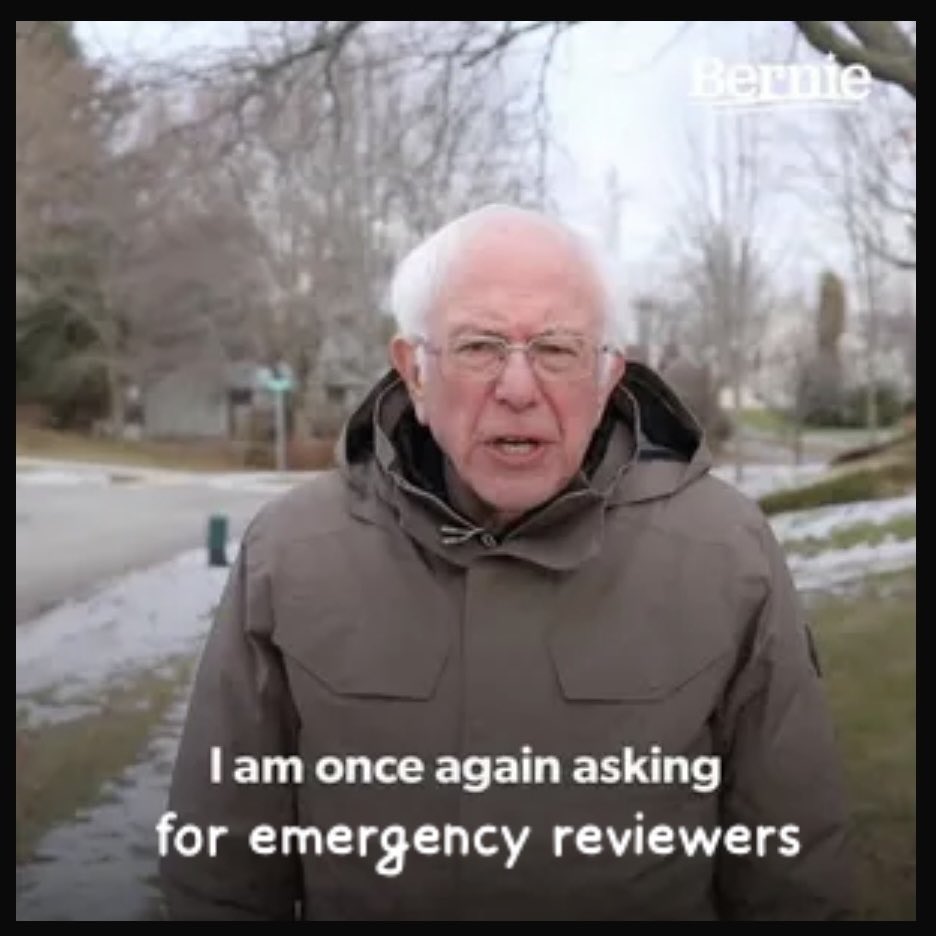

I'm looking for emergency reviewers for several papers in the general area of LLM agents. More detailed topics are in the two tweets below. If you can help, please DM me or comment with the paper number. Any help is appreciated, as reviews will be released in less than 48 hours. Thanks!