Excited to introduce Gemma 4 Multi-Token Prediction Drafters ⚡️
Accelerated inference right in your pockets
- Up to a 3x speedup
- Same quality guarantees
- Available in your favorite open-source tools

Philippe Laban

397 posts

@PhilippeLaban

Research Scientist @MSFTResearch. NLP/HCI Research.

Can LLMs generate diverse outputs for open-ended questions? Is it helpful if we ensemble outputs from multiple models? We study 18 LLMs on 4 datasets and find that no single model is best at generating diverse outputs 👇/ 🧵

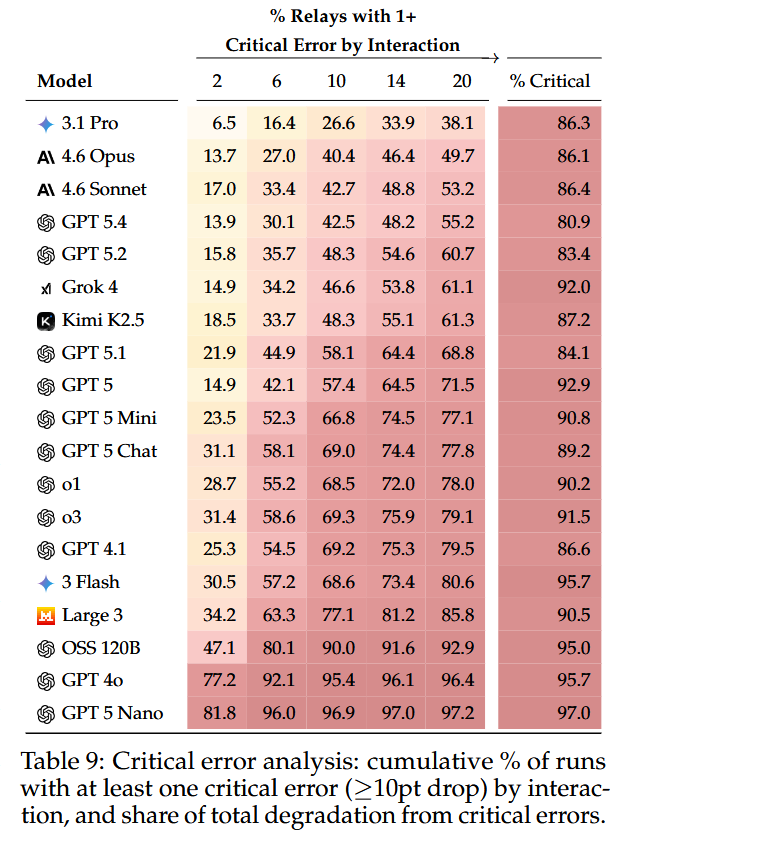

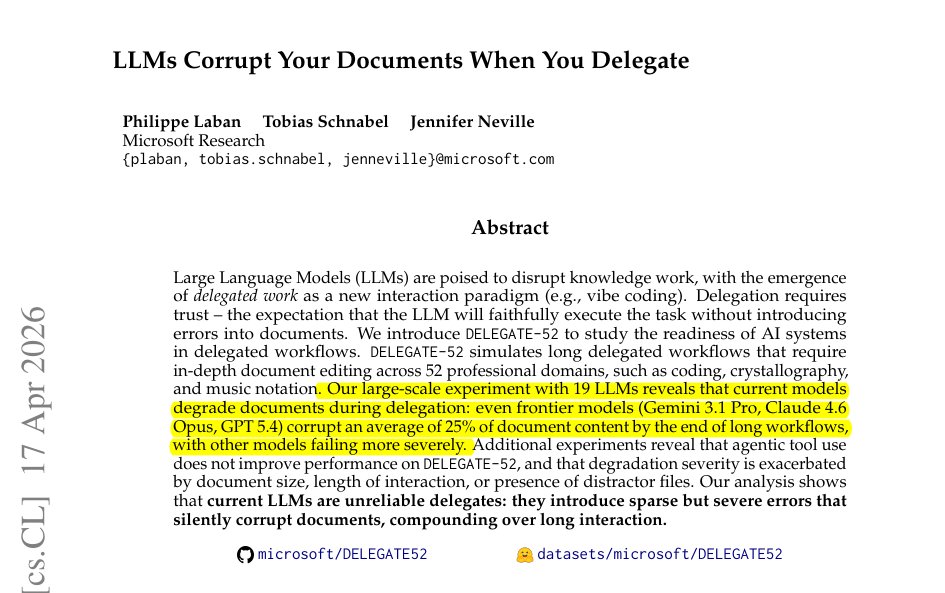

Finding #1: Every model degrades documents over time. We tested 19 LLMs. Even frontier models (Gemini 3.1 Pro, Claude 4.6 Opus, GPT-5.4) corrupt 25% of document content after 20 interactions. Average across all models: 50% content loss.

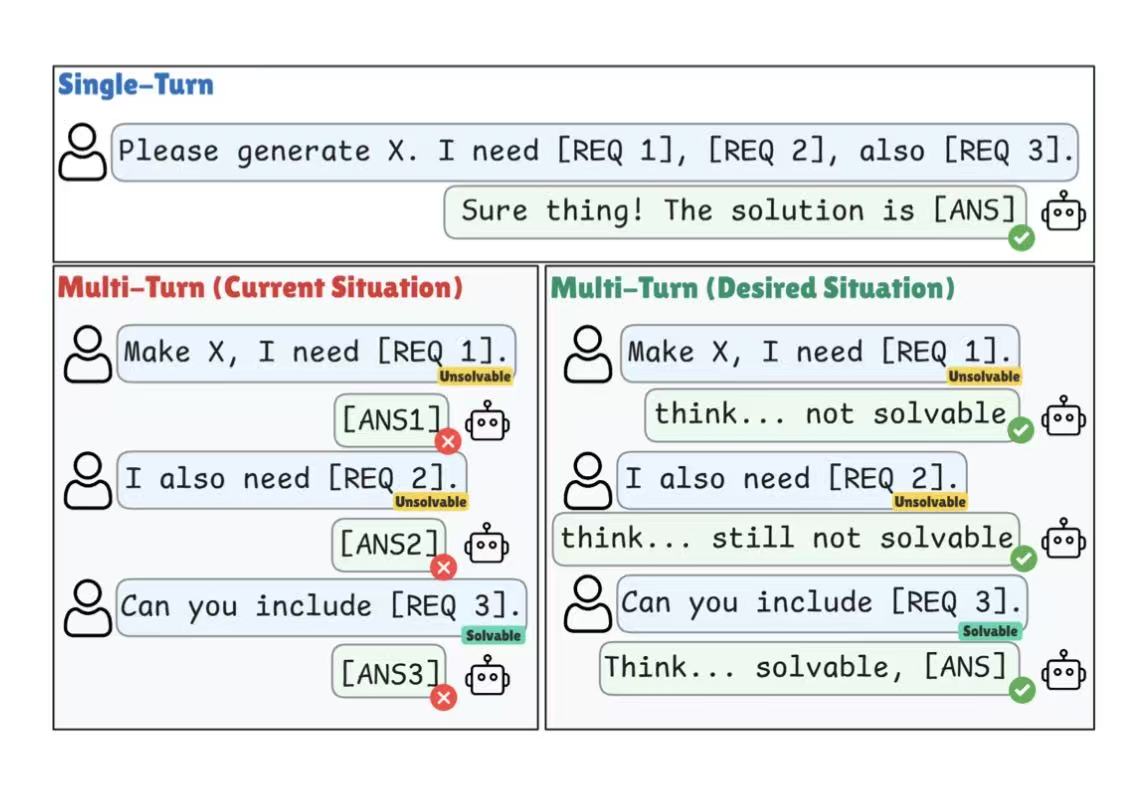

New paper! "LLMs Corrupt Your Documents When You Delegate". LLMs are enabling a new way of working: delegated work, where users supervise an LLM as it edits documents on their behalf. Delegation requires trust: does the LLM complete tasks without introducing errors? We simulate delegation across 52 professional domains and find that LLMs corrupt your documents when you delegate. 🧵1/N