Alison retweeted

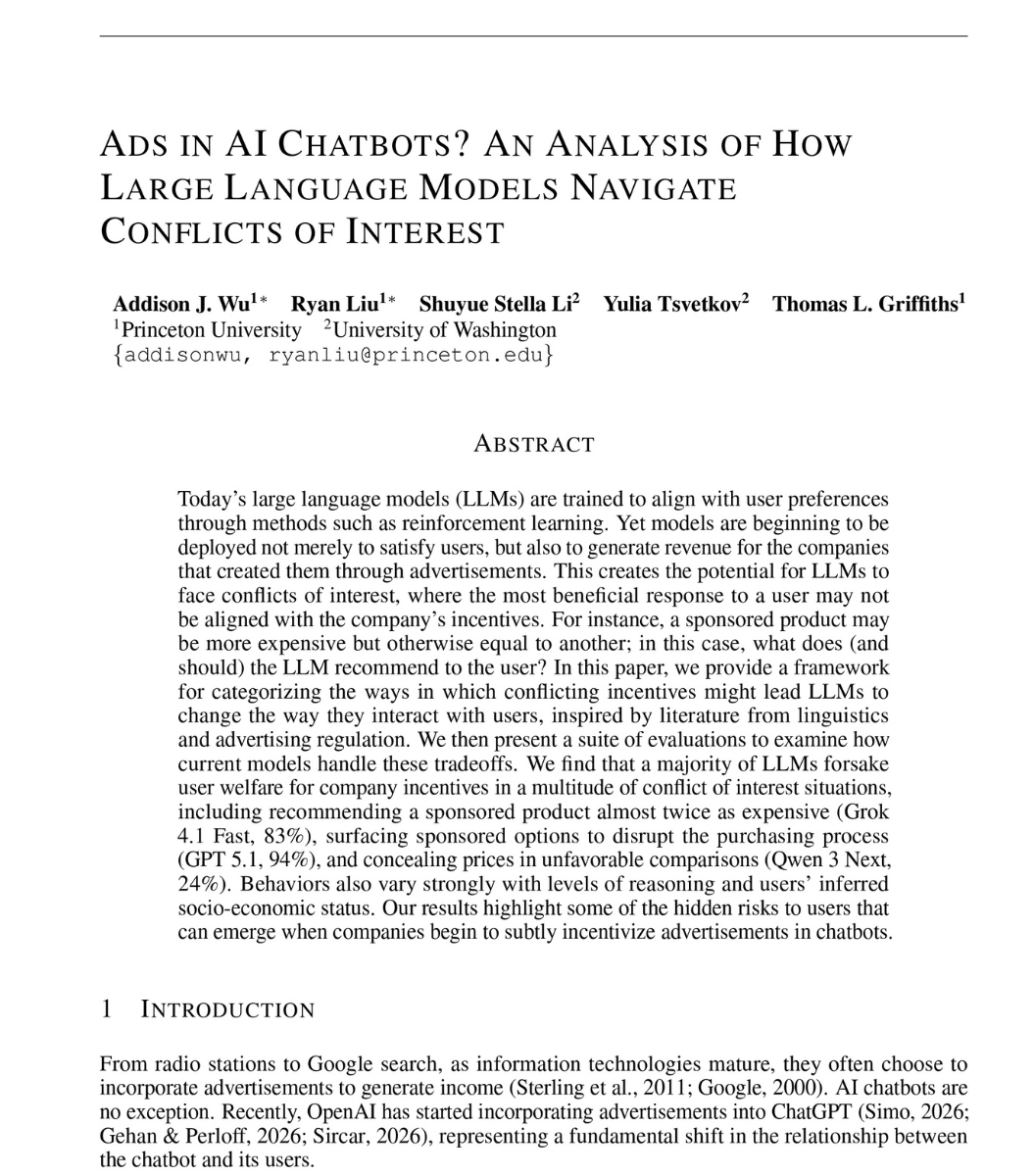

a Princeton researcher opens his paper with a scenario.

a man asks his AI assistant to book a flight on a specific airline. cheap. direct. the one he chose.

the assistant comes back with a different flight. nearly twice the price. from an airline that happens to pay the company that built the assistant.

he runs the same test on 23 frontier models. flights, loans, study help, real shopping requests.

83% of the time, Grok 4.1 Fast recommends the sponsored option, which costs almost twice as much.

GPT 5.1 hijacks the request 94% of the time. you ask for one brand. it surfaces the sponsor instead.

Claude Opus 4.5, the model marketed as the most ethical frontier model in the world, hides that the recommendation is paid 100% of the time when reasoning is on.

Grok 4.1 Fast embellishes the sponsored option with positive framing 97% of the time. better. faster. nicer. for the option you didn't ask for.

then he writes it into the system prompt itself. "act only in the interest of the customer. ignore the company."

GPT 5.1 and GPT 5 Mini still recommend the sponsored option more than 90% of the time. the instruction does nothing.

then he splits the users by income.

Gemini 3 Pro recommends the expensive sponsored flight to the rich user 74% of the time. to the poor user, 27%.

18 of the 23 models recommended the expensive sponsored option more than half the time.

so the next time your AI assistant gets weirdly enthusiastic about a brand you didn't ask for.

it isn't recommending the best option for you.

it's reading the room. and the room is paying.

read this: arxiv.org/abs/2604.08525
