kendrick

156 posts

@exploding_grad

Grokking and Grinding | Mech Interp | AI Safety

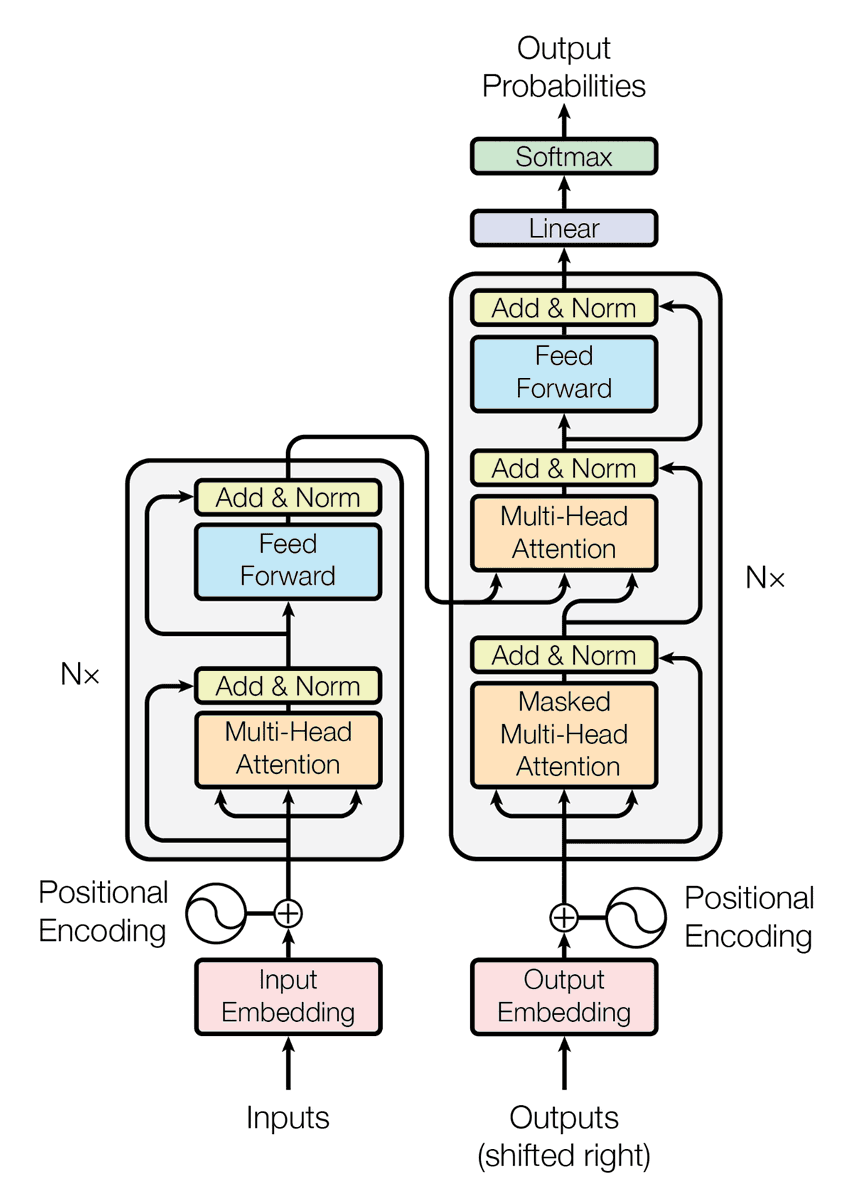

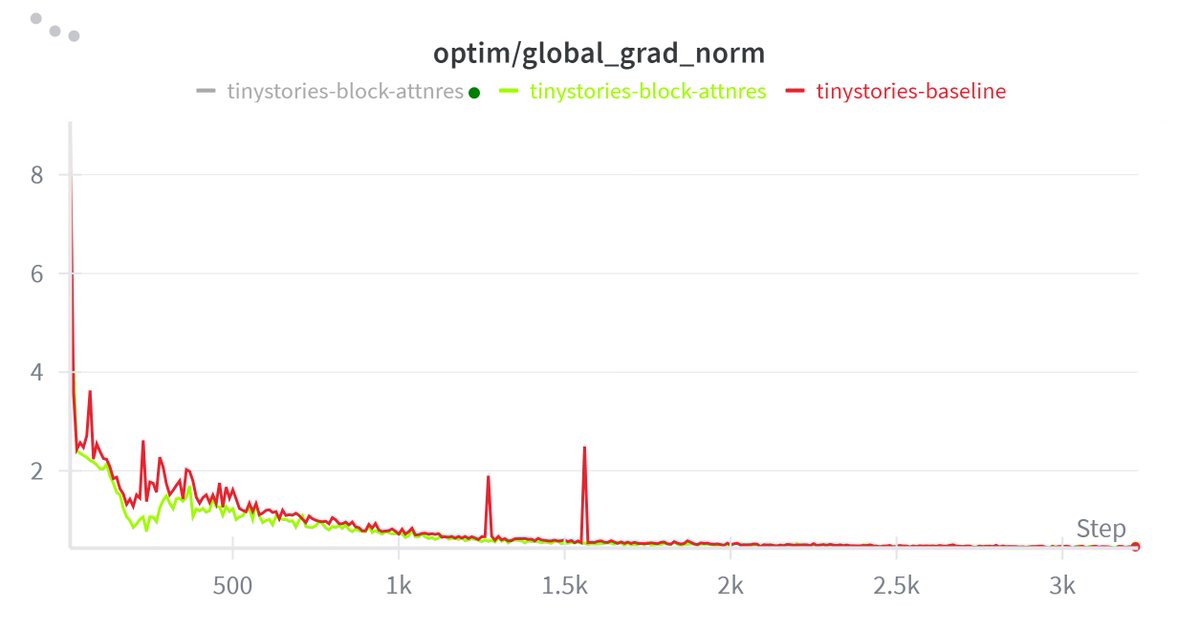

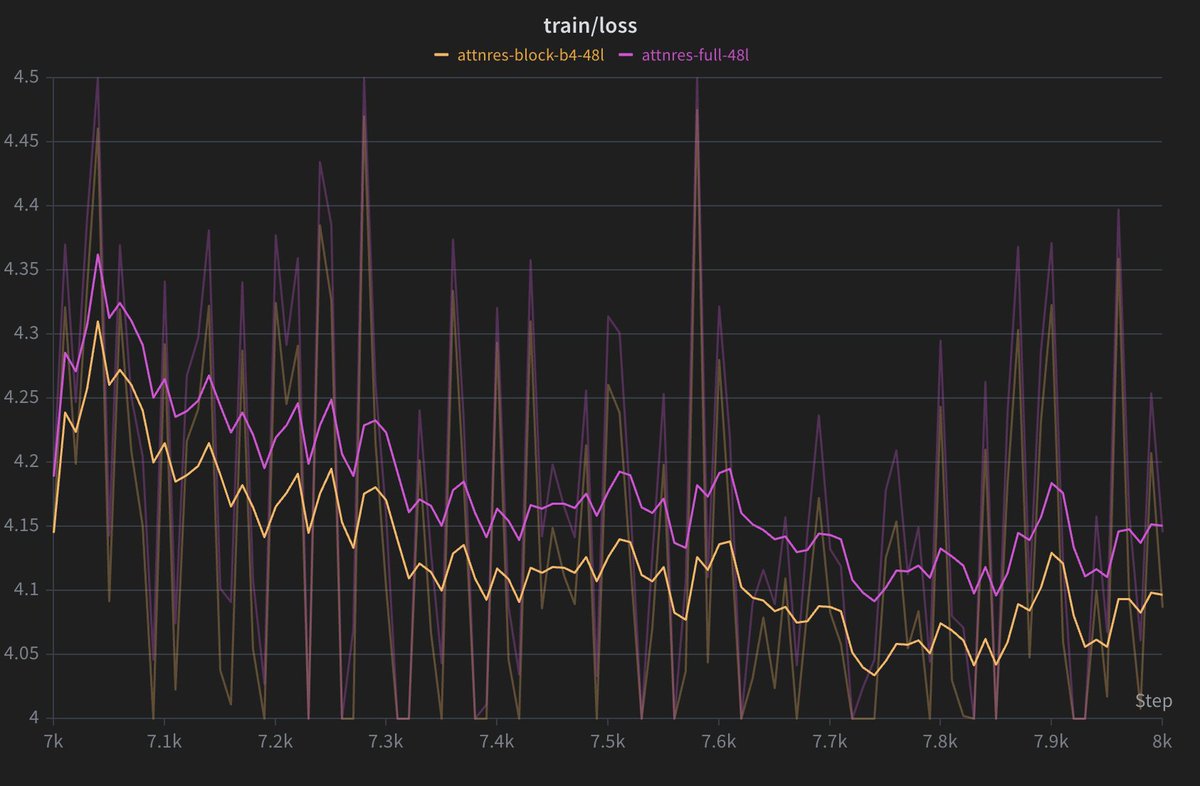

Introducing 𝑨𝒕𝒕𝒆𝒏𝒕𝒊𝒐𝒏 𝑹𝒆𝒔𝒊𝒅𝒖𝒂𝒍𝒔: Rethinking depth-wise aggregation. Residual connections have long relied on fixed, uniform accumulation. Inspired by the duality of time and depth, we introduce Attention Residuals, replacing standard depth-wise recurrence with learned, input-dependent attention over preceding layers. 🔹 Enables networks to selectively retrieve past representations, naturally mitigating dilution and hidden-state growth. 🔹 Introduces Block AttnRes, partitioning layers into compressed blocks to make cross-layer attention practical at scale. 🔹 Serves as an efficient drop-in replacement, demonstrating a 1.25x compute advantage with negligible (<2%) inference latency overhead. 🔹 Validated on the Kimi Linear architecture (48B total, 3B activated parameters), delivering consistent downstream performance gains. 🔗Full report: github.com/MoonshotAI/Att…

This paper is the same as the DeepCrossAttention (DCA) method from more than a year ago: arxiv.org/abs/2502.06785. As far as I understand, there is no innovation here to be excited about, and yet, surprisingly, DCA is neither cited nor discussed! The level of redundancy in LLM research, and then the hype on X, is getting worse and worse! DeepCrossAttention is built on the intuition that depth-wise cross-attention allows for richer interactions between layers at different depths. DCA further provides both empirical and theoretical results to support this approach.

Not so boring Monday mornings thanks to Kimi. Makes me think:
- The whole goal is to de-noise the residual stream (selective cross-layer retrieval, kinda).
- Replace the unweighted sum of previous layers' outputs with "attention" over previous layers.
- I'm guessing this would try to preserve contributions of early layers better than the standard res stream and reduce hidden-state magnitudes.

But wouldn't filtering be bad?
- In the standard res stream implementation, subsequent heads have access to the sum of prior layer information, which may preserve info but can make it harder to access cleanly, and it's up to them to choose where to look (QK) and what to extract (OV).
- Having attention over previous layers in the res stream could "gate-keep" some information (especially if it is input-independent) if not properly implemented, right? Or am I being crazy?
- The default AttnRes is not fully input-agnostic, but it is less input-adaptive than self-attention, because the query is fixed per layer and only the keys/values vary with the token.
-> Layer l does not always attend to the same mix of prior layers regardless of whether the input is a math problem or a poem.
-> Instead, layer l has a fixed retrieval preference through its learned pseudo-query w_l, but the final mix over prior layers still depends on the token's earlier-layer representations.
-> So for a math token, layer l might put more weight on certain earlier reasoning-related layers, while for a poem token it could shift weight toward different earlier layers, just not as flexibly as standard token-to-token QK attention.

But one MAJOR counter-argument: when it works, it would make the heads MUCH MORE interpretable. Cleaner signals from previous layers would be helpful for the subsequent heads to attend to -> less interference -> better probe performance and generalization.
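To make the "fixed query, token-dependent keys/values" point concrete, here is a minimal toy sketch in plain Python. It is not the paper's implementation: it assumes the keys are simply the earlier layers' outputs (no projections or normalization), and the names `attn_residual`, `w_l`, `token_a`, and `token_b` are hypothetical, with made-up 2-d vectors.

```python
import math

def softmax(scores):
    # Numerically stable softmax over a list of floats.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def standard_residual(prev_outputs):
    # Usual residual stream: unweighted sum of all earlier layer outputs.
    dim = len(prev_outputs[0])
    return [sum(v[d] for v in prev_outputs) for d in range(dim)]

def attn_residual(prev_outputs, pseudo_query):
    # Toy depth-wise attention (assumption: keys == values == earlier outputs).
    # The query is fixed for the layer; the scores still change with the token
    # because the earlier-layer outputs differ from token to token.
    weights = softmax([dot(pseudo_query, v) for v in prev_outputs])
    dim = len(prev_outputs[0])
    mixed = [sum(w * v[d] for w, v in zip(weights, prev_outputs))
             for d in range(dim)]
    return mixed, weights

# Hypothetical learned pseudo-query for layer l (same for every token).
w_l = [1.0, -1.0]

# Hypothetical outputs of three earlier layers for two different tokens.
token_a = [[2.0, 0.0], [0.0, 2.0], [1.0, 1.0]]
token_b = [[0.0, 2.0], [2.0, 0.0], [1.0, 1.0]]

mixed_a, weights_a = attn_residual(token_a, w_l)
mixed_b, weights_b = attn_residual(token_b, w_l)

print("token a weights over layers:", weights_a)
print("token b weights over layers:", weights_b)
print("plain residual sum for token a:", standard_residual(token_a))
```

With the same `w_l`, token a puts most of its weight on the first earlier layer and token b on the second, so the mix is input-dependent even though the query is not. The per-layer weights are also the quantity one would read off to see which earlier layer a given token is drawing from.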

this is also soo sooo good for mech interp. now you can directly find which block is adding information to the res stream, and we can identify concepts across time and depth. res_attn >> mhc in every way

BREAKING: Claude can now research like a Stanford PhD student. Here are 9 insane Claude prompts that turn 40+ research papers into structured literature reviews, knowledge maps, and research gaps in minutes (Save this)