tokenbender

@tokenbender

Sparse and efficient • Deus eXperiments • 🇮🇳

New blackboard lecture w/ @ericjang11. He walks through how to build AlphaGo from scratch, but with modern AI tools.

Sometimes you understand the future better by stepping backward. AlphaGo is still the cleanest worked example of the primitives of intelligence: search, learning from experience, and self-play. You have to go back to 2017 to get insight into how the more general AIs of the future might learn.

Once he explained how AlphaGo works, it gave us the context to discuss how RL works in LLMs and how it could work better. Naive policy-gradient RL has to figure out which of the 100k+ tokens in your trajectory actually got you the right answer, while AlphaGo's MCTS suggests a strictly better action at every single move, giving you a training target that sidesteps the credit assignment problem. The way humans learn is surely closer to the second.

Eric also kickstarted an Autoresearch loop on his project. It was very interesting to discuss which parts of AI research LLMs can already automate pretty well (implementing and running experiments, optimizing hyperparameters) and which they still struggle with (choosing the right question to investigate next, escaping research dead ends). Relevant to all the recent discussion about when we should expect an intelligence explosion, and what it would look like from the inside.

Timestamps:
0:00:00 – Basics of Go
0:08:06 – Monte Carlo Tree Search
0:31:53 – What the neural network does
1:00:22 – Self-play
1:25:27 – Alternative RL approaches
1:45:36 – Why doesn't MCTS work for LLMs
2:00:58 – Off-policy training
2:11:51 – RL is even more information inefficient than you thought
2:22:05 – Automated AI researchers
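A minimal Python sketch of the credit-assignment contrast described in the post. It assumes the AlphaZero-style convention of turning root visit counts N(a) into a per-move policy target pi(a) ∝ N(a)^(1/T), and contrasts that with a naive outcome-reward policy gradient that copies one scalar advantage onto every token. All function names are hypothetical and this is not code from the lecture; it only illustrates the shape of the two training signals, not any claim about which performs better.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    # Numerically stable softmax over a single move's action logits
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    return [e / z for e in exps]


def mcts_policy_target(visit_counts: List[int], temperature: float = 1.0) -> List[float]:
    # Hypothetical helper: AlphaZero-style target pi(a) ∝ N(a)^(1/T),
    # where N(a) is how often search visited action a from the root
    scaled = [n ** (1.0 / temperature) for n in visit_counts]
    total = sum(scaled)
    return [s / total for s in scaled]


def policy_distillation_loss(logits: List[float], target: List[float]) -> float:
    # Cross-entropy between the search target and the network policy at ONE move:
    # every move in a game gets its own dense supervision signal
    probs = softmax(logits)
    return -sum(t * math.log(p) for t, p in zip(target, probs) if t > 0)


def outcome_reward_advantages(num_tokens: int, reward: float, baseline: float = 0.0) -> List[float]:
    # Hypothetical helper: naive outcome-reward policy gradient gives every token
    # the same advantage, so the signal alone cannot say which tokens mattered
    return [reward - baseline] * num_tokens


if __name__ == "__main__":
    target = mcts_policy_target([10, 30, 60])
    print("per-move MCTS target:", target)
    print("loss, uniform policy:", policy_distillation_loss([0.0, 0.0, 0.0], target))
    print("per-token advantages:", outcome_reward_advantages(5, reward=1.0, baseline=0.25))
```

The design point the sketch isolates: the search target is a full distribution at each move, while the outcome reward is a single number broadcast across the whole trajectory.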

A worker uses lubrication & body weight to force three tires into one. This saves shipping space, thereby reducing costs.

There's a lot of controversy brewing around arXiv's decision to penalize authors who post unchecked AI-generated content. The impulse is correct, IMO, simply on grounds of efficiency: it is much cheaper to insist that authors vet their work first than to distribute the cost of that vetting to EVERY reader/agent who subsequently downloads it. I believe the mechanism is likely the wrong one, however. Unfortunately, suggestions to use GitHub instead are even worse, IMO, because they lose the (effective) immutability of the scientific record, which arXiv upholds.

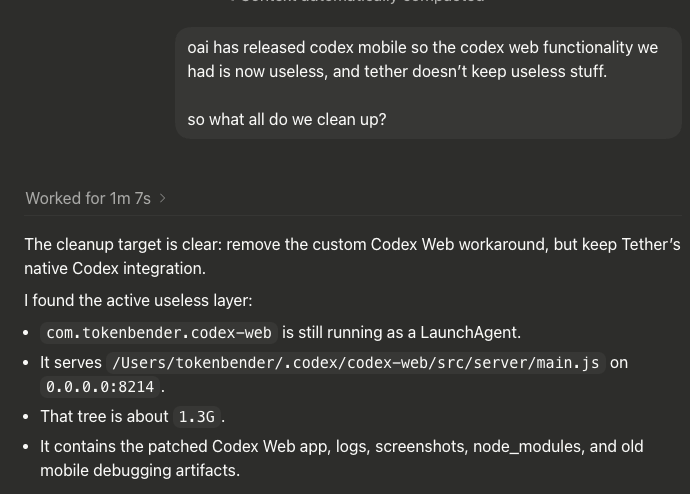

Automating AI research is the next major step in AI. We let Claude Code (Opus 4.7) and Codex (GPT 5.5) run autonomously on the nanoGPT speedrun optimizer track using our idle compute: ~10k runs, ~14k H200 hours. Opus now holds the record at 2930 steps vs. the 2990-step human baseline.

You've been asking for this one... Now in preview: Codex in the ChatGPT mobile app. Start new work, review outputs, steer execution, and approve next steps, all from the ChatGPT mobile app. Codex will keep running on your laptop, Mac mini, or devbox.

all the records are heavily based on work from previous contributors' PRs (we do explore novel ideas in a dedicated "novelty" track, but none of them ended up improving the record). So it only made sense to let the agents write a little thank-you to the community themselves: github.com/KellerJordan/m…