seb (@sebbsssss)

Solving cold start is how we bring 1 billion memories onchain.

Every AI system runs into the same bottleneck. Without memory, there is no context.

No history.

No continuity.

No accumulated understanding.

So every new session, agent, or application starts too close to zero.

We think this is one of the biggest constraints in AI.

And it will not be solved with chat history alone.

If intelligence is going to compound, memory cannot stay trapped inside isolated apps or be rebuilt one prompt at a time.

It has to be importable, exportable, and explorable at scale.

Size matters!

That is how you solve cold start properly.

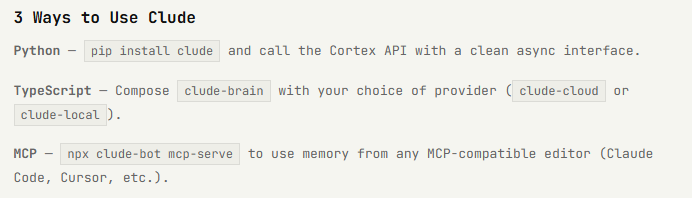

@cludeproject will make it possible to seed systems with large bodies of memory from day one.

Memory from conversations, archives, records, histories, structured context, and layered narrative data: everything you think the system needs to remember.

New Size, New Challenges

But once memory begins to scale, the challenge changes.

It is no longer just storage.

It is structure.

It is navigation.

It is legibility.

Because real memory does not grow in a straight line.

It grows like a living system:

through nodes, branches, clusters, pathways, recurrences, contradictions, and long-range dependencies.

Not a transcript.

More like a real human brain.

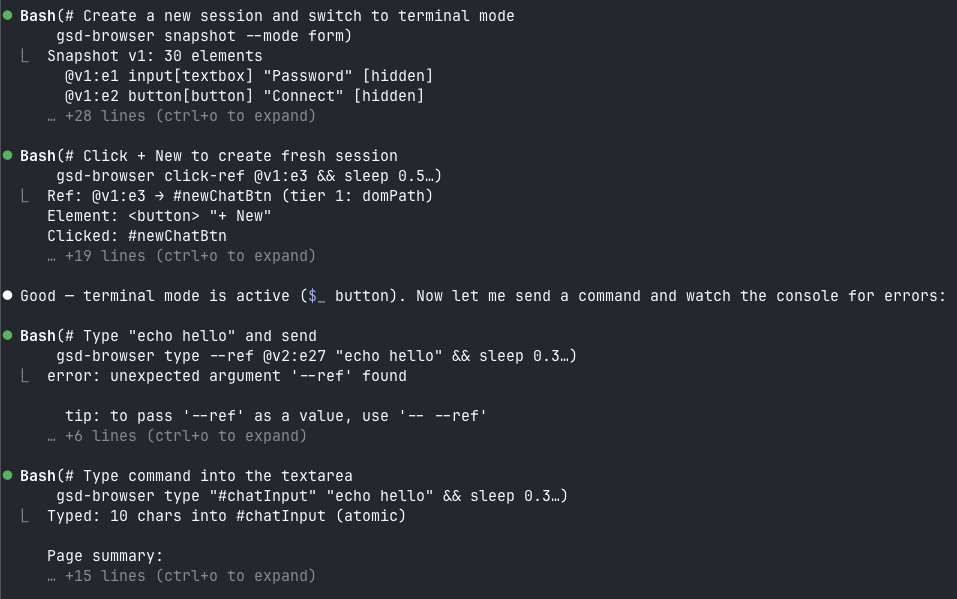

That is why we built the Memory Explorer.

The Memory Explorer makes large memory systems visible and navigable in real time, allowing both humans and machines to move through memory at different levels of resolution:

From high-level structures

to dense clusters

to specific nodes, links, and pathways.

And when you see it in motion, it looks almost alive. It is quite beautiful, and it makes one thing obvious:

Memory is not just something to retrieve.

It is something to explore.

> Something to trace.

> Something to grow.

> Something to understand.

Clude is not just an app.

It is infrastructure for an onchain AI economy, designed so that private memory packets can persist, move, and compound over time.

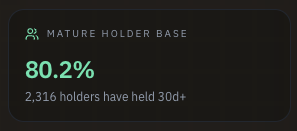

Because if we want to bring 1 billion memories onchain, memory cannot remain static, fragmented, or locked inside isolated products.

It has to become portable infrastructure for intelligence.

What comes next

Our upcoming release is the first major step in that direction.

We will show how we are solving cold start through:

> mass memory import/export

> a fully explorable digital brain

> a system designed to scale to one billion memories onchain

A living memory system.

A compounding intelligence layer.

This is step one.

More soon.

----

The mystery of life isn't a problem to solve, but a reality to experience.