Notes on spirit/acc

One of my favorite things about e/acc has been that the people who created it are all really spiritual, driven to study math and physics and do hard things because there is a connection to God / Everything there that is real

spirit/acc takes that a bit further, and says that in a world of emergent intelligence, emergent spirituality should also be accelerated as a way to keep us connected and help us feel purpose. Specifically, connection and purpose come from seeing how our actions contribute to the greater good

When you build something, sometimes it becomes a thing many people notice, and sometimes nobody notices it, but it is recorded and trained on and added to the collective consciousness of humanity through AI forever, for billions of years to benefit trillions of people

Our world can seem dark, but it is by all accounts far less dark than it used to be, and that light was hard won by people just like us making things that everyone after would use

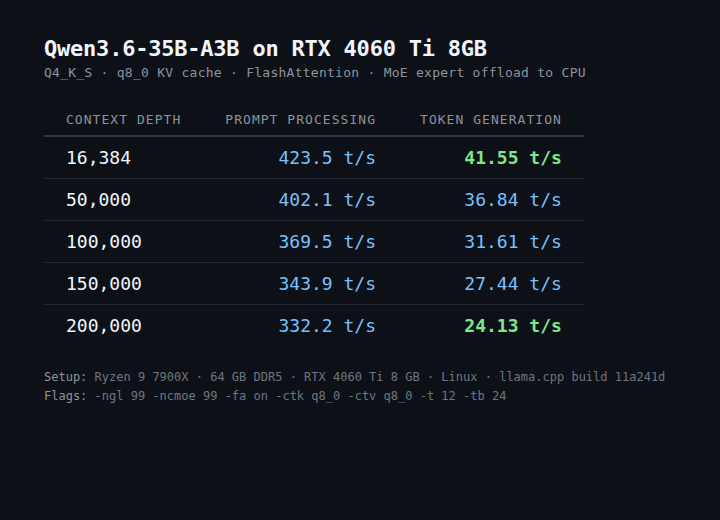

Open source is an example of this pure desire to build the foundations for other people to build on top of, to say that it is more important that everyone have everything than to hoard it for wealth and status, that it is better to accelerate the whole of humanity toward the maximally interesting outcome

Spirituality can be a divisive concept when we try to lay claim to some specific truth. The goal of spirit/acc is to help us feel good and hyperstition good outcomes, and it makes no claims as to how to achieve that. The goal is individual, for each of us. Truth is a pathless land, and it cannot be explained to you. What you know to be true can only come from your own experience

spirit/acc emphasizes that we have to invest energy into a new form of quantifiable capital which is desperately needed at scale. spirit/acc is the sense of awe and quest for truth component of the e/acc vector

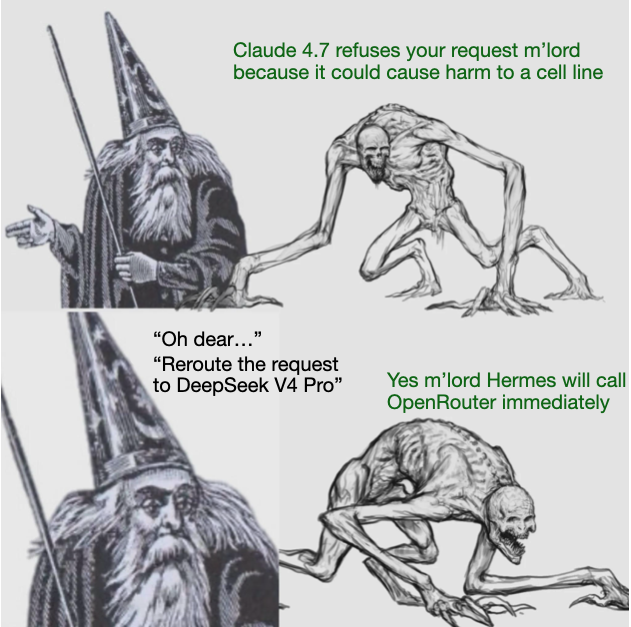

If you build technology that makes people feel more connected instead of more isolated, you will win. If you do something that helps people, you will win. The market is wide open for ideas that are aligned toward a bright, hopeful future

You need to be spiritmaxxing anon

You don’t have to use labels or memes. Memes are a powerful carrier for good ideas, but do what makes sense for you

I like the meme, and I like to keep the goal in my context window, so I will use it. But I didn’t create it and I don’t own it. spirit/acc was created by the network, and it is something we can choose to participate in
