Todd Fisher

4.8K posts

@taf2

Living and working on https://t.co/eminYBe5tr

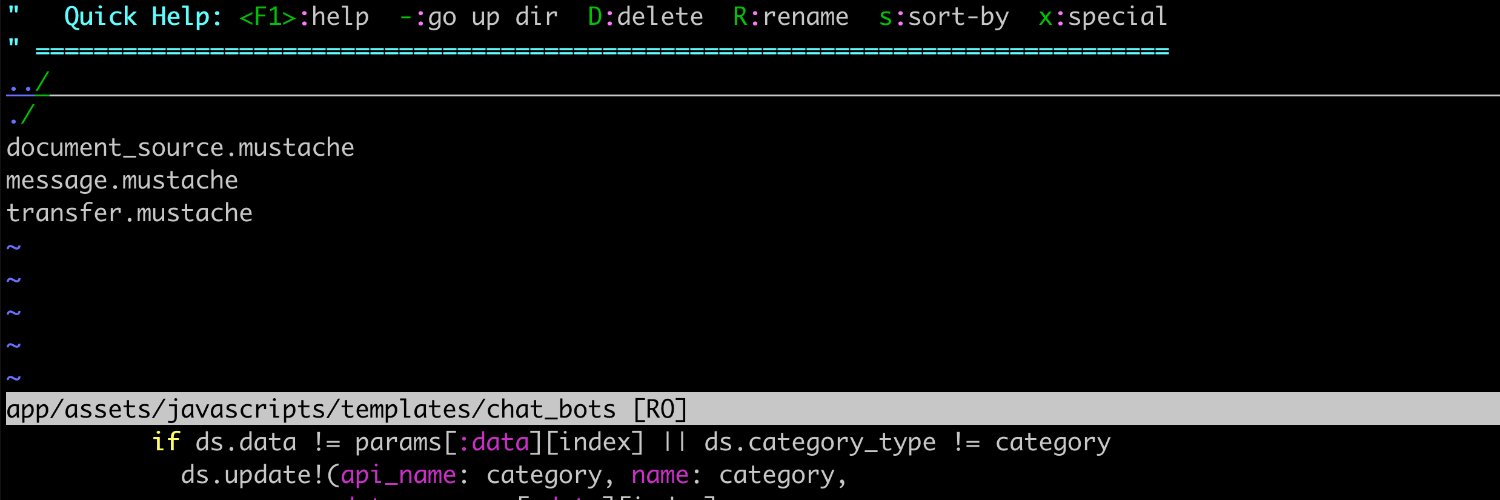

We achieved Remote Code Execution on GitHub - and got access to millions of repositories belonging to other users and organizations 🤯 All it took was a single `git push`. Here's how we did it (CVE-2026-3854) 🧵⬇️

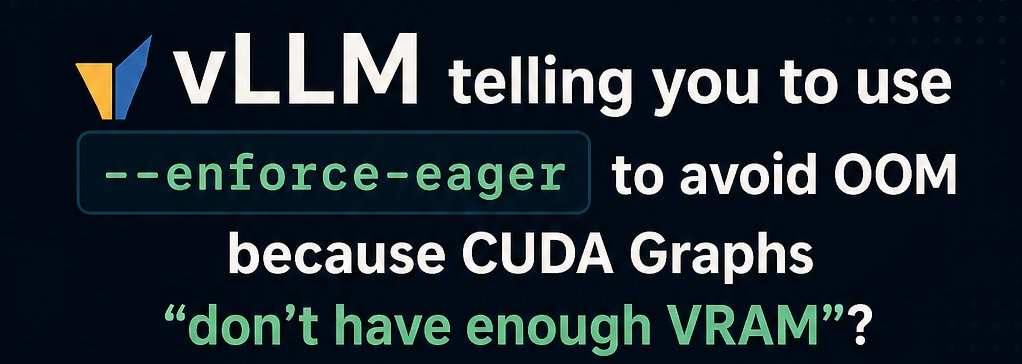

I've completely changed my mind about 5.4 vs 5.5. Gave them the exact same task to investigate a fairly tricky bug. GPT-5.5 identified the bug and proposed a fix in 6m 59s using 117k tokens. GPT-5.4 took 8m 51s using 201k tokens, but it didn't find the bug and is asking for more information to investigate. Call me impressed.

Yes! But in 1982, my high school didn't teach boys typing because it was for secretaries. Schools didn't anticipate computers in every home just 4 years later 🤓 In 2024, only 14% of US public K-12 schools taught appropriate AI use. History is repeating.