New Article from the From Lab to Life collection: Are We Becoming Obsolete, or Finally Free?

"After 30 years in tech and research, I've reached a profound conclusion about our AI future.

'The future belongs to those who can effectively collaborate with artificial intelligence, not those who fear or ignore it.'

But here's what most people miss: this isn't about becoming obsolete. It's about becoming more human.

In my latest article from the 'From Lab to Life' collection, I break down complex AI developments into language everyone can understand. Because the future of AI shouldn't be decided by tech experts alone - it affects all of us.

The transformation ahead offers an unexpected gift: the possibility of returning to what makes us most human. Meaningful conversations, empathy, and community support, what I call the 'human touch', remain irreplaceable.

AI may process information faster than any human, but it cannot offer the warmth of genuine human connection or the deep satisfaction that comes from meaningful relationships.

I believe we're heading toward an increasingly automated world where humans become curators of experience, facilitators of connection, and guardians of meaning.

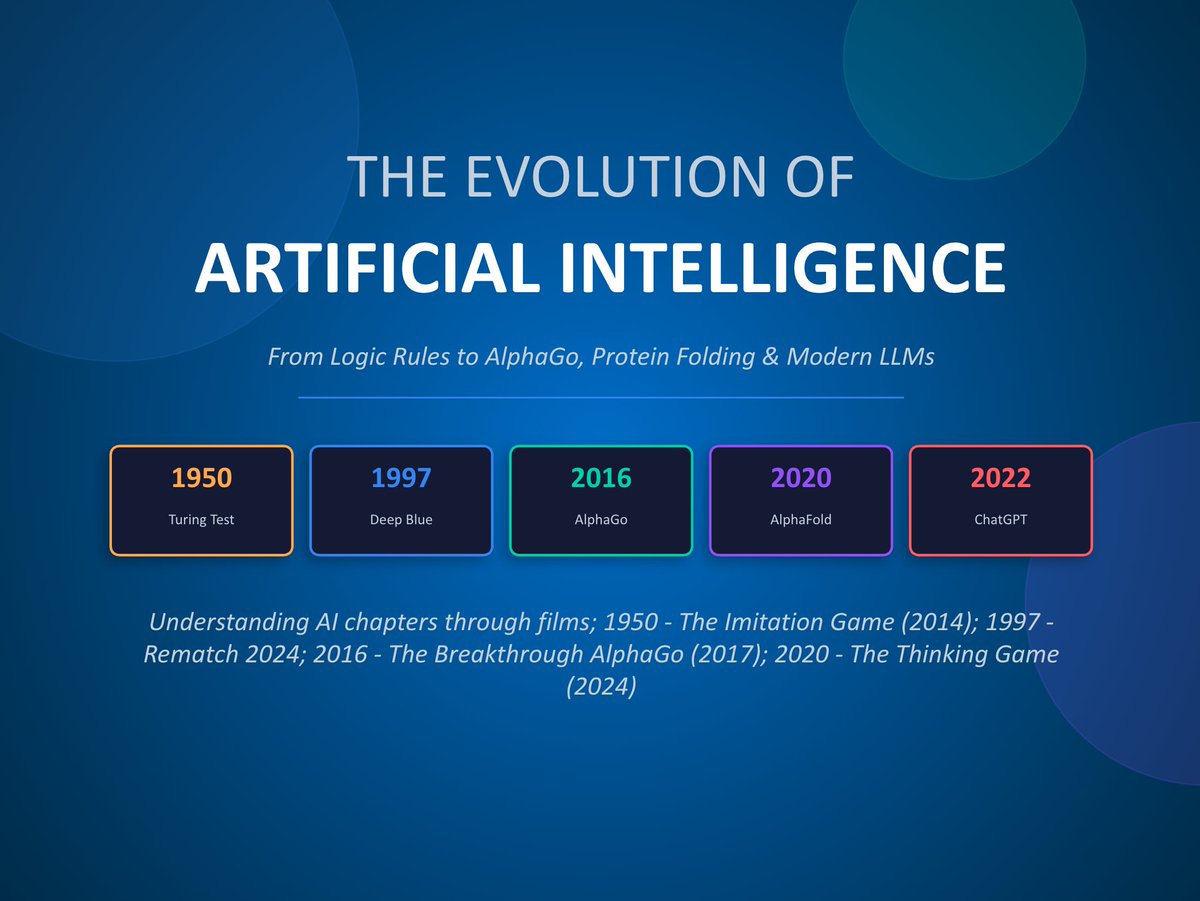

This is part of my #FromLabToLife series - simplifying complex technology so everyone can understand what's coming and how to prepare.

Are we becoming obsolete or finally free? Read my full article: medium.com/@talirezun/are-we-becoming-obsolete-or-finally-free-ed66a2ddc9a4

#AI #FromLabToLife #FutureOfHumanity #Technology #HumanPotential"
