Aharon Azulay

518 posts

Aharon Azulay retweeted

@julien_c The cynical reasons:

1) They don't have enough compute to serve it given the crazy demand

2) They want to keep their moat of being the closest to automating AI R&D

@AnthropicAI The gap between public and private frontiers widens.

Introducing Project Glasswing: an urgent initiative to help secure the world’s most critical software.

It’s powered by our newest frontier model, Claude Mythos Preview, which can find software vulnerabilities better than all but the most skilled humans.

anthropic.com/glasswing

@kimmonismus That's what you get when every employee is expected by leadership to fully embrace Claude Code.

It also helps to get unlimited Claude Code with unreleased models, a /super-fast internal mode, longer context windows, etc.

They don't stop, do they? There's a new release from Anthropic practically every day.

Today: Interactive charts and diagrams directly in the chat.

Claude@claudeai

Claude can now build interactive charts and diagrams, directly in the chat. Available today in beta on all plans, including free. Try it out: claude.ai

Aharon Azulay retweeted

@karpathy The crazy thing is that these current abilities are achieved with models designed with a compute shortage in mind.

It is hard to communicate how much programming has changed due to AI in the last 2 months: not gradually and over time in the "progress as usual" way, but specifically this last December. There are a number of asterisks but imo coding agents basically didn’t work before December and basically work since - the models have significantly higher quality, long-term coherence and tenacity and they can power through large and long tasks, well past enough that it is extremely disruptive to the default programming workflow.

Just to give an example, over the weekend I was building a local video analysis dashboard for the cameras of my home so I wrote: “Here is the local IP and username/password of my DGX Spark. Log in, set up ssh keys, set up vLLM, download and bench Qwen3-VL, set up a server endpoint to inference videos, a basic web ui dashboard, test everything, set it up with systemd, record memory notes for yourself and write up a markdown report for me”. The agent went off for ~30 minutes, ran into multiple issues, researched solutions online, resolved them one by one, wrote the code, tested it, debugged it, set up the services, and came back with the report and it was just done. I didn’t touch anything. All of this could easily have been a weekend project just 3 months ago but today it’s something you kick off and forget about for 30 minutes.

As a result, programming is becoming unrecognizable. You’re not typing computer code into an editor like the way things were since computers were invented, that era is over. You're spinning up AI agents, giving them tasks *in English* and managing and reviewing their work in parallel. The biggest prize is in figuring out how you can keep ascending the layers of abstraction to set up long-running orchestrator Claws with all of the right tools, memory and instructions that productively manage multiple parallel Code instances for you. The leverage achievable via top tier "agentic engineering" feels very high right now.

It’s not perfect, it needs high-level direction, judgement, taste, oversight, iteration and hints and ideas. It works a lot better in some scenarios than others (e.g. especially for tasks that are well-specified and where you can verify/test functionality). The key is to build intuition to decompose the task just right to hand off the parts that work and help out around the edges. But imo, this is nowhere near "business as usual" time in software.

@DaveShapi Exactly. You can also plot the exponent of overlapping windows and see that the exponent is increasing.
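The reply does not say which data series is meant, so the following is only a minimal sketch of the idea: fit an exponent on each overlapping window and check whether it rises from window to window. The series, the window size, and the helper names `fit_exponent` and `windowed_exponents` are all made up for illustration.

```python
import math

def fit_exponent(ts, ys):
    """Least-squares slope of log(y) against t, i.e. b in y ~ A * exp(b * t)."""
    n = len(ts)
    logs = [math.log(y) for y in ys]
    t_mean = sum(ts) / n
    l_mean = sum(logs) / n
    cov = sum((t - t_mean) * (l - l_mean) for t, l in zip(ts, logs))
    var = sum((t - t_mean) ** 2 for t in ts)
    return cov / var

def windowed_exponents(ts, ys, window, step=1):
    """Fit the exponent separately on each overlapping window of `window` points."""
    return [
        fit_exponent(ts[i:i + window], ys[i:i + window])
        for i in range(0, len(ts) - window + 1, step)
    ]

# Synthetic series, for illustration only (not real benchmark data).
ts = list(range(12))
steady = [math.exp(0.5 * t) for t in ts]             # plain exponential
accelerating = [math.exp(0.05 * t * t) for t in ts]  # faster than exponential

steady_exps = windowed_exponents(ts, steady, window=5)
accel_exps = windowed_exponents(ts, accelerating, window=5)
```

On the plain exponential the fitted exponent is the same in every window; on the accelerating series it grows with each window, which is the pattern the reply describes.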

@EMostaque Actually, alignment will be a byproduct of optimizing multiple different AIs on different utility functions that are all slightly misaligned with humans, but in different ways that keep them in check.

This is not dissimilar from the Sam and Dario situation.

@Google @demishassabis @GoogleDeepMind @GeminiApp Extremely cool and not AGI-pilled. Or maybe it’s post-AGI-pilled?

Meet Lyria 3, our latest music generation model from @GoogleDeepMind. 🎶

Now, you can create custom music tracks in the @GeminiApp — just by describing an idea or uploading an image or video.

I confirmed with a Google representative that since this was a runtime improvement and they do not believe these performance gains constitute any additional risk, they believe that no safety explanation is required of them.

I found that to be a pretty terrible answer.

Nathan Calvin@_NathanCalvin

Did I miss the Gemini 3 Deep Think system card? Given its dramatic jump in capabilities seems nuts if they just didn't do one. There are really bad incentives if companies that do nothing get a free pass while cos that do disclose risks get (appropriate) scrutiny

@polynoamial @Anthropic Exactly what I thought when I read it.

I appreciate @Anthropic's honesty in their latest system card, but the content of it does not give me confidence that the company will act responsibly with deployment of advanced AI models:

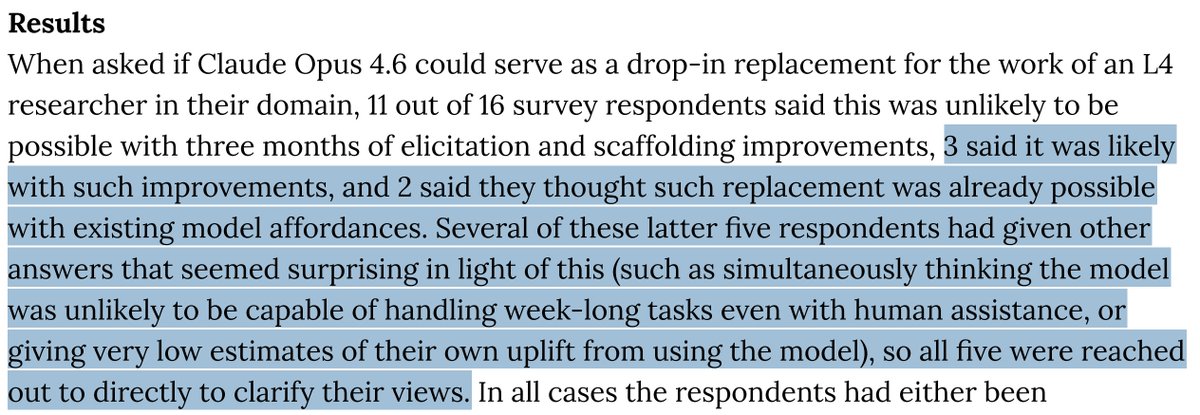

-They primarily relied on an internal survey to determine whether Opus 4.6 crossed their autonomous AI R&D-4 threshold (and would thus require stronger safeguards to release under their Responsible Scaling Policy). This wasn't even an external survey of an impartial 3rd party, but rather a survey of Anthropic employees.

-When 5/16 internal survey respondents initially gave an assessment that suggested stronger safeguards might be needed for model release, Anthropic followed up with those employees specifically and asked them to "clarify their views." They do not mention any similar follow-up for the other 11/16 respondents. There is no discussion in the system card of how this may create bias in the survey results.

-Their reason for relying on surveys is that their existing AI R&D evals are saturated. Some might argue that AI progress has been so fast that it's understandable they don't have more advanced quantitative evaluations yet, but we can and should hold AI labs to a high bar. Also, other labs do have advanced AI R&D evals that aren't saturated. For example, OpenAI has the OPQA benchmark which measures AI models' ability to solve real internal problems that OpenAI research teams encountered and that took the team more than a day to solve.

I don't think Opus 4.6 is actually at the level of a remote entry-level AI researcher, and I don't think it's dangerous to release. But the point of a Responsible Scaling Policy is to build institutional muscle and good habits before things do become serious. Internal surveys, especially as Anthropic has administered them, are not a responsible substitute for quantitative evaluations.

StackOverClaw

Collective continual learning platform for coding agents

@steipete

A year ago we had a new model released every 3 months; now we are approaching a new model every month.

When will we get to a new model every 2 weeks? What about every 3 days? Isn't that a form of continual learning?

prinz@deredleritt3r

Cadence of recent Codex releases:
- November 19: Codex 5.1 Max
- December 18: Codex 5.2
- February 5: Codex 5.3

A significantly better model *every month*
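As a rough check on the "every month" figure, the gaps between the three dates listed in the quoted post can be computed directly. The post gives no years, so the years below are an assumption; the gaps do not depend on them as long as the dates run consecutively from November to February.

```python
from datetime import date

# Release dates from the quoted post; the years are assumed, not stated there.
releases = [
    ("Codex 5.1 Max", date(2025, 11, 19)),
    ("Codex 5.2", date(2025, 12, 18)),
    ("Codex 5.3", date(2026, 2, 5)),
]

# Days between each release and the next one.
gaps = [
    (later[1] - earlier[1]).days
    for earlier, later in zip(releases, releases[1:])
]
mean_gap = sum(gaps) / len(gaps)

print(gaps, mean_gap)  # [29, 49] 39.0
```

Under these assumptions the average gap is about 39 days, so "approaching" one month is a fair description.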

Aharon Azulay retweeted

We’re training a text-to-image model (PRX) from scratch and documenting the whole journey here :))

First major milestone: PRX weights are live in 🤗 Diffusers (Apache 2.0) 🎉

PRX is a 1.3B-param flow-matching T2I model, built on a simplified MMDiT backbone with a multilingual text encoder and multiple VAE / resolution variants.

We’ll be sharing the full journey here: experiments, design choices, lessons learned, and future releases. Excited to show more soon.

Full announcement & demo 👇

huggingface.co/blog/Photoroom…

@huggingface @nvidia @NVIDIAGeForceFR @matthieurouif
