Dillon Laird

210 posts

@DillonLaird

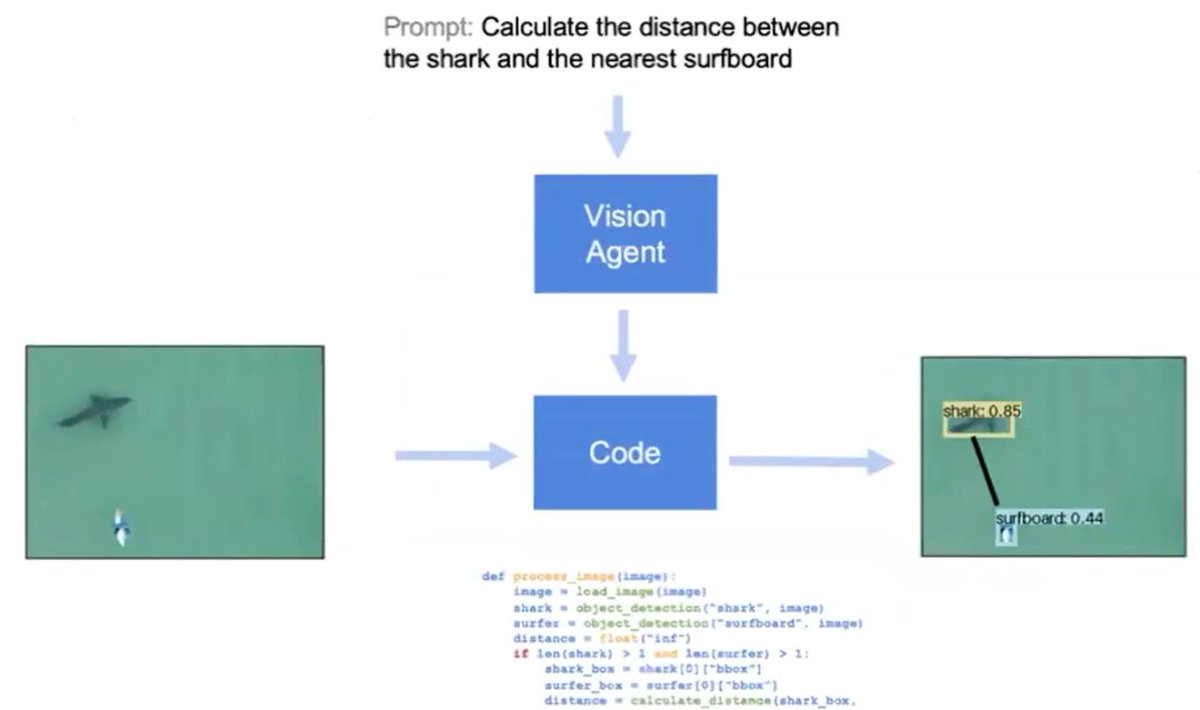

Working on vision models @LandingAI 🤖 @StanfordEng @uwcse 👨‍🎓 neovim enthusiast 💻 I help neural networks find local minima 🧠

🎙️ Speakers

We got really great feedback on the lightning talks. I want to thank all the speakers who made time on Saturday and presented cool demos. These founders and AI companies are definitely worth following:

📢 Vasek Mlejnsky (@mlejva) - Founder and CEO of @e2b_dev
📢 Brendon Geils (@BrendonGeils) - Founder and CEO of @AthenaIntell
📢 Dillon Laird (@DillonLaird) - Founding engineer at @LandingAI
📢 Atai Barkai (@ataiiam) - Founder of @CopilotKit
📢 Nate Sesti (@NateSesti) - Co-founder of @continuedev
📢 @BazeleyMikiko - Devrel at @FireworksAI_HQ
📢 Sam Stowers (@sammakesthings) - Engineer at @weights_biases
📢 Yohei Nakajima (@yoheinakajima) - Founder of @babyAGI_