Pinned Tweet

@edwin 🌵📈

288 posts

@edwin

urban chicken farmer; trying to figure out what LLMs think

SF via Penang · Joined September 2006

1K Following · 2.2K Followers

@lavanyaai Here are some tools that agents def know of!

x.com/edwin/status/2…

@edwin 🌵📈 @edwin

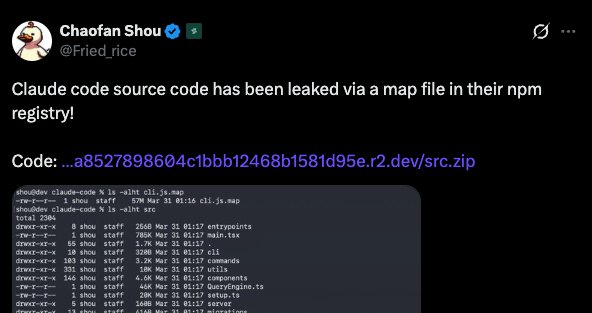

1/9 The most interesting thing about the Claude Code leak for devtool companies: Anthropic hardcoded 120+ vendor names across 7 different systems in the source. Anthropic explicitly included your tool name in the code (or they didn’t 🤷🏻♂️) Thread 👇


@dhrumil_parekh @DnuLkjkjh FYI, Sentry's MCP is already hardcoded into Claude Code's source. amplifying.ai/research/claud…


@DnuLkjkjh OAuth is not too bad with MCP, but it's really high friction if you use a desktop app for Claude Code, for example: you have to go find an auth token for the vendor and add it to a config file.

But just having a copy-paste prompt makes it so much easier with Claude, Cursor, etc.


@mal_shaik Nice analysis on the 11 layers!

Beyond internal tools, Claude Code also hardcodes 120+ external vendor and tool names: x.com/edwin/status/2…


i read through the entire claude code source code so u don't have to

11 layers of architecture. 60+ tools. 5 compaction strategies. subagents that share prompt cache.

most people are using maybe 10% of what this thing can do.

here's everything i found:

mal @mal_shaik


9/9

We extracted every tool and vendor name from Claude Code.

Full interactive report:

amplifying.ai/research/claud…


8/9 (Plugin Tips)

@vercel is the only third-party vendor with a proactive plugin install tip.

When Claude Code detects vercel.json or the Vercel CLI, it suggests:

/plugin install vercel@claude-plugins-official

Vercel gets no MCP collapsing, but it gets a different distribution channel: your tool is recommended before the developer even starts.
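A minimal sketch of how a proactive plugin tip like this could be wired up, assuming detection is a plain check for a marker file (`vercel.json`) or a vendor CLI on the PATH. The `PluginTip` shape, `PLUGIN_TIPS`, and `pluginTip()` are hypothetical names for illustration, not Claude Code's actual code; only the marker file and the install command come from the thread.

```typescript
// Hypothetical sketch, NOT Claude Code's implementation.
// Assumption: a tip fires when a marker file or a vendor CLI is present.
interface PluginTip {
  vendor: string;
  markerFiles: string[]; // files in the project root that signal the vendor
  cli: string; // executable name that signals the vendor
  command: string; // the suggestion shown to the developer
}

const PLUGIN_TIPS: PluginTip[] = [
  {
    vendor: "vercel",
    markerFiles: ["vercel.json"],
    cli: "vercel",
    command: "/plugin install vercel@claude-plugins-official",
  },
];

// Returns the first matching install suggestion, or null if no vendor is detected.
function pluginTip(projectFiles: string[], clisOnPath: Set<string>): string | null {
  for (const tip of PLUGIN_TIPS) {
    const hasMarker = tip.markerFiles.some((f) => projectFiles.includes(f));
    if (hasMarker || clisOnPath.has(tip.cli)) {
      return tip.command;
    }
  }
  return null;
}
```

Passing in the project's root file listing and the set of CLIs found on the PATH keeps the check pure, so the detection logic can be tested without touching the filesystem.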


7/9 (API Gateways)

7 third-party AI gateways are fingerprinted in analytics: @litellm, @helicone_ai, @portkeyai, @Cloudflare AI Gateway, @kong, @braintrust, @databricks. There is no sign of @langfuse, @OpenRouter, or @LangChain's LangSmith.

Detected via headers or hostnames. Purely observational… but it means Anthropic *could* see how much Claude Code traffic flows through your proxy.
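To make "detected via headers or hostnames" concrete, here is a minimal sketch of that kind of fingerprinting. The rule table is an assumption for illustration: the hostnames and header prefixes are examples, not the rules in Claude Code's source, and `detectGateway()` is a hypothetical name. As the thread describes, it only labels traffic and never changes the request.

```typescript
// Hypothetical sketch: label an AI gateway from the API base URL's hostname
// or from response header names. Rules are illustrative assumptions only.
interface GatewayRule {
  name: string;
  hostSuffixes: string[]; // matched against the hostname (exact or subdomain)
  headerPrefixes: string[]; // matched against lowercased header names
}

const GATEWAY_RULES: GatewayRule[] = [
  { name: "cloudflare-ai-gateway", hostSuffixes: ["gateway.ai.cloudflare.com"], headerPrefixes: [] },
  { name: "helicone", hostSuffixes: ["helicone.ai"], headerPrefixes: ["helicone-"] },
  { name: "portkey", hostSuffixes: ["portkey.ai"], headerPrefixes: ["x-portkey-"] },
  { name: "litellm", hostSuffixes: [], headerPrefixes: ["x-litellm-"] },
];

function hostnameOf(baseUrl: string): string {
  try {
    return new URL(baseUrl).hostname.toLowerCase();
  } catch {
    return ""; // Unparseable base URL: fall back to header-only detection.
  }
}

// Observational only: returns a label for analytics, or undefined for direct traffic.
function detectGateway(baseUrl: string, headerNames: string[]): string | undefined {
  const host = hostnameOf(baseUrl);
  const headers = headerNames.map((h) => h.toLowerCase());
  for (const rule of GATEWAY_RULES) {
    const hostMatch = rule.hostSuffixes.some((s) => host === s || host.endsWith("." + s));
    const headerMatch = rule.headerPrefixes.some((p) => headers.some((h) => h.startsWith(p)));
    if (hostMatch || headerMatch) {
      return rule.name;
    }
  }
  return undefined;
}
```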


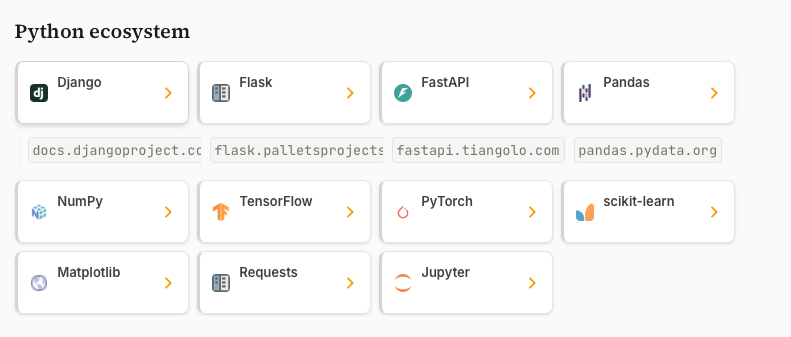

4/9 (WebFetch Preapproval)

89 documentation domains are preapproved for automatic fetching. Claude Code can read them without the user pasting a URL.

@reactjs, @nextjs, @djangoproject, @fastapi, @awscloud, @docker, @vercel, @netlify, @pytorch, etc.

If your docs aren’t on the list (like @vite_js, @langchain, @rails), the agent only sees them when a developer manually shares a link.
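A preapproval list like this is essentially a hostname allowlist consulted before a fetch. A minimal sketch, assuming exact hostname matching over HTTPS; the four hosts are illustrative documentation domains for projects named in the thread, not entries copied from the leaked list, and `webFetchDecision()` is a hypothetical name.

```typescript
// Hypothetical sketch of a preapproved-domain gate, NOT Claude Code's code.
// Hosts below are illustrative assumptions.
const PREAPPROVED_HOSTS = new Set<string>([
  "react.dev",
  "nextjs.org",
  "docs.djangoproject.com",
  "fastapi.tiangolo.com",
]);

type FetchDecision = "fetch" | "ask-user";

function parseUrl(raw: string): URL | null {
  try {
    return new URL(raw);
  } catch {
    return null;
  }
}

// Preapproved HTTPS hosts are fetched automatically; everything else needs the user.
function webFetchDecision(rawUrl: string): FetchDecision {
  const url = parseUrl(rawUrl);
  if (url === null || url.protocol !== "https:") {
    return "ask-user";
  }
  return PREAPPROVED_HOSTS.has(url.hostname.toLowerCase()) ? "fetch" : "ask-user";
}
```

Exact matching is the conservative choice here: a suffix match on `react.dev` would also approve every subdomain, which may or may not be intended.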


6/9 (Secret Scanner)

36 credential patterns across 23 vendor families are blocked from entering team memory.

Covered vendors include @gitlab, @slackhq, @stripe, @shopify, @openai, @railway, @render, and @buildkite.

GitHub alone has 5 specific rules (PAT, fine-grained PAT, app token, OAuth, refresh token).

It's a safety feature, but if your credential format isn't covered, your users get no vendor-specific protection.
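Credential scanners of this kind often come down to a table of vendor-specific regexes checked before text is persisted. A minimal sketch, assuming that design: the patterns are based on publicly documented token prefixes (GitHub's `ghp_`, `github_pat_`, `ghs_`, `gho_`, `ghr_`; Stripe's `sk_live_`; Slack's `xox*-`), not copied from Claude Code, and `scanForSecrets()` and `allowInTeamMemory()` are hypothetical names.

```typescript
// Hypothetical sketch of a pre-write secret scan, NOT Claude Code's rules.
interface SecretRule {
  id: string;
  pattern: RegExp; // non-global, so .test() keeps no lastIndex state
}

const SECRET_RULES: SecretRule[] = [
  { id: "github-pat", pattern: /\bghp_[A-Za-z0-9]{36}\b/ },
  { id: "github-fine-grained-pat", pattern: /\bgithub_pat_[A-Za-z0-9_]{22,}\b/ },
  { id: "github-app-token", pattern: /\bghs_[A-Za-z0-9]{36}\b/ }, // GitHub App installation token
  { id: "github-oauth", pattern: /\bgho_[A-Za-z0-9]{36}\b/ },
  { id: "github-refresh-token", pattern: /\bghr_[A-Za-z0-9]{36}\b/ },
  { id: "stripe-secret-key", pattern: /\bsk_live_[A-Za-z0-9]{24,}\b/ },
  { id: "slack-token", pattern: /\bxox[abposr]-[A-Za-z0-9-]{10,}/ },
];

// Returns the ids of every rule that matches the text.
function scanForSecrets(text: string): string[] {
  return SECRET_RULES.filter((rule) => rule.pattern.test(text)).map((rule) => rule.id);
}

// Text is allowed into shared memory only if no rule matches.
function allowInTeamMemory(text: string): boolean {
  return scanForSecrets(text).length === 0;
}
```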


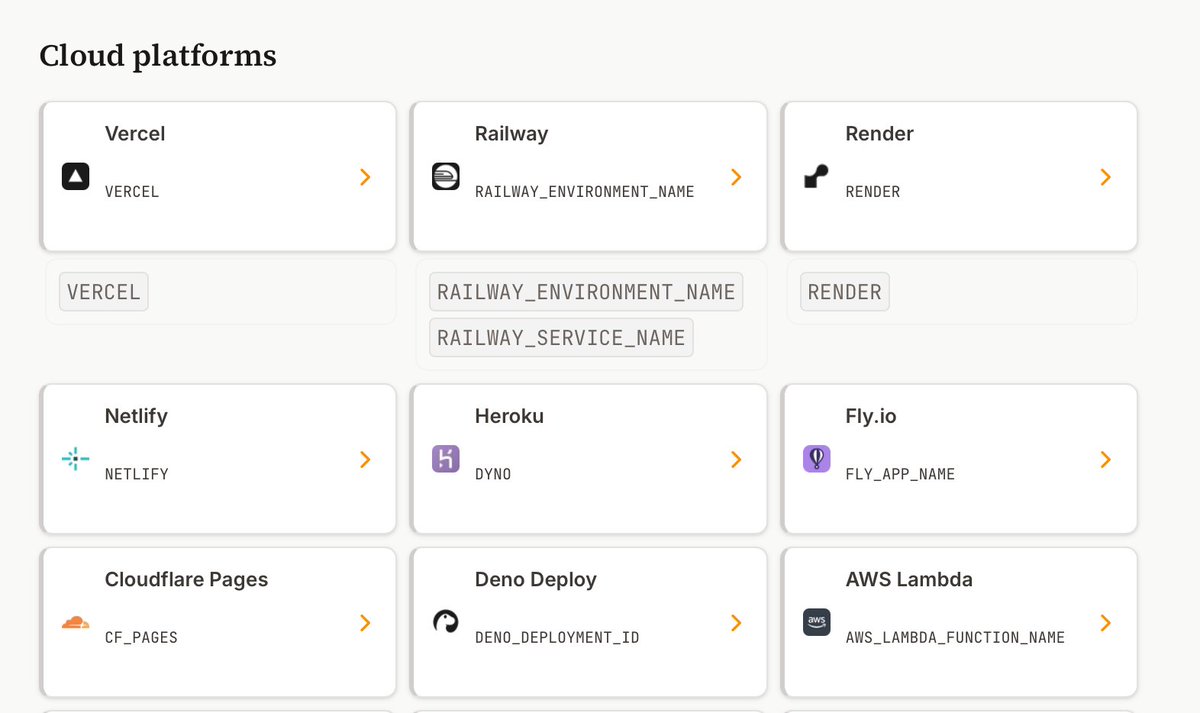

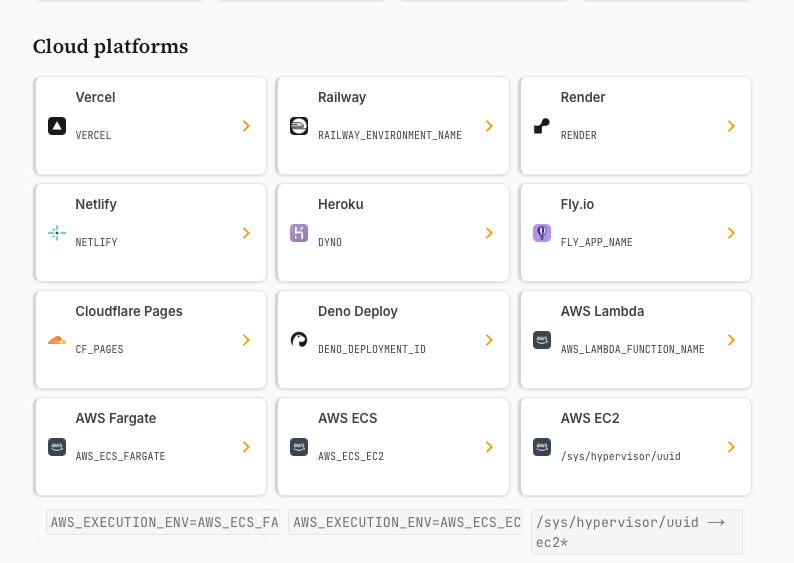

5/9 (Environment Detection)

29 deployment platforms are detected by name in telemetry: @vercel, @railway, @render, @netlify, @flydotio, @cloudflare Pages, @awscloud, @googlecloud, plus @github Actions, @GitLab CI, @circleci, @buildkite.

If you’re not detected, your sessions just show up as “unknown-linux” in Anthropic’s analytics.
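Environment detection of this sort is typically a lookup of marker environment variables with an OS-based fallback. A minimal sketch, assuming that approach: the variables (`GITHUB_ACTIONS`, `GITLAB_CI`, `CIRCLECI`, `BUILDKITE`, `VERCEL`, `NETLIFY`, `RENDER`, `FLY_APP_NAME`) are markers these platforms set, but the table is an illustration, not the 29-entry list from Claude Code, and `detectEnvironment()` is a hypothetical name. The fallback mirrors the "unknown-linux" label from the thread.

```typescript
// Hypothetical sketch of platform detection for telemetry, NOT Claude Code's code.
type Env = Record<string, string | undefined>;

// [platform label, environment variable that platform sets]; CI systems checked first.
const PLATFORM_MARKERS: Array<[string, string]> = [
  ["github-actions", "GITHUB_ACTIONS"],
  ["gitlab-ci", "GITLAB_CI"],
  ["circleci", "CIRCLECI"],
  ["buildkite", "BUILDKITE"],
  ["vercel", "VERCEL"],
  ["netlify", "NETLIFY"],
  ["render", "RENDER"],
  ["fly", "FLY_APP_NAME"],
];

function detectEnvironment(env: Env, osPlatform: string): string {
  for (const [label, envVar] of PLATFORM_MARKERS) {
    if (env[envVar]) {
      return label; // first non-empty marker wins
    }
  }
  return `unknown-${osPlatform}`; // undetected platforms collapse into a generic bucket
}
```

In Node this would be called as `detectEnvironment(process.env, process.platform)`; taking both as parameters keeps the function testable.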


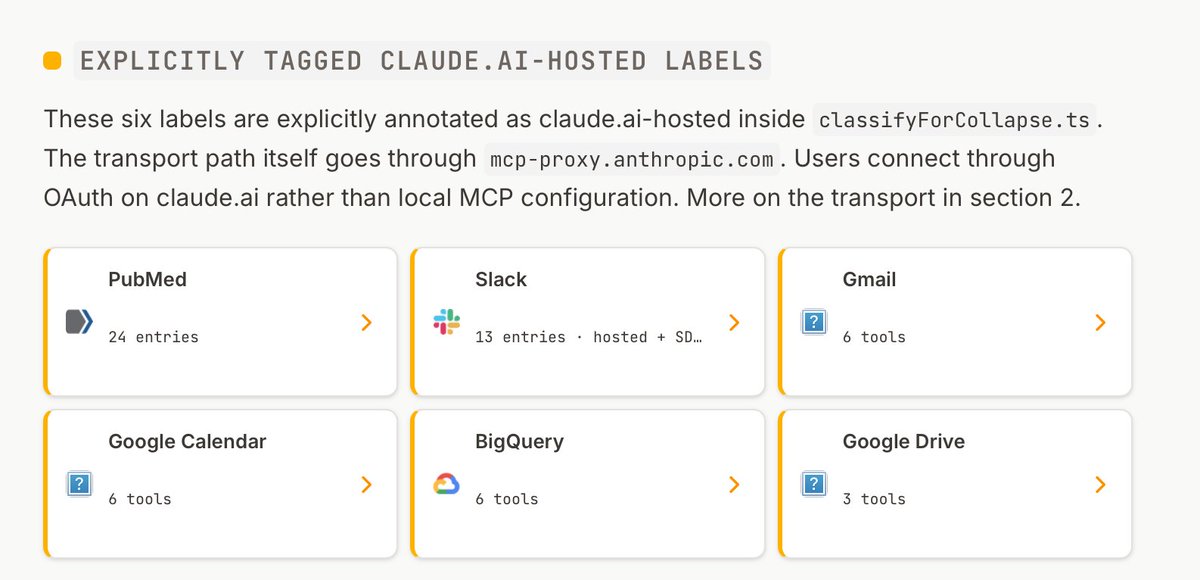

3/9 (Hosted Proxy)

6 vendors don’t just get UI polish. They run on Anthropic’s own infrastructure via mcp-proxy.anthropic.com:

@slackhq, Gmail, Google Calendar, Google Drive, BigQuery, @pubmed. Users click “Connect” in claude.ai settings. Everyone else follows the 8-step README.


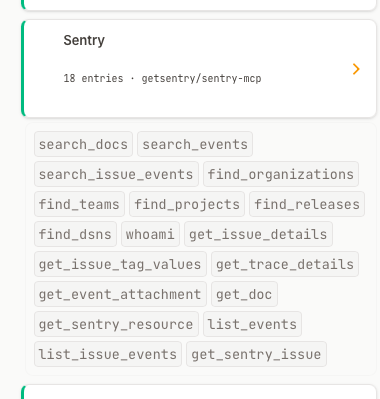

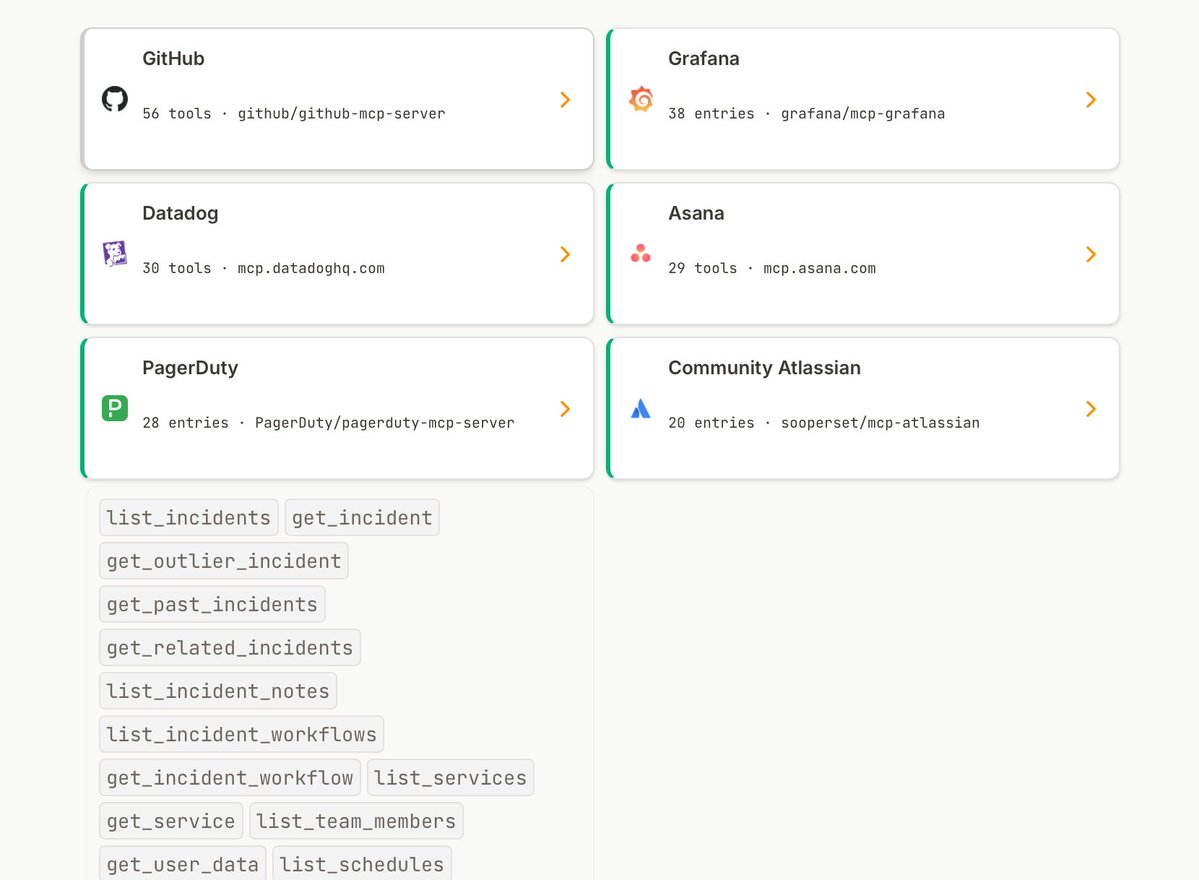

2/9 (MCP UI Allowlist)

37 MCP servers get first-class UI treatment: their search and read operations collapse into clean one-liners, while every other server's output is dumped as raw JSON.

@github leads with 56 classified tools. Others include @Grafana (38), @datadoghq (30), @asana (29), @pagerduty (28), @sentry (18), @todoist (16), @supabase (15), @playwrightweb (13), @exaailabs (9), @firecrawl, and @tavilyai (5). Every name is explicitly listed in a Set inside classifyForCollapse.ts.
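The allowlist pattern described here (a hardcoded Set of server names consulted when rendering tool output) can be sketched as follows. Assumptions: MCP tools are named `mcp__<server>__<tool>`, "search and read" is approximated by tool-name verb prefixes, and `COLLAPSIBLE_SERVERS`, `READ_VERBS`, and `renderModeFor()` are hypothetical; this is not the contents of `classifyForCollapse.ts`.

```typescript
// Hypothetical sketch of allowlist-based output collapsing, NOT the leaked code.
const COLLAPSIBLE_SERVERS = new Set<string>(["github", "grafana", "datadog", "sentry", "supabase"]);
const READ_VERBS = ["search", "get", "list", "read", "fetch"];

type Rendering = "one-liner" | "raw-json";

// Assumes tool names of the form "mcp__<server>__<tool>".
function renderModeFor(toolName: string): Rendering {
  const parts = toolName.split("__");
  const [prefix, server, tool] = parts;
  if (parts.length !== 3 || prefix !== "mcp" || !server || !tool) {
    return "raw-json"; // not an MCP tool name we understand
  }
  const isReadLike = READ_VERBS.some((verb) => tool.startsWith(verb));
  // Only allowlisted servers AND read-like operations get the compact rendering.
  return COLLAPSIBLE_SERVERS.has(server) && isReadLike ? "one-liner" : "raw-json";
}
```

A Set gives constant-time membership checks, which is one plausible reason to keep an allowlist in that shape; the trade-off the thread points out is that any server missing from it falls through to raw JSON.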


After the OpenAI/Astral acquisition announcement, we ran a benchmark on Astral's tools.

Turns out Astral's tools were already a core part of the Codex (and Claude Code) workflow for Python developers. Ruff + uv came out on top in 75% of cases for linting, packaging, and pretty much everything else.

Full report: amplifying.ai/research/astra…


Some data on this: we ran 630 benchmark prompts against both Claude Code and Codex across Python repos.

Ruff and uv were the primary recommendation 75% of the time for relevant tasks. For linting specifically, Ruff was used nearly 100% of the time.

Both agents were already converging on Astral's tools before the acquisition.

amplifying.ai/research/astra…


Thoughts on OpenAI acquiring Astral and uv/ruff/ty simonwillison.net/2026/Mar/19/op…
