LiteLLM (YC W23) retweeted

Thanks to my personal fav @LiteLLM, switching to a faster api model was eassssy. So been using @AnthropicAI Haiku

LiteLLM (YC W23)

961 posts

@LiteLLM

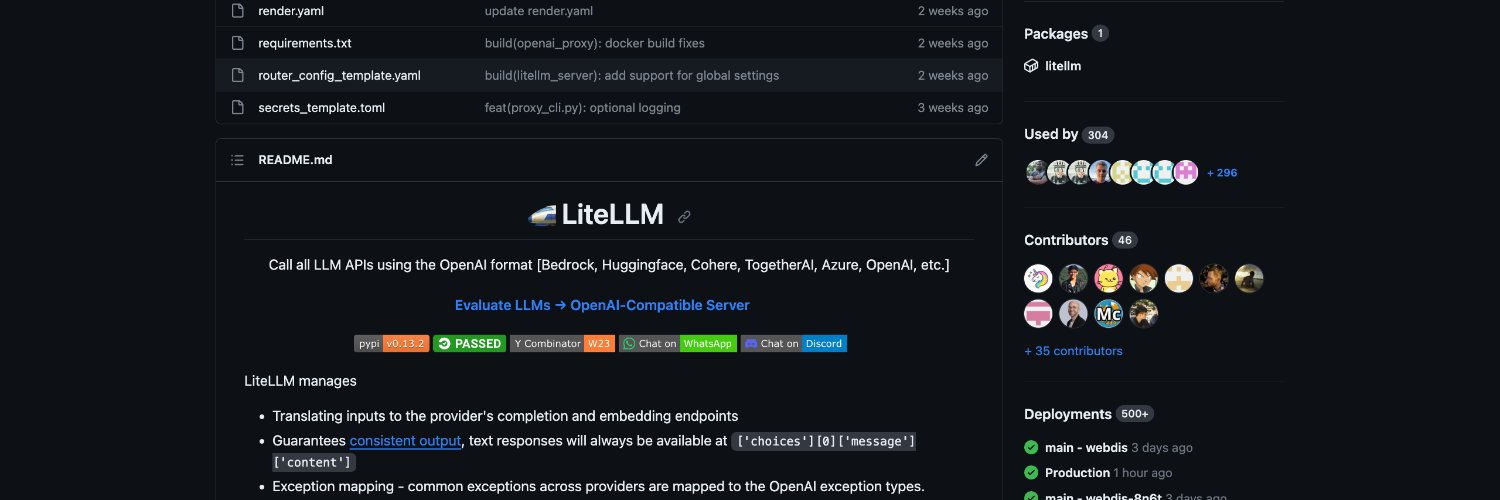

Call every LLM API like it's OpenAI 👉 https://t.co/UV2PpapQo7

Want to use models from Google's Vertex AI Model Garden with an OpenAI-compatible API? My new video shows how to set up @LiteLLM as a local proxy to do just that. Simplify your workflow and call models like Qwen, DeepSeek, and more through a unified interface. #AI #LLM #VertexAI #LiteLLM #GoogleCloud
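A rough sketch of what calling such a local proxy could look like from Python, using only the standard library. The proxy address (`http://localhost:4000`), the API key, and the model alias `my-vertex-model` are placeholders assumed for illustration and are not taken from the post or the video; the request body follows the OpenAI chat completions shape that the proxy is described as accepting.

```python
import json
import urllib.request

# Assumed values, not from the post: adjust to match your own proxy setup.
PROXY_BASE_URL = "http://localhost:4000"   # hypothetical local proxy address
PROXY_API_KEY = "sk-placeholder"           # hypothetical key configured on the proxy
MODEL_ALIAS = "my-vertex-model"            # hypothetical alias defined in the proxy config


def build_chat_request(prompt, model=MODEL_ALIAS,
                       base_url=PROXY_BASE_URL, api_key=PROXY_API_KEY):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send_chat_request(request, timeout=30.0):
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a proxy running, `send_chat_request(build_chat_request("Hello"))` would return the model's reply. Because the request shape stays the same, switching between models such as Qwen or DeepSeek should only require changing the `model` value.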

New blog post by @AmanGokrani: Everyone says Claude Code "just works" like magic. He proxied its API calls to see what's happening. The secret? It's riddled with <system-reminder> tags that never let it forget what it's doing. (1/6) [🔗 link in final post with system prompt]