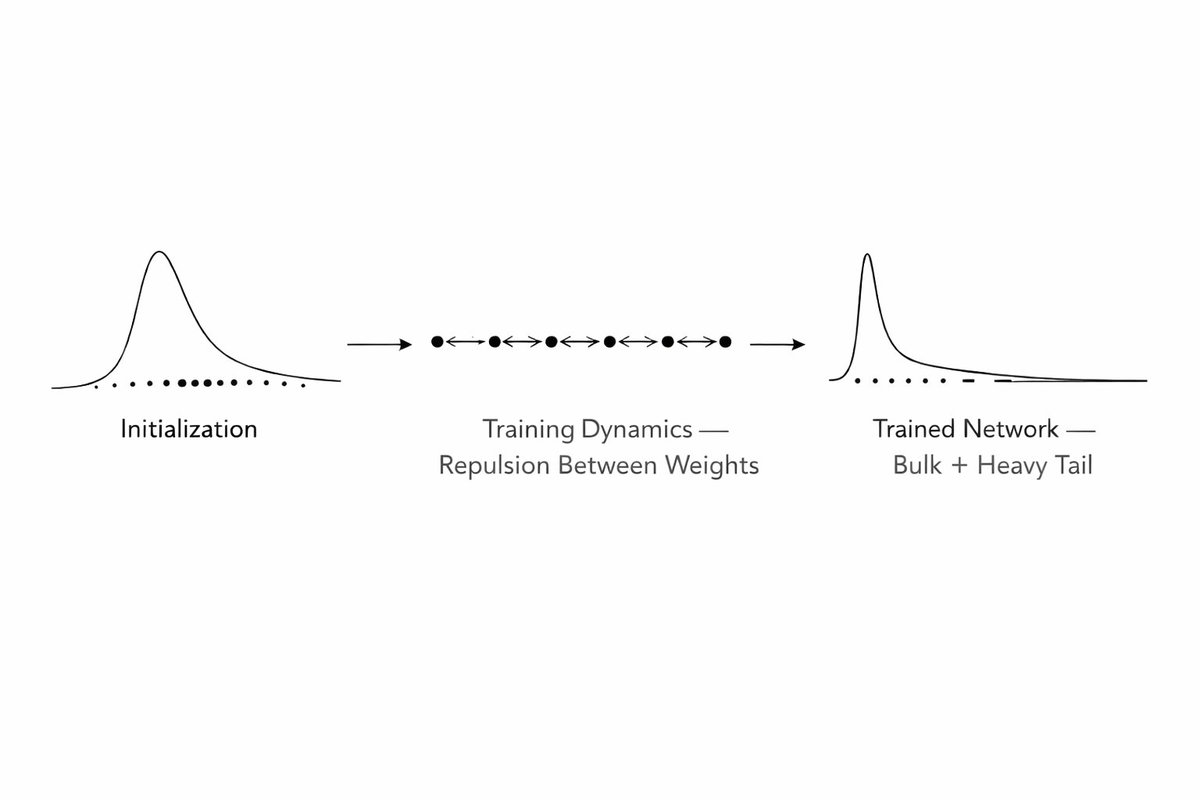

🚨 New Preprint! Ever wondered how grid cells form multiple discrete modules? Interested in continuous attractors and modularity? With @FieteGroup, we discover + generalize a physical mechanism for forming modules from smoothly varying parameters in a dynamical system! 👇 (1/15)