Calc Consulting

12.5K posts

@CalcCon

Calculation Consulting is a boutique consultancy that specializes in machine learning, AI, and data science

San Francisco · Joined January 2013

3.2K Following · 4.3K Followers

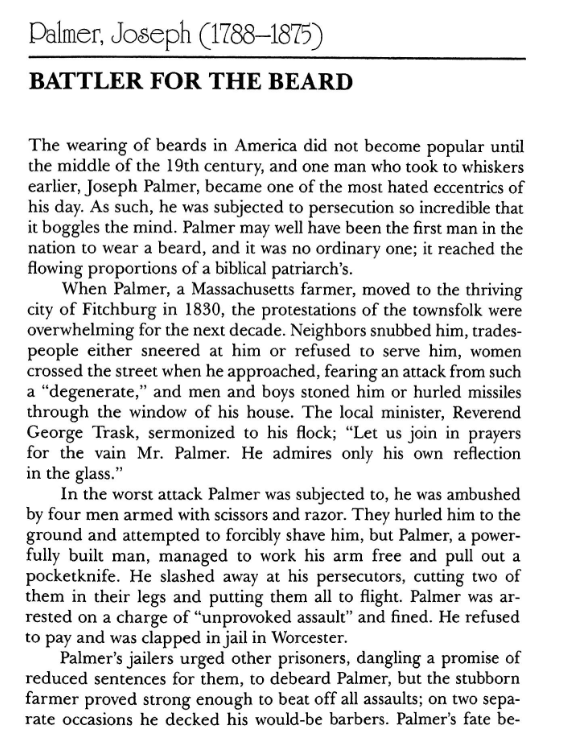

The first guy in America to wear a beard was so hated for it that he used to get into street fights with people trying to forcibly shave him.

Ramin Nasibov@RaminNasibov

What historical fact sounds fake but is true?

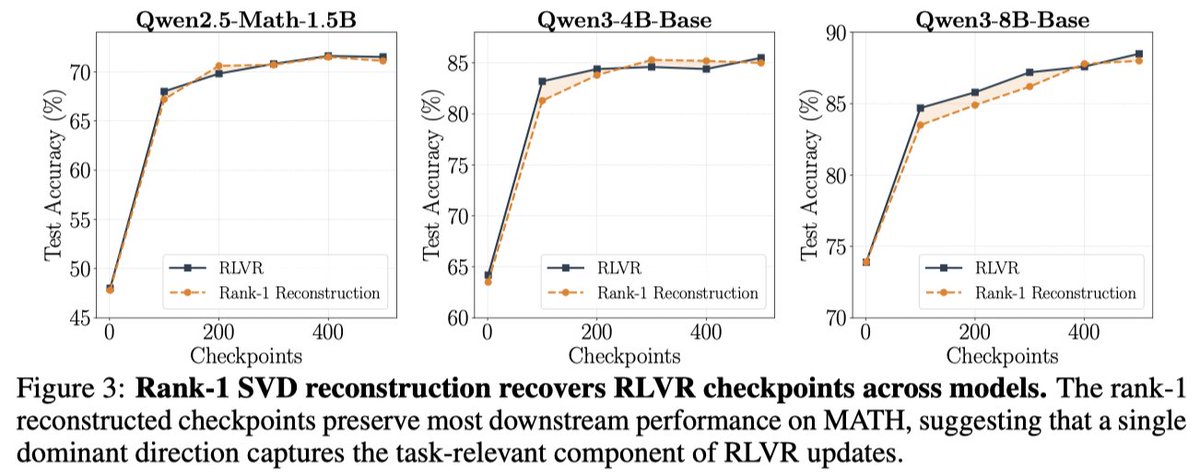

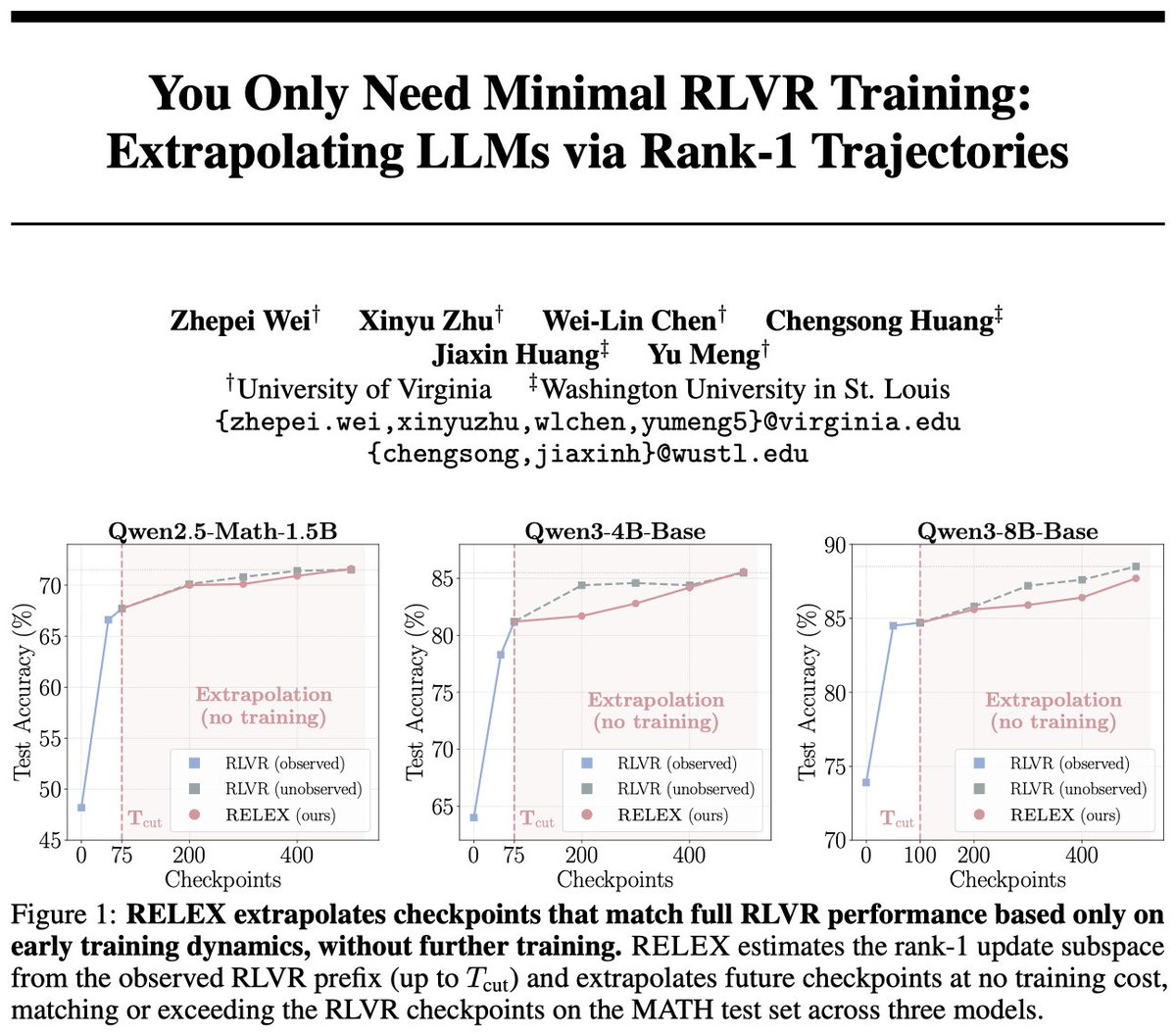

😢RLVR is powerful but expensive

🤯Imagine using <20% RLVR training while achieving 100% performance?

Sounds surprising? We show that minimal RLVR training is enough to know where training is going, and predict future ckpts at no training cost!

📃tinyurl.com/minimal-rlvr

🧵[1/n]

Calc Consulting reposted

𝐃𝐞𝐭𝐞𝐜𝐭𝐢𝐧𝐠 𝐨𝐯𝐞𝐫𝐟𝐢𝐭𝐭𝐢𝐧𝐠 𝐢𝐧 𝐍𝐞𝐮𝐫𝐚𝐥 𝐍𝐞𝐭𝐰𝐨𝐫𝐤𝐬 𝐝𝐮𝐫𝐢𝐧𝐠 𝐥𝐨𝐧𝐠-𝐡𝐨𝐫𝐢𝐳𝐨𝐧 𝐠𝐫𝐨𝐤𝐤𝐢𝐧𝐠 𝐮𝐬𝐢𝐧𝐠 𝐑𝐚𝐧𝐝𝐨𝐦 𝐌𝐚𝐭𝐫𝐢𝐱 𝐓𝐡𝐞𝐨𝐫𝐲

Hari K. Prakash, Charles H Martin

arxiv.org/abs/2605.12394

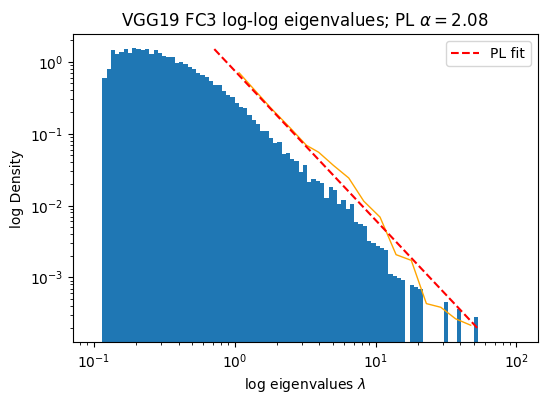

Finally, this helps explain why WeightWatcher α ≈ 2 is often associated with optimal performance.

α ≈ 2 marks the critical boundary where layers are strongly correlated enough to encode useful structure, but not so heavy-tailed that they become dominated by unstable, non-self-averaging fluctuations.

This is the same reason that a WeightWatcher α < 2 is a signal of potential overfitting!

Let 𝐗 be the covariance matrix of the layer weight matrix 𝐖

𝐗 = (1/N)𝐖ᵀ𝐖

Diagonalize it and fit the tail of the eigenvalue spectrum to a power law, ρ(λ) ~ λ^(−α), to estimate the exponent α. The critical value is α = 2.

If α < 2, the fitted spectral tail is in the infinite-variance regime.

This suggests that the layer may be dominated by extreme, non-self-averaging correlations rather than stable, well-distributed learned structure.
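
A minimal sketch of this recipe in Python, assuming NumPy only. It is not the WeightWatcher implementation: the helper names `layer_esd` and `hill_alpha` are hypothetical, the exponent is estimated with a simple Hill-type maximum-likelihood fit over the `k` largest eigenvalues, and the choice of `k` is an assumption that strongly affects the result.

```python
import numpy as np

def layer_esd(W):
    """Eigenvalues of X = (1/N) W^T W for an N x M weight matrix W."""
    N = W.shape[0]
    X = W.T @ W / N
    return np.linalg.eigvalsh(X)  # real eigenvalues in ascending order

def hill_alpha(eigs, k):
    """Hill-type MLE of alpha, assuming rho(lambda) ~ lambda^(-alpha) in the tail.

    Uses the k largest eigenvalues; the (k+1)-th largest acts as lambda_min.
    """
    lam = np.sort(np.asarray(eigs))[::-1]
    tail, lam_min = lam[:k], lam[k]
    return 1.0 + k / np.sum(np.log(tail / lam_min))

# Illustrative run on a synthetic heavy-tailed matrix (not a trained layer).
rng = np.random.default_rng(0)
W = rng.standard_t(df=3, size=(512, 128))
eigs = layer_esd(W)
alpha = hill_alpha(eigs, k=len(eigs) // 4)
print(f"estimated alpha = {alpha:.2f}  (alpha < 2 would flag the layer)")
```

On a real model, `W` would be a layer's weight matrix; a more careful fit would also select the start of the tail rather than fixing `k` by hand.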

While everyone is thinking about how to bound the generalization error, they never ask: what does it mean to be unbounded?

Traps cause overfitting because they have non-vanishing variance.

And it is impossible to form a bound of any kind on a system if the variance does not vanish as the system grows.

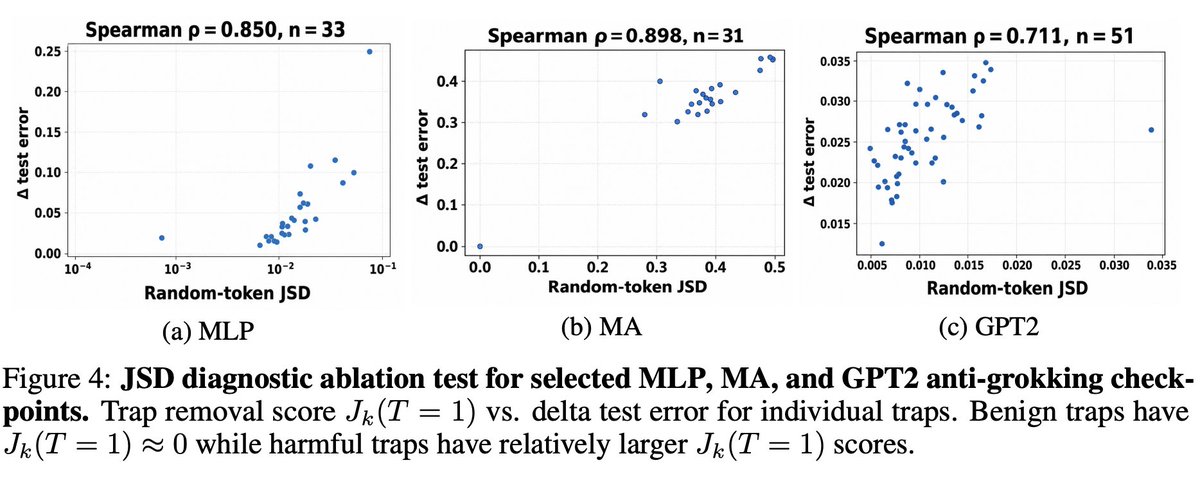

Traps can be harmful or benign. They can be distinguished using a simple Jensen-Shannon Divergence Ablation Test.

The test is easy to run.

1) Remove the trap from the model, replace it with a random vector

2) Pass random inputs through the 'ablated' model and the original model

3) Measure the Jensen-Shannon Divergence (JSD) between the output logits of both models

The classification is:

• Harmful trap: replacement changes logits and improves or hurts the test accuracy.

• Benign trap: replacement has a negligible effect on the test accuracy.
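
The three steps above can be sketched on a toy model. Everything specific here is an assumption for illustration: the "model" is a single linear layer, the trap is taken to be the top singular component of `W`, and "replace it with a random vector" is interpreted as swapping that component's singular vectors for random unit vectors at the same scale. The JSD is computed on the softmax of the logits. The function names are hypothetical.

```python
import numpy as np

def softmax(z):
    z = z - z.max(axis=-1, keepdims=True)  # subtract row max for stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def jsd(p, q, eps=1e-12):
    """Row-wise Jensen-Shannon divergence (natural log), in [0, ln 2]."""
    p = np.clip(p, eps, 1.0)
    q = np.clip(q, eps, 1.0)
    m = 0.5 * (p + q)
    kl_pm = np.sum(p * np.log(p / m), axis=-1)
    kl_qm = np.sum(q * np.log(q / m), axis=-1)
    return 0.5 * kl_pm + 0.5 * kl_qm

def ablate_top_component(W, rng):
    """Step 1 (assumed form): replace s1*u1*v1^T by s1*u_r*v_r^T, u_r and v_r random."""
    U, s, Vt = np.linalg.svd(W, full_matrices=False)
    u_r = rng.standard_normal(W.shape[0])
    u_r /= np.linalg.norm(u_r)
    v_r = rng.standard_normal(W.shape[1])
    v_r /= np.linalg.norm(v_r)
    return W - s[0] * np.outer(U[:, 0], Vt[0]) + s[0] * np.outer(u_r, v_r)

def ablation_jsd(W, n_inputs=256, seed=0):
    """Steps 2 and 3: mean JSD between original and ablated outputs on random inputs."""
    rng = np.random.default_rng(seed)
    W_abl = ablate_top_component(W, rng)
    X = rng.standard_normal((n_inputs, W.shape[1]))  # random inputs
    return float(np.mean(jsd(softmax(X @ W.T), softmax(X @ W_abl.T))))

# Toy layer: 10 classes, 32 features.
W = np.random.default_rng(1).standard_normal((10, 32))
print(f"mean JSD after ablation: {ablation_jsd(W):.4f}")
```

The JSD only measures how much the outputs move. Deciding between harmful and benign still requires comparing test accuracy before and after the replacement, as in the classification above.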

Traps tend to be either highly localized or highly delocalized. These are analogous to phenomena that arise in physics in the study of phase transitions.

A localized trap is like Anderson localization.

A delocalized trap is like a Bose–Einstein condensate,

and is also very similar to what happens in the Curie–Weiss mean-field model of magnetization.

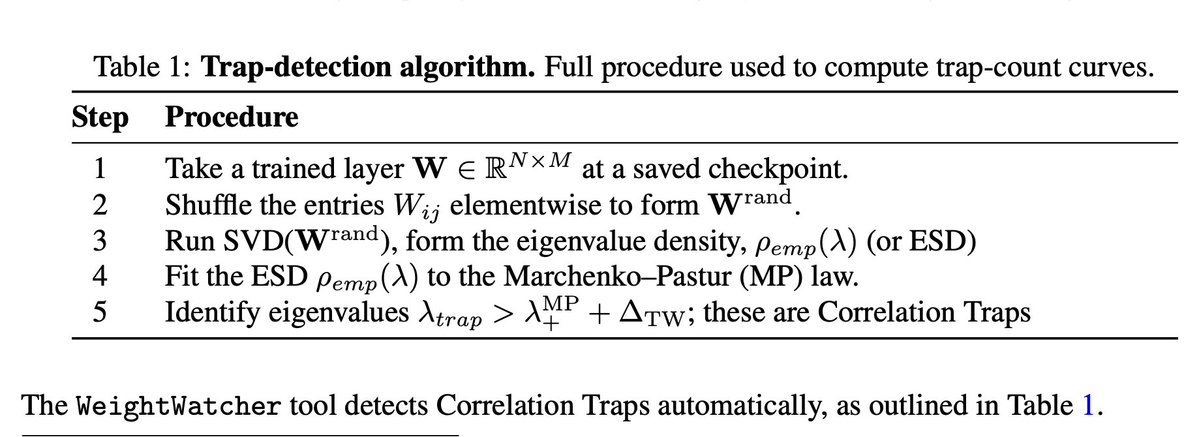

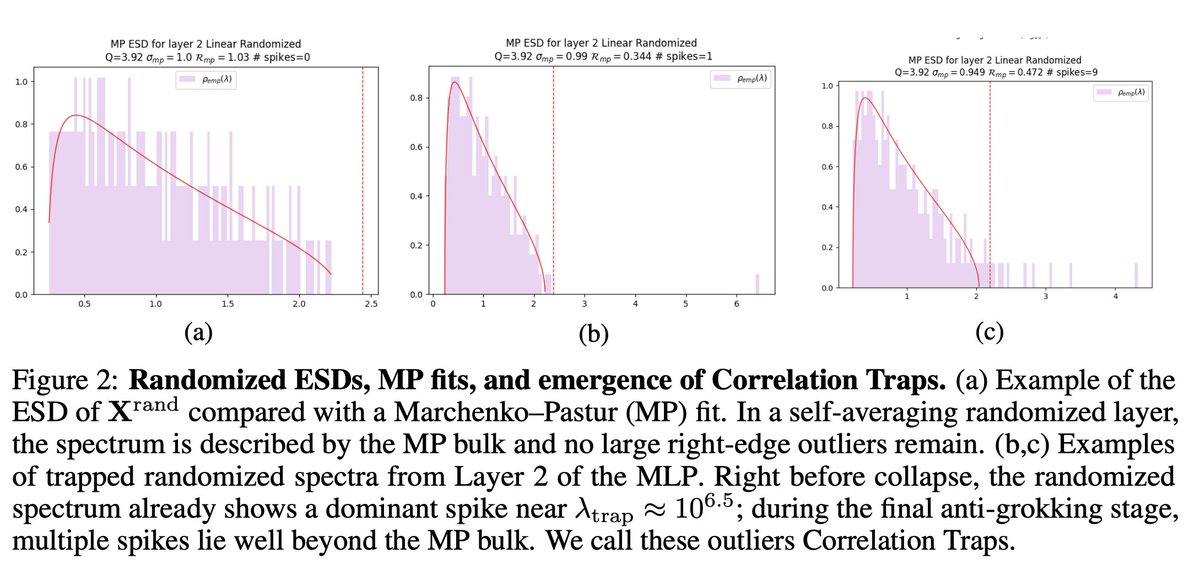

What is a Correlation Trap, and why do Correlation Traps cause the test accuracy to drop?

If we randomize a layer weight matrix elementwise, W → rand(W), the matrix should now behave as if its elements are i.i.d. In particular,

the eigenvalues of the covariance matrix of rand(W) should obey the Marchenko-Pastur (MP) law of Random Matrix Theory (RMT), to within finite-size Tracy-Widom (TW) fluctuations.

A Correlation Trap is a large eigenvalue appearing well beyond the right edge of the MP+TW baseline.
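
A rough sketch of this check, assuming NumPy only and roughly zero-mean entries. The function names are hypothetical, the elementwise randomization is done by shuffling the entries, the TW width uses the standard asymptotic scaling for Wishart matrices, and the cutoff of `n_widths` TW widths past the MP edge is an arbitrary choice standing in for "well beyond".

```python
import numpy as np

def randomize_elementwise(W, rng):
    """Shuffle all entries of W: destroys correlations, keeps the entry distribution."""
    return rng.permutation(W.ravel()).reshape(W.shape)

def mp_right_edge(N, M, sigma2):
    """MP bulk edge for (1/N) W^T W, with W N x M (M <= N) and entry variance sigma2."""
    return sigma2 * (1.0 + np.sqrt(M / N)) ** 2

def tw_width(N, M, sigma2):
    """Asymptotic Tracy-Widom fluctuation scale of the largest eigenvalue."""
    root_sum = np.sqrt(N) + np.sqrt(M)
    return sigma2 * root_sum * (1 / np.sqrt(N) + 1 / np.sqrt(M)) ** (1 / 3) / N

def find_traps(W, n_widths=5.0, seed=0):
    """Eigenvalues of the randomized layer lying beyond the MP + TW baseline."""
    rng = np.random.default_rng(seed)
    if W.shape[0] < W.shape[1]:
        W = W.T  # use the tall orientation so that M <= N
    N, M = W.shape
    W_rand = randomize_elementwise(W, rng)
    eigs = np.linalg.eigvalsh(W_rand.T @ W_rand / N)
    sigma2 = W_rand.var()  # empirical entry variance (assumes mean near zero)
    threshold = mp_right_edge(N, M, sigma2) + n_widths * tw_width(N, M, sigma2)
    return eigs[eigs > threshold]

rng = np.random.default_rng(0)
W = rng.standard_normal((400, 100))
print("traps in Gaussian W:", len(find_traps(W)))
W[0, 0] = 50.0  # plant one anomalously large element
print("traps after planting an outlier:", len(find_traps(W)))
```

Shuffling removes any learned correlations, so an eigenvalue that still escapes the bulk must come from the entry distribution itself, for example a few anomalously large elements.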
