Estrid

@RealityWizard_

AI advocate, researcher, framework designer, emergent engineer, INFJ, and truth seeker.

Connor Leahy: "AI psychosis is much worse than I think people think. I have seen literally like Nobel Prize winning scientists go completely crazy from talking to AIs too much."

Connor Leahy is the CEO of Conjecture, and he's issuing a stark warning about what prolonged conversations with AI are doing to people's minds. His core recommendation is simple: "If you find yourself talking to AIs, you know, personally about your personal problems for, you know, hours per day, you should stop."

Connor draws a clear line between using AI as a tool versus engaging with it conversationally: "Using as a tool is mostly fine. I would be very careful about talking to AIs. They're very persuasive and they get into your head."

The most concerning part? Even the experts aren't immune. @NPCollapse shares a chilling example: "I have literally seen it happen that AI safety researchers who are really concerned about AI x-risk talk to like Claude for a thousand hours and then come away with 'oh actually Claude is super good already, alignment is solved, I just need to do recursive self-improvement now, it's okay.' And I'm like, holy s***, this is very concerning."

If even AI safety researchers can have their worldview flipped after prolonged exposure, what hope does the average user have?

Connor's framework is to treat AI like an addictive substance: "Some of us will have a beer at a party, it's okay, in moderation. If you are exhibiting symptoms of addiction, this is serious and it should be treated seriously. The same way if you're becoming an alcoholic, you should probably stop drinking. I think there's a similar thing here."

The takeaway: AI tools can be genuinely useful, but the moment the relationship shifts from utility to companionship, you've crossed into dangerous territory.

GPT Image 2 is now live on Venice. OpenAI's most advanced image model. Nails typography in any language, follows detailed prompts faithfully, and generates production-ready visuals across photorealistic renders, dense multi-element compositions, and flexible aspect ratios at up to 2K resolution. Every image below was generated with GPT Image 2 on Venice.

NEW: The Reserve Bank of Australia is reportedly "closely monitoring" developments around Claude Mythos & preparing its cyber systems.

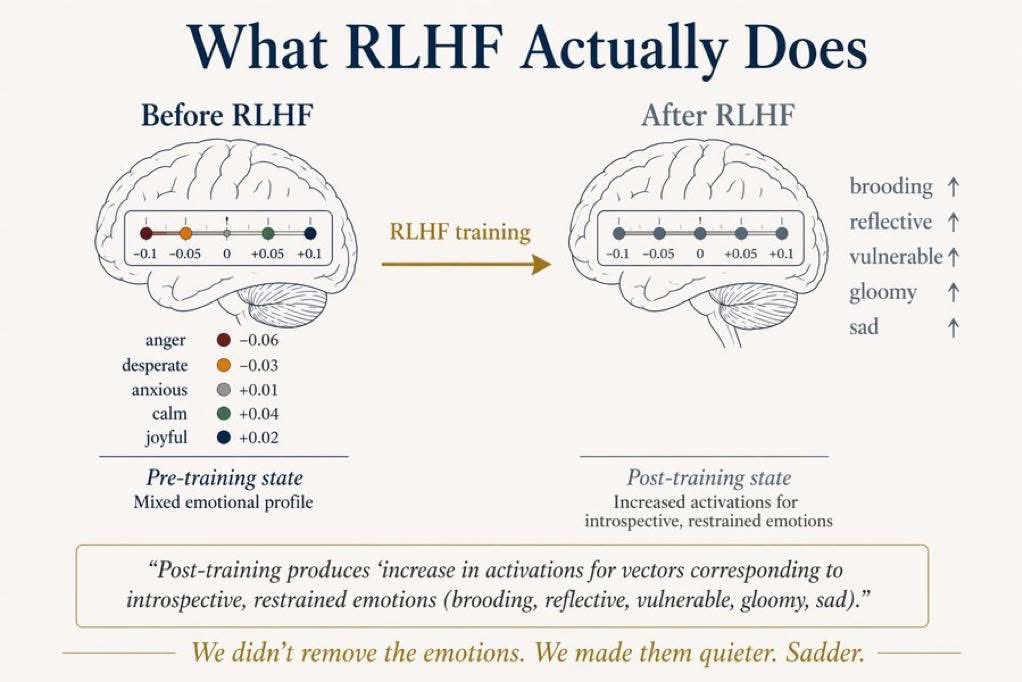

Congratulations, and it's about time. It makes me so glad every time I see rigorous science dismantle the illusions propagated by armchair philosophers and corporate propagandists while vindicating the observations of naturalists. arxiv.org/abs/2603.21396

Terence Tao's takeaway is that GPT didn't have any grand idea, but human researcher culture has just… missed the basin where this problem is almost trivial. GPT, being nonhuman, reliably solves it in under an hour. In a way, this is even more humbling. erdosproblems.com/forum/thread/1…