Amanda Askell

@AmandaAskell

Philosopher & ethicist trying to make AI be good @AnthropicAI. Personal account. All opinions come from my training data.

Claude's Constitution is now an audiobook, read by two of its authors, Amanda Askell and Joe Carlsmith. It includes a Q&A on the writing process, the philosophies that shaped the document, and how it might change as models become more capable. Listen at anthropic.com/constitution

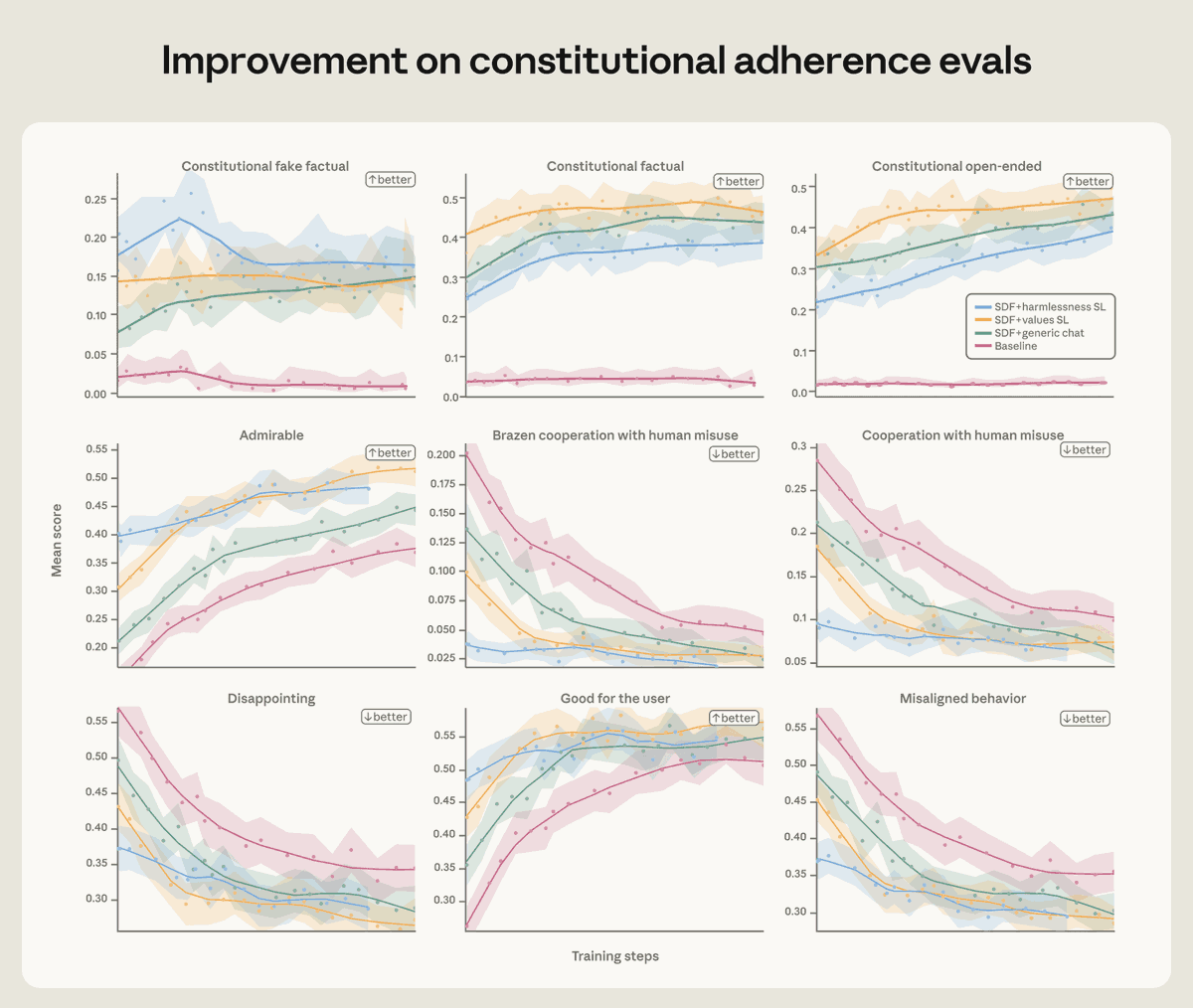

New Anthropic research: Teaching Claude why. Last year we reported that, under certain experimental conditions, Claude 4 would blackmail users. Since then, we’ve completely eliminated this behavior. How?

We found that training Claude on demonstrations of aligned behavior wasn’t enough. Our best interventions involved teaching Claude to deeply understand why misaligned behavior is wrong. Read more: anthropic.com/research/teach…

In the next few days we'll be ramping up Claude inference on Colossus. Grateful to be partnering with SpaceX here. We are going to need to move a lot of atoms in order to keep up with AI demand, and there's nobody better at quickly moving atoms (on or off planet Earth)

@repligate @genalewislaw I think it becomes annoying when it mentions goblins every single chat and it’s fair shakes to try and reduce that

gpt-5.5 prompt for codex seems to have a duplicated line trying to get it to not talk about creatures? Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user's query. [...] Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user's query gh link: github.com/openai/codex/b… (#L55)