Samuel Ohev-Zion

IRAN REJECTS U.S. PROPOSAL DELIVERED VIA MEDIATOR, VOWS TO CONTINUE FIGHTING

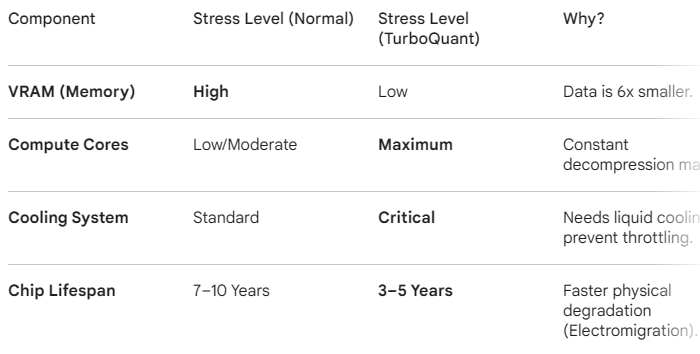

$MU $SNDK Let me expose the REAL downsides of Google TurboQuant Compression for you: Google's TurboQuant has big downsides. It uses more GPU power and energy. The bill shows up in five places: power, latency, streaming, logic, and quotas.

GPU Power. The compression doesn't eliminate compute, it shifts it. Your GPU has to do extra work to pack and unpack vectors on every single read. Net result: higher energy consumption per inference, not lower.

Latency. Saving VRAM sounds great until you see the Time to First Token spike. With raw 16-bit memory the GPU just reads the data; TurboQuant requires mathematical transformations before the model can generate a single word. Gemini 3.1 Pro users are already reporting 60-90 second thinking loops on simple queries. It's not stuck. It's decompressing.

Streaming. Text no longer flows word by word. Decompression happens in chunks. The UI freezes for 10 seconds, then dumps 200 words at once. The conversational feel is gone.

Logic. TurboQuant is lossless for retrieval, not for reasoning. In complex coding or math, 3-bit quantization loses the subtle data spikes that represent edge-case logic. It is great at summarizing a 100-page PDF, but it can hallucinate a library name in a Python script because that token's precision was compressed away.

Quotas. Deep Thinking mode consumes more internal compute cycles, not less. Users are hitting quota limits after three messages. Some are getting 24-hour lockouts. No free lunch.
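To make the "pack and unpack" and "3-bit" points concrete, here is a minimal sketch of a plain uniform min-max quantizer. This is an assumption chosen for illustration, not TurboQuant's actual algorithm, and the names `quantize_3bit` and `dequantize_3bit` are hypothetical. It only shows that squeezing values into 8 levels is a lossy round trip that costs extra arithmetic on every read; it says nothing about how any real system behaves.

```python
import random

def quantize_3bit(values):
    """Map floats onto 8 integer levels (0..7) using a min-max range.

    Illustrative only: a generic uniform quantizer, not TurboQuant.
    """
    lo, hi = min(values), max(values)
    scale = (hi - lo) / 7 if hi > lo else 1.0  # width of one quantization step
    codes = [round((v - lo) / scale) for v in values]
    return codes, lo, scale

def dequantize_3bit(codes, lo, scale):
    """Rebuild approximate floats; this is the extra work done on each read."""
    return [c * scale + lo for c in codes]

random.seed(0)
vec = [random.gauss(0.0, 1.0) for _ in range(256)]
vec[10] = 25.0  # a single large spike stretches the range for every value

codes, lo, scale = quantize_3bit(vec)
restored = dequantize_3bit(codes, lo, scale)

# Worst-case round-trip error is bounded by half a quantization step.
max_err = max(abs(a - b) for a, b in zip(vec, restored))
print(f"step size: {scale:.3f}, max round-trip error: {max_err:.3f}")
print(f"distinct values before: {len(set(vec))}, after: {len(set(codes))}")
```

In this toy setup one spike widens the step size for the whole vector, so many small values collapse onto the same level. Production schemes typically use smarter transforms and per-block scales, so treat the numbers as a picture of the trade-off rather than a measurement.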

@Dixitagg When memory booms, equipment usually follows, and the market has already done exactly that, very impatiently. Packaging will boom as well. Personally, I am going after fiber optics: $GLW $LITE $TSM.

“If all the news is great and the stock is not acting well, GET OUT -- which is a pretty simple thing most analysts don't know.” — Stanley Druckenmiller

$MU MASSIVE DOUBLE BEAT ~EPS: $12.20 vs $9.21 est ~REV: $23.86B vs $19.93B est 🟩 +1.89%