Franck Lebeau

@_Kcnarf

#AI in #NLP, dataviz expert, full stack dev, math_art and day-to-day enthusiast; also, PhD in CS, and trying to reduce my environmental footprint

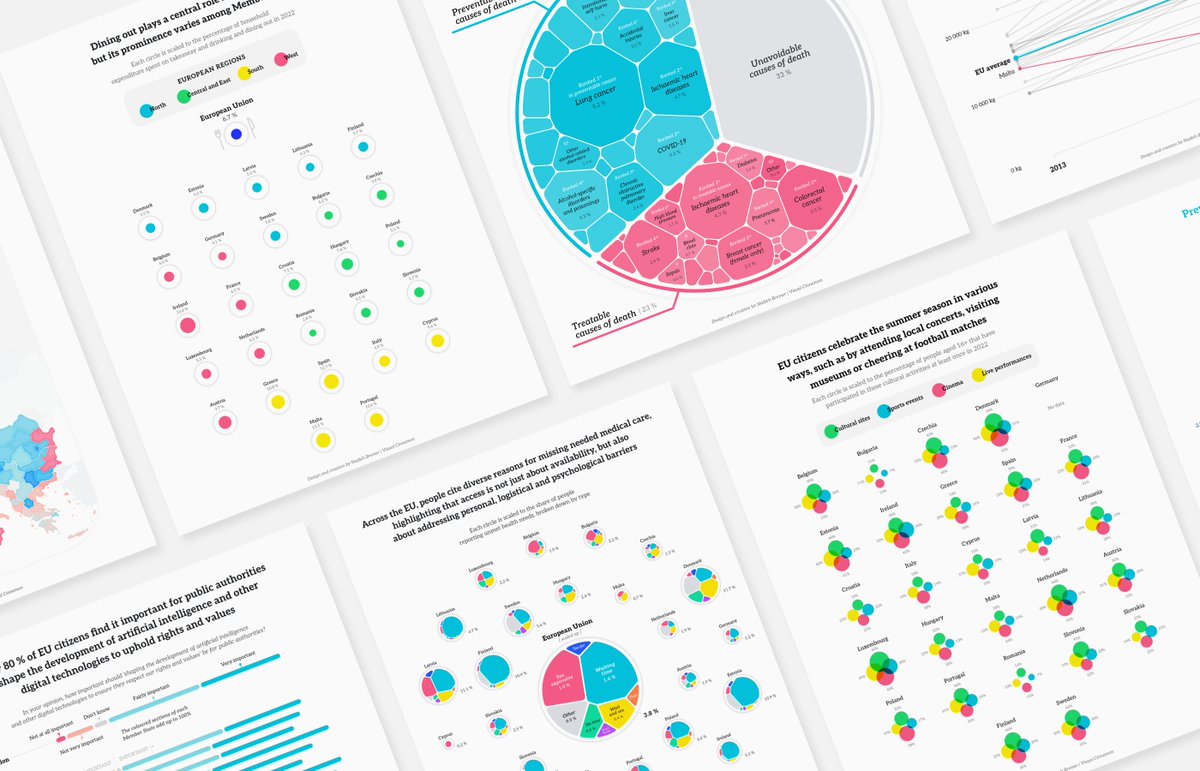

📣 NEW WORK! Excited to share my latest project with the Publications Office of the European Union 🇪🇺. I got to create 9 dataviz for 3 of the EU's monthly Data Stories, covering fascinating topics from leisure to health to the future. See all the visuals: visualcinnamon.com/portfolio/eu-d…

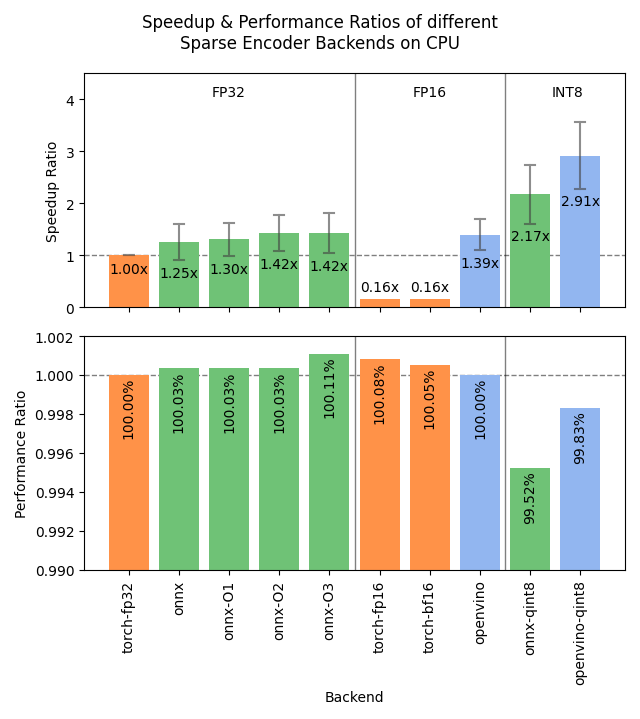

PyLate: Flexible Training and Retrieval for Late Interaction Models. @antoine_chaffin et al. introduce a streamlined library that extends Sentence Transformers to support multi-vector architectures. 📝 arxiv.org/abs/2508.03555 👨🏽‍💻 github.com/lightonai/pyla…
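Multi-vector (late-interaction) models keep one vector per token instead of one per text. A minimal plain-Python sketch of the MaxSim scoring used by ColBERT-style models, assuming toy hand-written 2-D "token embeddings" (this is not PyLate's API; `maxsim_score` and the vectors are hypothetical):

```python
import math

def normalize(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def maxsim_score(query_vecs, doc_vecs):
    """Late-interaction (ColBERT-style) relevance score.

    For each query token vector, take its best (max) similarity against all
    document token vectors, then sum those maxima over the query tokens.
    """
    q = [normalize(v) for v in query_vecs]
    d = [normalize(v) for v in doc_vecs]
    return sum(max(dot(qv, dv) for dv in d) for qv in q)

# Hypothetical toy token embeddings, not produced by any real model.
query = [[1.0, 0.0], [0.0, 1.0]]
doc_a = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
doc_b = [[1.0, 0.0], [1.0, 0.1]]

print(maxsim_score(query, doc_a))  # 2.0
print(maxsim_score(query, doc_b))  # lower: no doc_b token matches the 2nd query token well
```

Because every query token finds its own best match, a document only scores high if it covers all parts of the query, which a single pooled vector can blur.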

How do LLMs pick the next word? They don't choose words directly: they only output a probability for every possible next word (strictly, next token). 📊 Greedy decoding, top-k, top-p, and min-p are methods that turn these probabilities into actual text. In this video, we break down each method and show how the same model can sound dull, brilliant, or unhinged, just by changing how it samples.
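A minimal sketch of the four strategies, assuming a hypothetical, already-normalized next-token distribution stored as a dict (real models work over tens of thousands of tokens and usually apply temperature first; the helper names here are illustrative, not any library's API):

```python
import random

def greedy(probs):
    """Always pick the single most likely token."""
    return max(probs, key=probs.get)

def renormalize(probs):
    total = sum(probs.values())
    return {tok: p / total for tok, p in probs.items()}

def top_k_filter(probs, k):
    """Keep only the k most likely tokens, then renormalize."""
    kept = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)[:k]
    return renormalize(dict(kept))

def top_p_filter(probs, p):
    """Nucleus sampling: keep the smallest set of top tokens whose mass reaches p."""
    kept, cumulative = {}, 0.0
    for tok, prob in sorted(probs.items(), key=lambda kv: kv[1], reverse=True):
        kept[tok] = prob
        cumulative += prob
        if cumulative >= p:
            break
    return renormalize(kept)

def min_p_filter(probs, min_p):
    """Keep tokens with probability >= min_p times the top token's probability."""
    threshold = min_p * max(probs.values())
    return renormalize({tok: q for tok, q in probs.items() if q >= threshold})

def sample(probs, rng):
    """Draw one token at random, weighted by its (filtered) probability."""
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]

# Hypothetical distribution for the token after "The cat sat on the".
next_token = {"mat": 0.50, "sofa": 0.25, "roof": 0.15, "moon": 0.07, "spreadsheet": 0.03}

rng = random.Random(0)
print(greedy(next_token))                     # mat
print(sorted(top_k_filter(next_token, 2)))    # ['mat', 'sofa']
print(sorted(top_p_filter(next_token, 0.8)))  # ['mat', 'roof', 'sofa']
print(sorted(min_p_filter(next_token, 0.2)))  # ['mat', 'roof', 'sofa']
print(sample(top_p_filter(next_token, 0.8), rng))
```

Greedy always says "mat" (dull); sampling from the unfiltered distribution can occasionally emit "spreadsheet" (unhinged); the filters trim that long tail while keeping some variety.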

Every vibe-coder is generating as much technical debt as 10 regular developers in half the time. Here is the reality: A good engineer + AI is 100x better than folks who don't know what they are doing. Don't get carried away by the hype. Knowledge matters today more than ever.

Before: chunk overlaps, context summaries, metadata augmentation. Now: voyage-context-3 processes the full doc in one pass and generates a distinct embedding for each chunk. Each embedding encodes both the chunk-level details AND the full-doc context, for more semantically aware retrieval.
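How voyage-context-3 does this internally isn't stated here. One published technique with the same shape is "late chunking": run a long-context encoder over the whole document once, then pool its per-token vectors within each chunk's span. A minimal sketch of that pooling step, assuming hypothetical hand-written token vectors in place of a real encoder's output (this is not the Voyage API):

```python
def chunk_spans(num_tokens, chunk_size):
    """Split token positions [0, num_tokens) into consecutive (start, end) spans."""
    return [(start, min(start + chunk_size, num_tokens))
            for start in range(0, num_tokens, chunk_size)]

def mean_pool(vectors):
    """Average a list of equal-length vectors component-wise."""
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]

def contextual_chunk_embeddings(token_vecs, chunk_size):
    """One embedding per chunk, pooled from token vectors computed over the WHOLE doc.

    Assumption: each token vector was produced with the full document in view,
    so every chunk embedding inherits document-level context.
    """
    return [mean_pool(token_vecs[s:e]) for s, e in chunk_spans(len(token_vecs), chunk_size)]

# Hypothetical per-token vectors standing in for a single-pass, full-document encoding.
token_vecs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [3.0, 1.0], [2.0, 2.0]]

print(chunk_spans(len(token_vecs), 2))             # [(0, 2), (2, 4), (4, 5)]
print(contextual_chunk_embeddings(token_vecs, 2))  # [[0.5, 0.5], [2.0, 1.0], [2.0, 2.0]]
```

With the older approach, each chunk is encoded on its own, so context has to be smuggled in via overlaps, summaries, or metadata; here the chunk boundaries are applied only after the encoder has seen everything.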

Kruft (n.): bullshit in the prompt because you didn't write it (smell: emojis), or you copy-pasta'd someone else's 500-line thing from Twitter, or you're using a library that "prompts for you". Yes, I am traumatized from all the prompt reading I do. Send help.