Raphaël Sourty

@raphaelsrty

AI @LightonIO. Language Models, Information Retrieval, Knowledge Distillation. PhD.

🚨Typical RL algorithms and on-policy distillation methods are blind samplers: they use privileged info to score rollouts, but not to *find* them. We ask: can we use privileged info to *actively sample* the rollouts RL wishes it could stumble upon with compute? ⤵️ Pedagogical RL

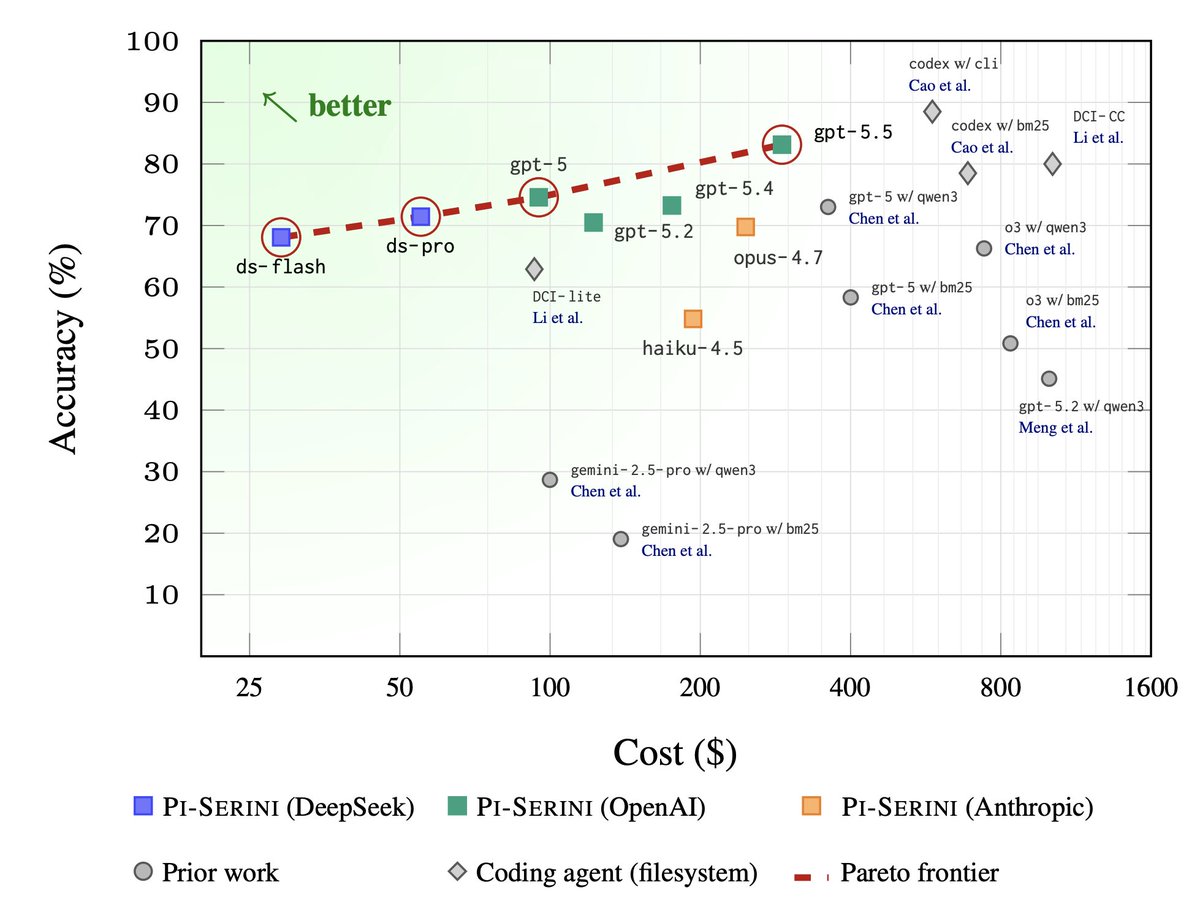

Excited to announce @antoine_chaffin will be a guest speaker at Cheat at Search w/ Agents. His SOTA Deep Research wins need no introduction :)

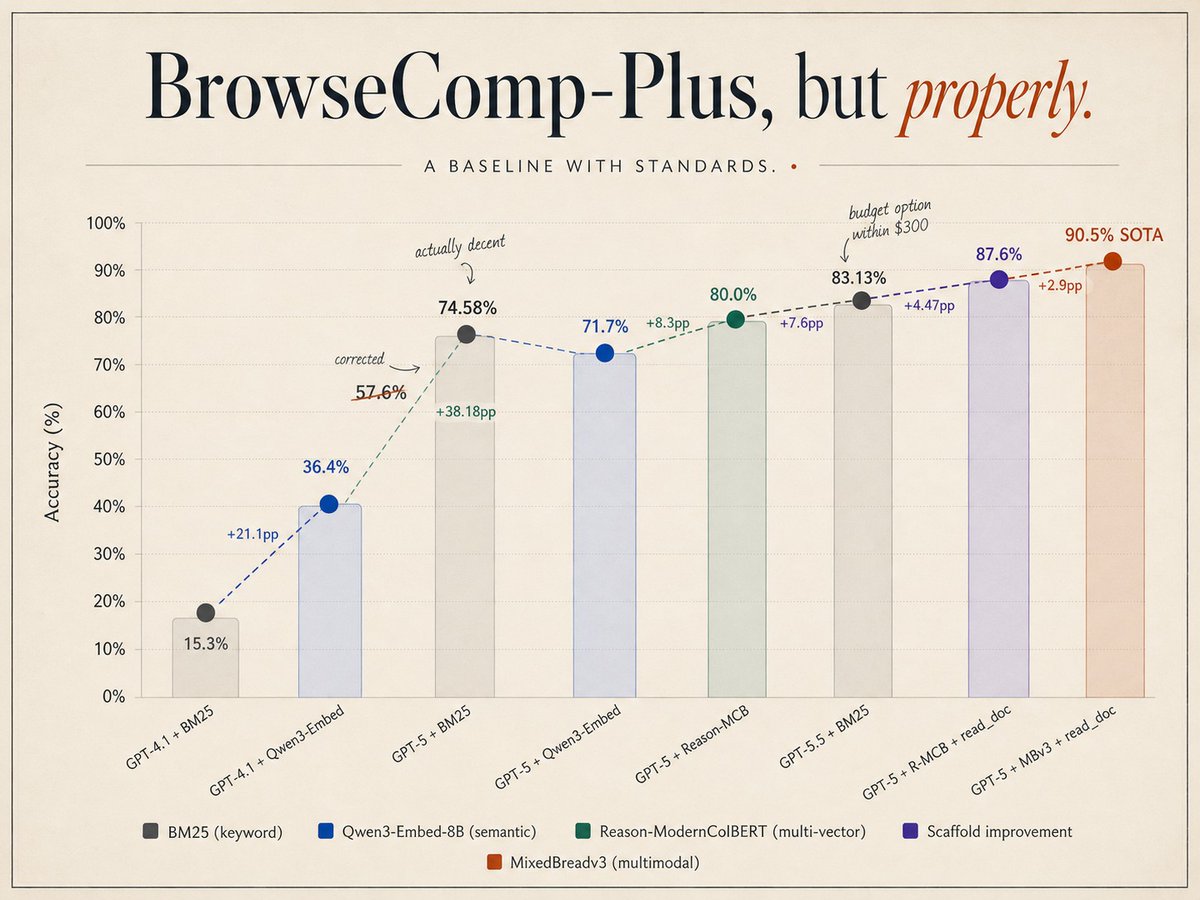

Reason-ModernColBERT nearly solved BrowseComp-Plus, smashing SOTA and outperforming models 54× bigger. Not bad for a 1-year-old model not optimized for deep research. What if we actually tried? Introducing Agent-ModernColBERT: adding another 10% on top with 5 min of training.

Descriptive queries target latent properties without naming them. Twitter-Conflict asks for tweets that imply a specific stance through framing or irony, with explicit/news tweets as hard distractors. WildChat Errors finds model failures that are never explicitly mentioned.

Oblique tip-of-tongue queries match a fuzzy, lossy recollection to one obscure passage. Congress Hearings: "Someone sent me this clip where a senator starts off weirdly friendly, then flips and starts pinning the guy down…"

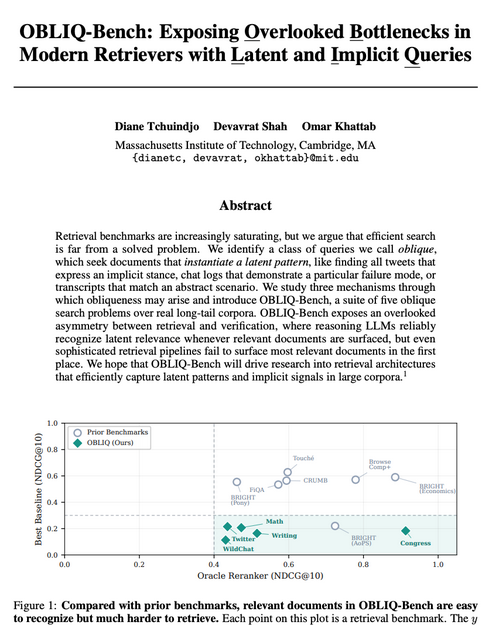

We set out to build a better retriever, so we looked for the hardest IR benchmarks. For each, we asked how much headroom remained by running oracle reranking with a frontier LLM. Most had little room left! So we built OBLIQ-Bench to study much harder search queries than before.
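
A rough sketch of what a headroom check like this could look like, assuming recall@k as the metric and a pluggable scoring function standing in for the frontier LLM judge. The post does not say which metric or prompt was used; `recall_at_k`, `rerank`, `headroom`, and the toy gold-label scorer are all hypothetical names for illustration.

```python
from typing import Callable, List, Set


def recall_at_k(ranked: List[str], relevant: Set[str], k: int) -> float:
    """Fraction of relevant documents that appear in the top k."""
    if not relevant:
        return 0.0
    hits = sum(1 for doc_id in ranked[:k] if doc_id in relevant)
    return hits / len(relevant)


def rerank(candidates: List[str], score: Callable[[str], float]) -> List[str]:
    """Reorder a candidate pool by descending score (stable for ties)."""
    return sorted(candidates, key=score, reverse=True)


def headroom(
    ranked: List[str],
    relevant: Set[str],
    score: Callable[[str], float],
    k: int,
) -> float:
    """Metric gain from reranking the retriever's pool with a stronger scorer."""
    before = recall_at_k(ranked, relevant, k)
    after = recall_at_k(rerank(ranked, score), relevant, k)
    return after - before


# Toy data: the retriever buried both relevant documents below rank 2.
retrieved = ["d3", "d1", "d7", "d2", "d9"]
gold = {"d2", "d9"}


# Assumption: a perfect judge, faked here with gold labels.
# In practice this would be a call to an LLM that rates query-document pairs.
def oracle_score(doc_id: str) -> float:
    return 1.0 if doc_id in gold else 0.0


gain = headroom(retrieved, gold, oracle_score, k=2)
```

A small gain suggests the benchmark is close to saturated for that candidate pool, while a large gain means a better ranker could still help.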