Pinned Tweet

Matthew Yang

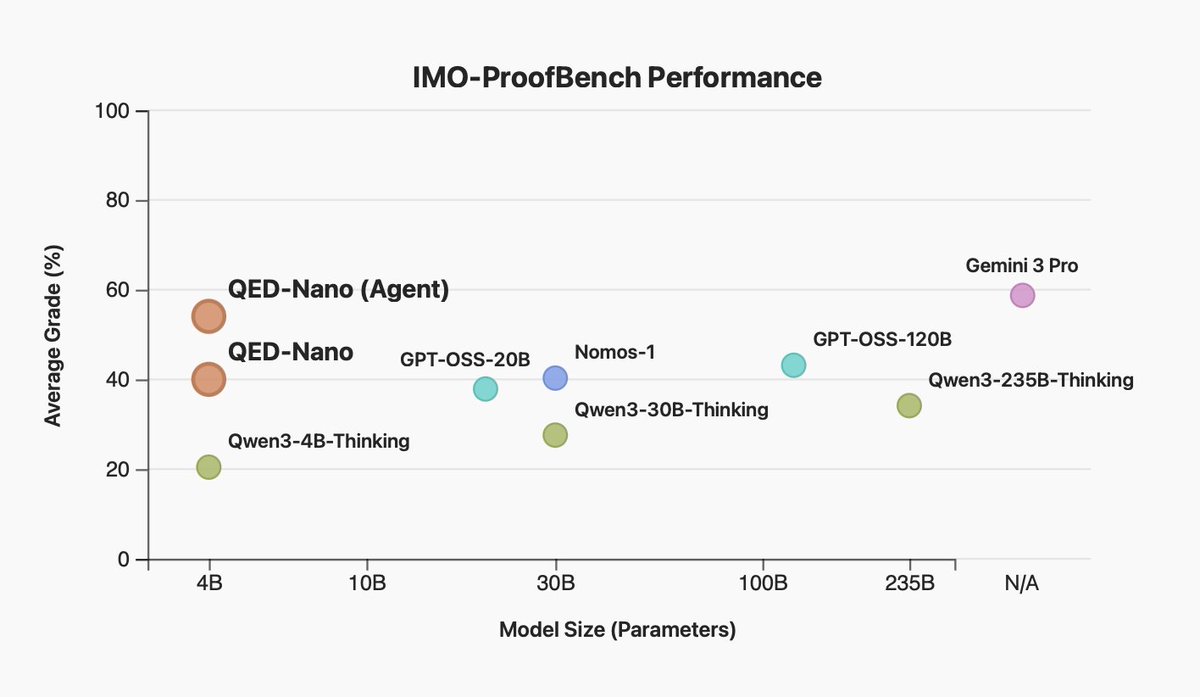

@rosmine We tried generating interventions with larger models, namely Qwen3-30B-A3B-Instruct (see section 3) and Gemini 2.5 Pro (see Appendix).

We find that larger models tend to generate better interventions (row 6 vs. row 5).

@matthewyryang Did you try scaling up at all beyond 4B? Curious if larger models can get more improvement, or if the boost decreases with size

@dhruvbhatia0 No, it does not, because we run standard online RL after we SFT on the interventions

@matthewyryang Does this stunt the model's ability to correctly generate the critical tokens without seeing the reference solution, compared to pure RL?

Matthew Yang retweeted

🚨 New Paper Alert 🚨

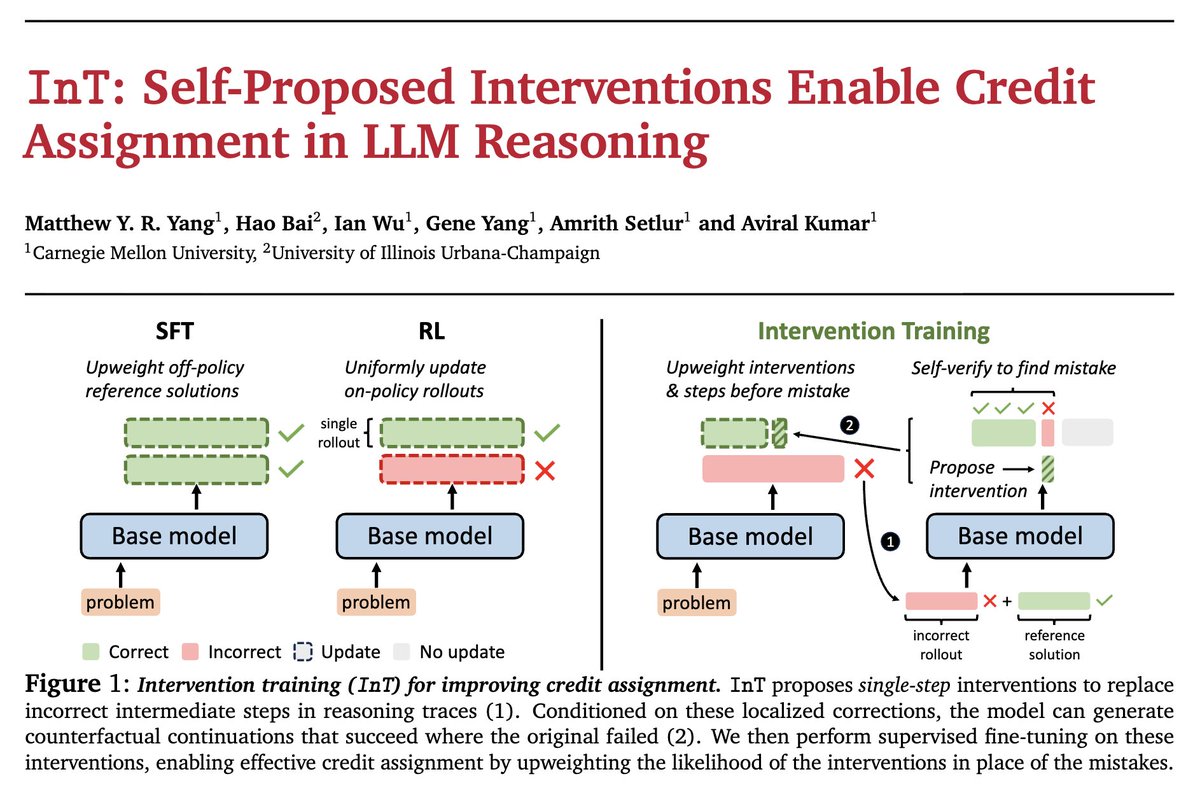

💥 SFT on hard tasks given a reference solution is usually too off-policy, which can cause training to crash.

🐌 On-policy RL on these hard tasks is more stable but has low sample efficiency.

😈 Today, we introduce Intervention Training (InT), an algorithm that avoids the shortcomings of both.

A thread 🧵 1/n

Thank you to my amazing set of collaborators @jackbot_cs @ianwu97 @geneyang4 @setlur_amrith @aviral_kumar2 for making this happen!!! 🙏🙏🙏

And grateful to end my master’s journey with this project ⛵️🌅😎

🧵[7/n]

website: intervention-training.github.io

paper: arxiv.org/abs/2601.14209

code: github.com/intervention-t…

🧵[6/n]

Matthew Yang retweeted

Nice to see that ideas from our e3 paper (arxiv.org/pdf/2506.09026), chaining asymmetries to learn meta-behaviors, also work on didactic tasks!

Lifan Yuan @lifan__yuan

🧩 New blog: From f(x) and g(x) to f(g(x)): LLMs Learn New Skills in RL by Composing Old Ones

Do LLMs learn new skills through RL, or just activate existing patterns?

Answer: RL teaches the powerful meta-skill of composition when properly incentivized.

🔗: husky-morocco-f72.notion.site/From-f-x-and-g…
