Ian Wu


New blog on how we train Composer to work on hard problems. With the maestro himself Federico Cassano @ellev3n11

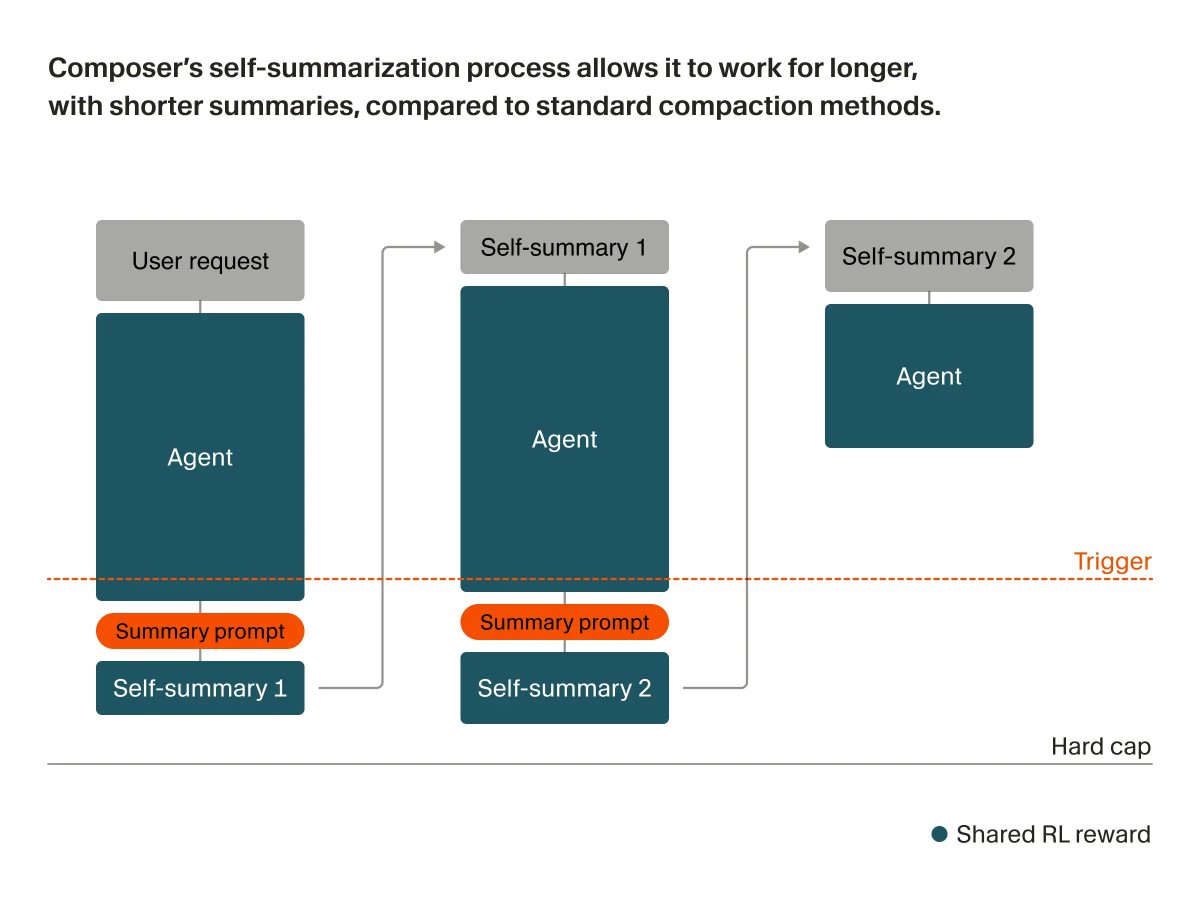

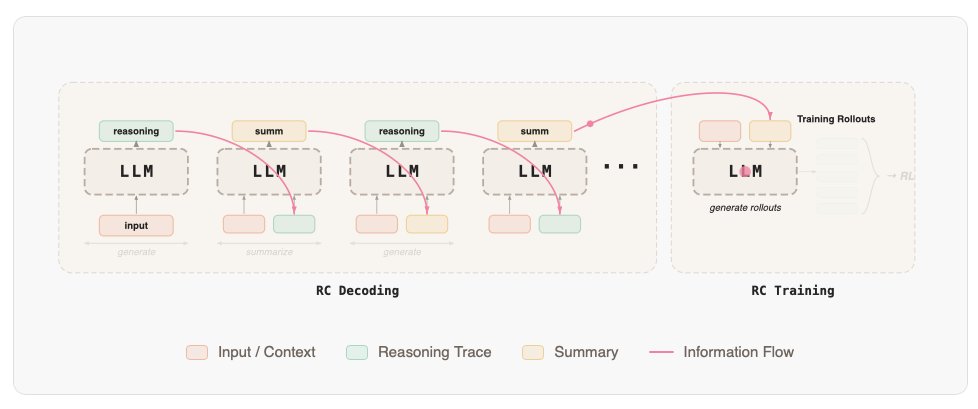

We trained Composer to self-summarize through RL instead of a prompt. This reduces the error from compaction by 50% and allows Composer to succeed on challenging coding tasks requiring hundreds of actions.

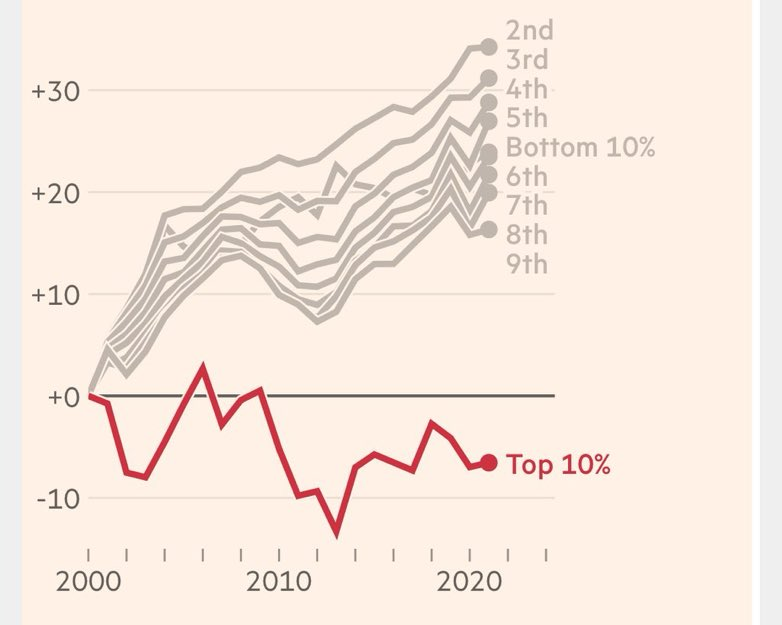

Whereas the graduate premium has increased in most rich countries, it has plummeted in Britain since 1997. Earnings for British graduates have shrunk (next pic). ->

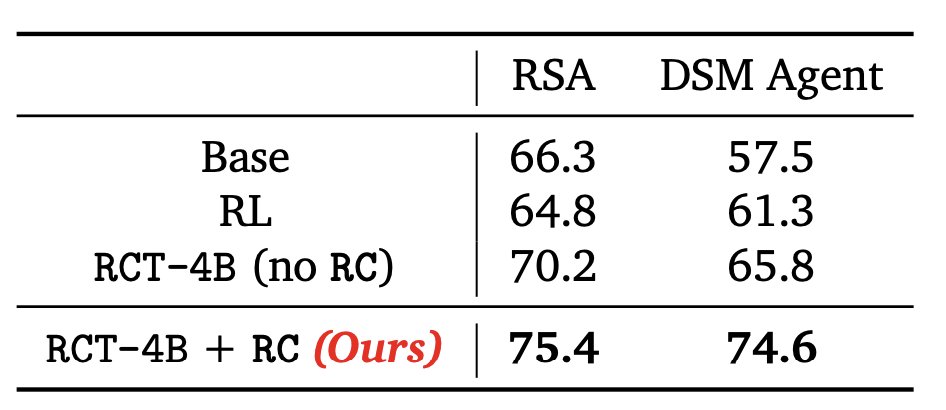

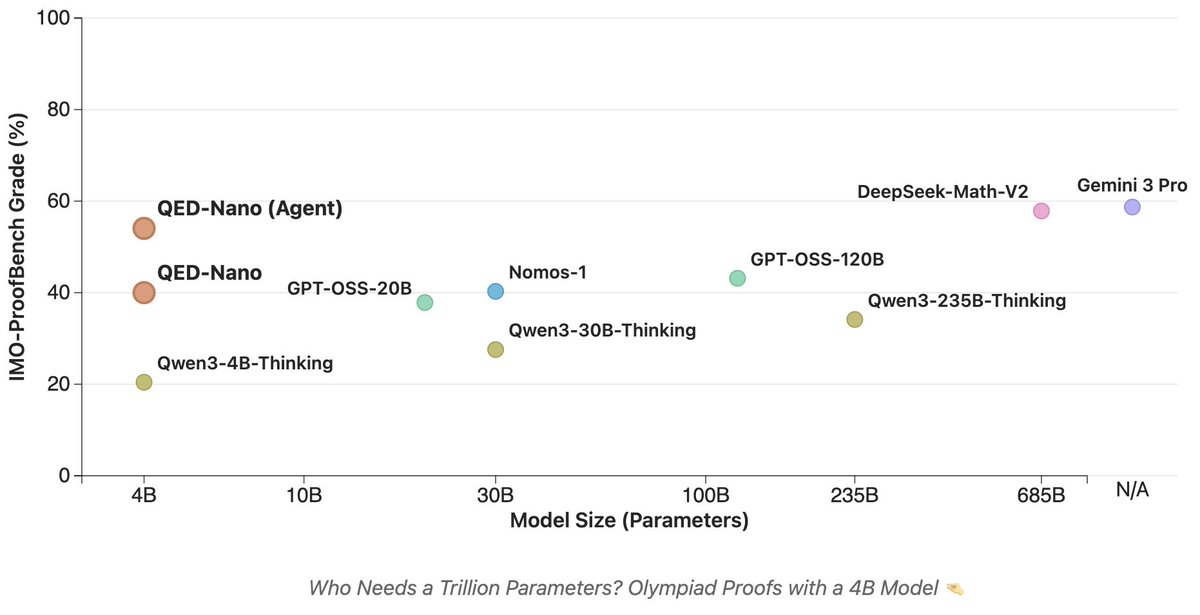

We post-trained a 4B parameter model to write Olympiad-level math proofs! Our tiny model, *QED-Nano*, outperforms gpt-oss-120b, and approaches the performance of Gemini 3 Pro when paired with test-time scaffolding. Check out the blogpost: huggingface.co/spaces/lm-prov…

The final piece of the puzzle is to pair our model with an agentic scaffold that can effectively scale inference-time compute to very long horizons. We experimented with many scaffolds and found DeepSeek's to provide the best tradeoff in compute vs performance. However, if you're willing to go the full mile and generate >2M tokens per proof, Recursive Self-Aggregation is the best scaffold and allows our 4B model to match Gemini 3 Pro on IMO-ProofBench!