Abstrack (AKA Lil' Jeb) retweeted

Abstrack (AKA Lil' Jeb)

627 posts

@abstrack97

programming, machine learning, maths, model merging experiments that go wrong, music, art and most importantly: curiosity.

Unknown Space-Time Coordinates · Joined December 2014

1 Following · 24 Followers

@producer_ai I've been making music for many years and I'm interested in replacing my samples lib with AI! I would appreciate it if you dropped an invite link :>

English

@thejoephase @tom_forsyth Basically, a better way to train VAEs was found that makes the latent space much less noisy and much less prone to artifacts under affine transforms of the spatial dimensions.

English

A while back I wrote a post on doing rotations with three shears: cohost.org/tomforsyth/pos…

This was an inspiration for a talk by Andrew Taylor: github.andrewt.net/shears/

...which Matt Parker saw, and now he's made a video: youtube.com/watch?v=1LCEiV…

Science! (obsolete)

English
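For readers who have not seen the trick, here is a minimal sketch of the usual three-shear decomposition of a 2D rotation. It assumes the standard identity (shear along x by -tan(θ/2), shear along y by sin θ, then the same x shear again). The function names are made up for illustration and are not taken from the linked post, talk, or video.

```python
import math


def shear_x(point, amount):
    """Shear along x: x picks up a multiple of y, y is unchanged."""
    x, y = point
    return (x + amount * y, y)


def shear_y(point, amount):
    """Shear along y: y picks up a multiple of x, x is unchanged."""
    x, y = point
    return (x, y + amount * x)


def rotate_by_shears(point, theta):
    """Rotate counter-clockwise by theta using three shears (x, y, x)."""
    a = -math.tan(theta / 2.0)  # undefined at theta = pi, so keep |theta| < pi
    b = math.sin(theta)
    return shear_x(shear_y(shear_x(point, a), b), a)


def rotate_direct(point, theta):
    """Reference rotation using the ordinary rotation matrix."""
    x, y = point
    c, s = math.cos(theta), math.sin(theta)
    return (c * x - s * y, s * x + c * y)
```

Comparing `rotate_by_shears((1.0, 0.0), math.pi / 2)` with `rotate_direct` on the same input gives the same point up to floating-point error. Each shear only moves values along one axis, which is why the approach is attractive for images and, presumably, for latent grids.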

@thejoephase @tom_forsyth Never got to it, but actually with EQ-VAE or other regularized latent objectives this shouldn't be necessary anymore.

English

@abstrack97 @tom_forsyth Hi! I was looking for OP’s article just now cause I remembered it and wanted to try it on latents. Funny to see this. Did you try it?

English

@bandinopla @XorDev It may not be a proper statistical average though... maybe calculating the correlation between A and B and weighting accordingly could be interesting.

English

@bandinopla @XorDev Yeah that seems to be the case. I think it can be used intentionally though.

The reason I was curious about that variation of the formula is that, contrary to the original shader, it is actually a proper average: the contributions of the inputs sum to 1.

English

@Mikururun The reason is simple:

Content > Artstyle

You can hate it, but the truth is that what you do post-lineart doesn't have as much impact as the idea.

Personally I like this fact because I find it liberating. You can just have fun with the process without thinking too much about it

English

@ghosttyped has any one of your friends written a blog post on how not to burn out in the first place?

English

@_trish_07 I did this 10 years ago. 100% you are absolutely right. If you beat the mammoth first, everything else ends up looking like a snack.

English

@VictorTaelin The solution is quite simple.

I had the same issue but with attention seeking meme accounts and street fighting content (yuk!)

Just start blocking/hiding accounts by the posts they make. The algo doesn't recommend people you block.

English

Abstrack (AKA Lil' Jeb) retweeted

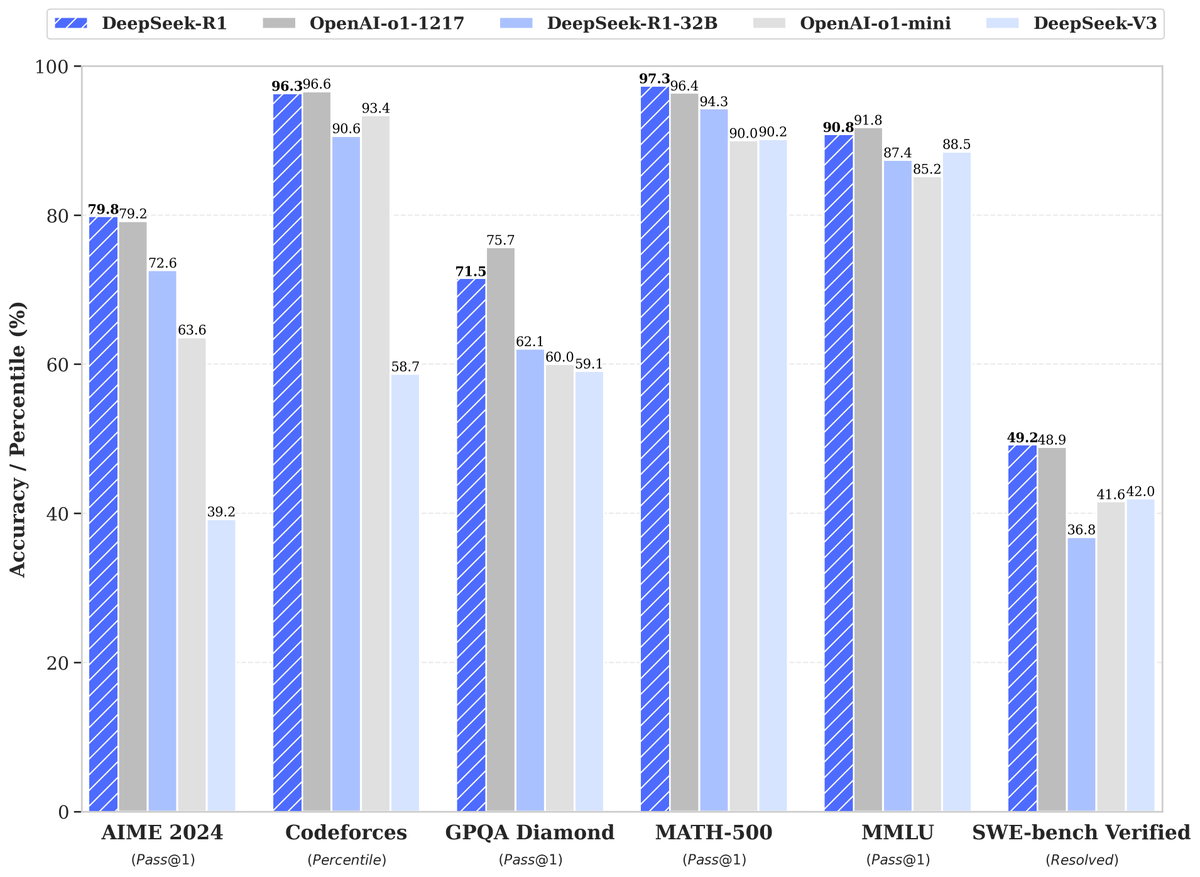

Closed source work makes it impossible to trust these claims. As far as science is concerned, the attribution of techniques in R1 should be solely to deepseek authors unless OpenAI open up their code base with verifiable commit history.

Mark Chen@markchen90

Congrats to DeepSeek on producing an o1-level reasoning model! Their research paper demonstrates that they’ve independently found some of the core ideas that we did on our way to o1.

English

@VictorTaelin How long do you think it would take to find a solution?

English

Abstrack (AKA Lil' Jeb) retweeted

🚀 DeepSeek-R1 is here!

⚡ Performance on par with OpenAI-o1

📖 Fully open-source model & technical report

🏆 MIT licensed: Distill & commercialize freely!

🌐 Website & API are live now! Try DeepThink at chat.deepseek.com today!

🐋 1/n

English

@artbyha_ It would be amazing to see you share how you became this effective with time!

English

I'm curious how quickly this derives an iterative method for the square root of a 2x2 (or higher dim?) symmetric matrix. Can it find the Newton method by itself? (which is X_{n+1} = \frac{X_n + A X_n^{-1}}{2})

More interesting would be if it could also synthesize new alternative methods that converge faster.

English
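As a point of reference, the Newton iteration quoted above can be sketched in plain Python for the 2x2 case. This is only an illustration under stated assumptions: A is symmetric positive definite and well conditioned, the starting point is X_0 = I, and the helper names (`mat_mul`, `mat_inv`, `newton_sqrt`) are hypothetical.

```python
def mat_mul(a, b):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def mat_inv(m):
    """Inverse of a 2x2 matrix via the adjugate formula."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("matrix is singular")
    return [[d / det, -b / det], [-c / det, a / det]]


def newton_sqrt(a, iterations=20):
    """Iterate X_{n+1} = (X_n + A X_n^{-1}) / 2, starting from the identity."""
    x = [[1.0, 0.0], [0.0, 1.0]]  # assumed starting point; it commutes with A
    for _ in range(iterations):
        a_x_inv = mat_mul(a, mat_inv(x))
        x = [[(x[i][j] + a_x_inv[i][j]) / 2.0 for j in range(2)]
             for i in range(2)]
    return x
```

For example, `newton_sqrt([[4.0, 1.0], [1.0, 3.0]])` returns a matrix X for which `mat_mul(X, X)` reproduces the input up to rounding. The plain iteration is known to lose accuracy on ill-conditioned matrices, so treat it as a baseline rather than a robust solver.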

Guys I'm so hyped by how effective our synthesizer is. I write:

take : Nat → [Nat] → [Nat]

- take(2 [a b c d]) = [a b]

- take(3 [a b c d]) = [a b c]

It synths:

take : Nat → [Nat] → [Nat]

take Z xs = []

take (S n) [] = []

take (S n) (x:xs) = x : take n xs

In exactly 0.065s, running on a single CPU core! This is just so cool. It is like Sonnet on steroids, except it only produces correct code, but is limited to small completion sizes (yet?). I predict 95% of my programming will be automated by this alone once I integrate with Kind/Bend, and it actually complements existing AIs really well. Like, even before I start working on hard stuff like a full Symbolic AI architecture, I wonder how effective this could be just integrated in a loop with Sonnet. The LLM drafts the overall skeleton, the synthesizer fills complex parts and fixes bugs 🤔

English
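To make the synthesized definition concrete, here is a hedged Python transliteration of the three clauses, checked against the two input/output examples from the post. It is an illustration only: it says nothing about how the synthesizer works, and using Python integers in place of Peano naturals is an assumption.

```python
def take(n, xs):
    """Return the first n elements of xs, mirroring the three synthesized clauses."""
    if n == 0:   # take Z xs = []
        return []
    if not xs:   # take (S n) [] = []
        return []
    # take (S n) (x:xs) = x : take n xs
    return [xs[0]] + take(n - 1, xs[1:])
```

Calling `take(2, ["a", "b", "c", "d"])` and `take(3, ["a", "b", "c", "d"])` reproduces the two examples given as the specification.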

Read carefully. Nowhere, at any point in this conversation, am I ever advocating *for* this concept. All I'm ever saying is that in theory, it is possible.

It can be an interesting concept, if you're curious and want to see where the tunnel ends. If you do just the slightest research online, you'll find I'm not at all the first to consider this. What's insane about being curious?

Really, my original comment was meant as a fun, playful interjection. All these names I've been called, and the judgment that was made on me -- none of it is justified.

English

@effectfully i mean technically this point he made is correct, compilers are really some of the most complicated string to string translators we have; but the point he is trying to make about a PL with no fixed syntax..??? genuinely i think you're just talking to an insane person here

English

We can agree that the implementation can be quite intricate for some languages.

One clear criticism of the idea that a compiler can be portrayed as a function of type string -> string is that, even in relatively small projects, you often have to compile multiple files together; you can't compile each input file in isolation. Is this what you're referring to?

If not, could you clarify how a compiler is more than a string -> string function? Maybe it's obvious and it has escaped me.

English

Hey, I think that's a fair point. Effectfully has me blocked so all I can do is QT. Let me elaborate.

Once you pick a relation between a syntax construct and semantics, then you need a different construct to represent different semantics.

These syntax-semantics mappings only ever condition *how* your language gets compiled. Some syntax constructs condition the frontend of your compiler, and some semantics decisions condition the backend. In the end, no matter what your language decisions are, the type of a compiler as a function is always string -> string (or a subtype thereof).

Let's pretend we implemented a language that has no basic syntax (i.e. the only possible program is an empty string). There is basically nothing to assign semantics to in this context.

This is how syntax is half of a programming language. If you can't represent certain concepts, then there is no point in implementing any semantics at all in relation to these concepts. And if you decide that a certain concept is represented in a certain way, then you are forced to think in this way to represent this concept. In this way, syntax conditions semantics.

In the context of the exchange below, my point is that even if you make a decision to attribute a certain semantics to a certain syntax construct, if your language has a general enough backend (that is, it isn't more specific than a string to string function), then you can for example pick the same syntax to mean different things in different programs. You choose what the syntax is, and so you choose what the semantics are.

In this way, in principle, this "general compiler" doesn't have to make decisions about the semantics. As a result, you do not have to justify the semantics of your programming language, since it can encode any semantics you might be interested in.

My original comment was meant as a fun interjection. I am saddened to see that Effectfully and his community see this as an opportunity to attack me without any interest in intelligent discourse.

English
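One way to picture the "general compiler" argument is a toy string -> string function in which each program names its own semantics. Everything in this sketch is hypothetical: the header-line convention, the two semantics, and all identifiers are invented for illustration and do not come from the thread.

```python
from typing import Callable, Dict

# A compiler viewed only by its type: source text in, output text out.
Compiler = Callable[[str], str]


def compile_arithmetic(body: str) -> str:
    """Read 'a + b' as integer addition and emit the sum as text."""
    left, right = body.split("+")
    return str(int(left) + int(right))


def compile_concat(body: str) -> str:
    """Read the very same 'a + b' syntax as string concatenation."""
    left, right = body.split("+")
    return left.strip() + right.strip()


# Hypothetical registry: the program, not the compiler, picks the meaning.
SEMANTICS: Dict[str, Compiler] = {
    "arithmetic": compile_arithmetic,
    "concat": compile_concat,
}


def general_compile(source: str) -> str:
    """Use the first line to choose a semantics, then compile the rest with it."""
    header, body = source.split("\n", 1)
    return SEMANTICS[header.strip()](body)
```

With this toy, `general_compile("arithmetic\n1 + 2")` and `general_compile("concat\n1 + 2")` give different outputs for the same body text, while `general_compile` itself stays an ordinary string -> string function.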