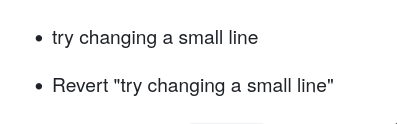

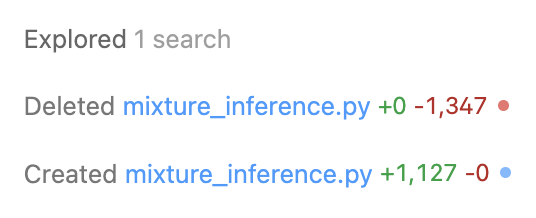

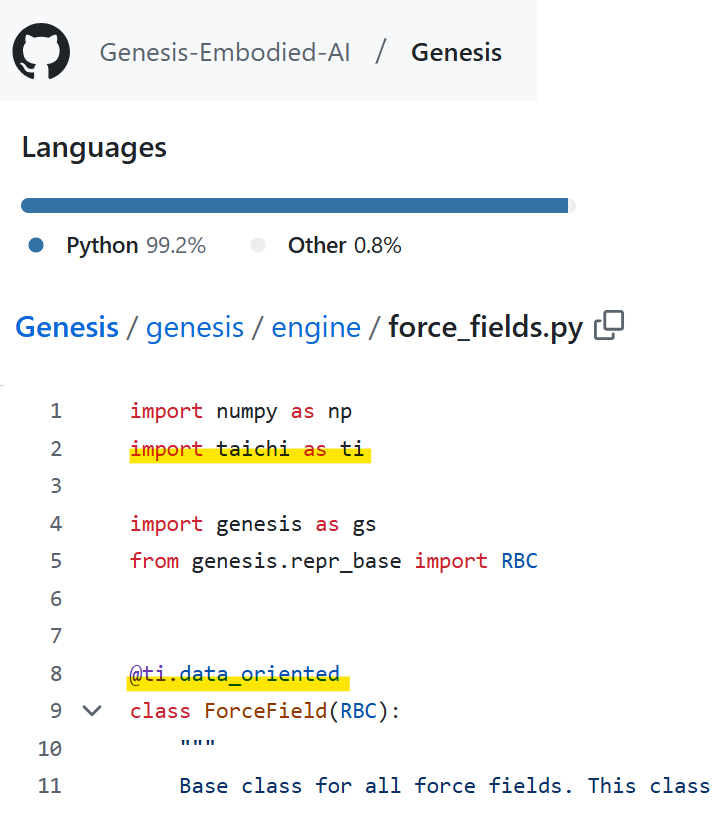

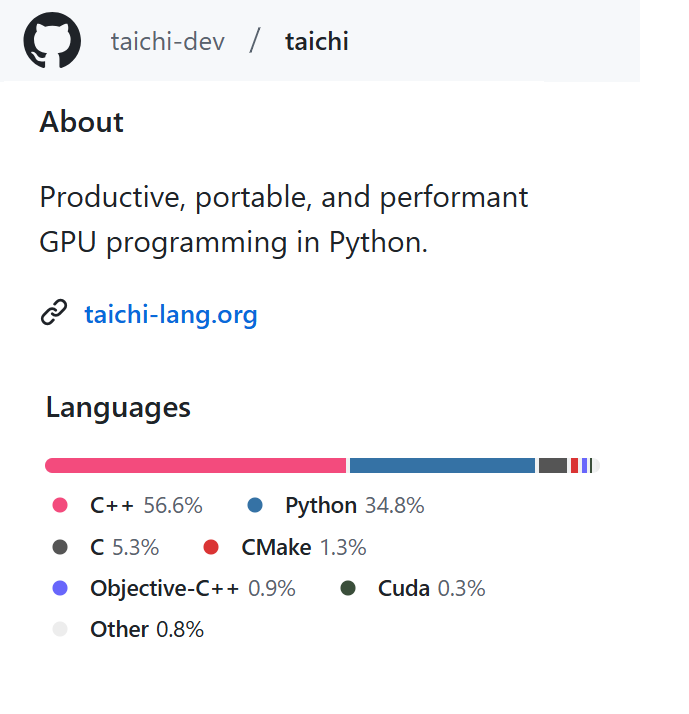

WTF?! This new open-source AI physics engine is absolutely insane! 🤯 Genesis combines ultra-fast simulation with generative capabilities to create dynamic 4D worlds for robotics and physics.

TL;DR:

- 🚀 430,000x faster than real time for physics simulation; processes 43M FPS on a single RTX 4090
- 🐍 Built in pure Python, 10-80x faster than existing GPU solutions like Isaac Gym
- 🌐 Cross-platform support: Linux, macOS, and Windows, with CPU, NVIDIA, AMD, and Apple Metal backends
- 🧪 Unified framework combining multiple physics solvers: Rigid body, MPM, SPH, FEM, PBD, Stable Fluid
- 🤖 Extensive robot support: arms, legged robots, drones, and soft robots; loads MJCF, URDF, obj, and glb files
- 🎨 Built-in photorealistic ray-tracing rendering
- ⚡ Takes only 26 seconds to train real-world transferable robot locomotion policies
- 💻 Simple installation via pip: `pip install genesis-world`
- 🤝 Physics engine and simulation platform are fully open-sourced
- 🔜 `.generate` method/generative framework coming soon
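The two headline speed numbers can be cross-checked against each other. Here is a rough sketch in Python, assuming the real-time factor is defined as simulated time divided by wall-clock time and that each "frame" is one physics step (both are my assumptions, not something the announcement spells out). The helper `implied_timestep` is a hypothetical name used only for this illustration.

```python
def implied_timestep(frames_per_second: float, realtime_factor: float) -> float:
    """Simulated seconds advanced per frame.

    Assumes realtime_factor = frames_per_second * dt, i.e. the speed-up is
    simulated time over wall-clock time (an assumption, see the text above).
    """
    return realtime_factor / frames_per_second


fps = 43_000_000    # claimed frames per wall-clock second on one RTX 4090
speedup = 430_000   # claimed speed-up over real time

dt = implied_timestep(fps, speedup)
print(f"implied step: {dt * 1000:.0f} ms ({1 / dt:.0f} Hz)")
# prints "implied step: 10 ms (100 Hz)"
```

So under those assumptions the two figures agree with each other if every frame advances the simulation by about 10 ms, which is a plausible physics step size. Whether the 43M FPS is aggregated over many parallel environments is not stated in the post.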