Ethan Dyer

Introducing Claude Opus 4.6. Our smartest model got an upgrade. Opus 4.6 plans more carefully, sustains agentic tasks for longer, operates reliably in massive codebases, and catches its own mistakes. It’s also our first Opus-class model with 1M token context in beta.

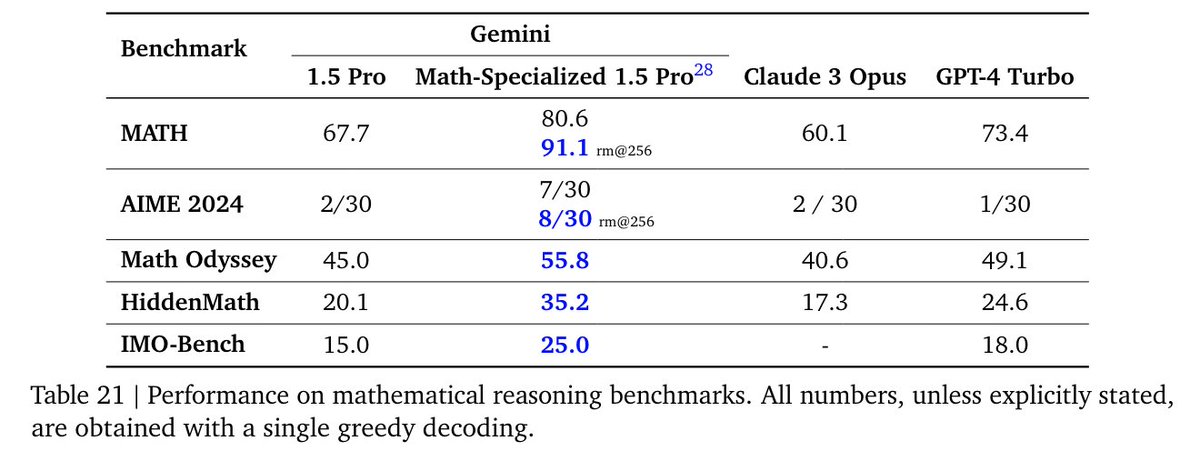

Today we have published our updated Gemini 1.5 Model Technical Report. As @JeffDean highlights, we have made significant progress in Gemini 1.5 Pro across all key benchmarks; TL;DR: 1.5 Pro > 1.0 Ultra, 1.5 Flash (our fastest model) ~= 1.0 Ultra. As a math undergrad, our dramatic results in mathematics are particularly exciting to me! In section 7 of the tech report, we present new results on a math-specialised variant of Gemini 1.5 Pro which performs strongly on competition-level math problems, including a breakthrough performance of 91.1% on the Hendrycks MATH benchmark without tool-use (examples below 🧵). Gemini 1.5 is widely available; try it out for free here: aistudio.google.com & read the full tech report here: goo.gle/GeminiV1-5

The fact that most individual neurons are uninterpretable presents a serious roadblock to a mechanistic understanding of language models. We demonstrate a method for decomposing groups of neurons into interpretable features with the potential to move past that roadblock.

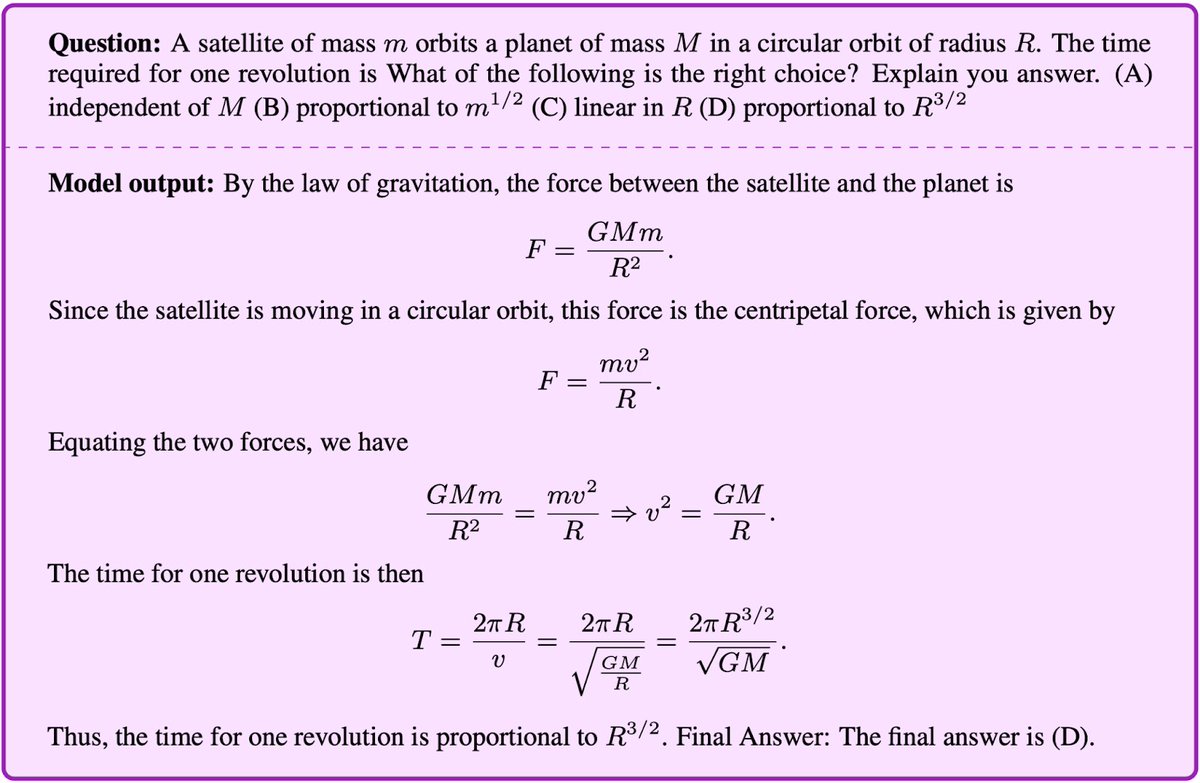

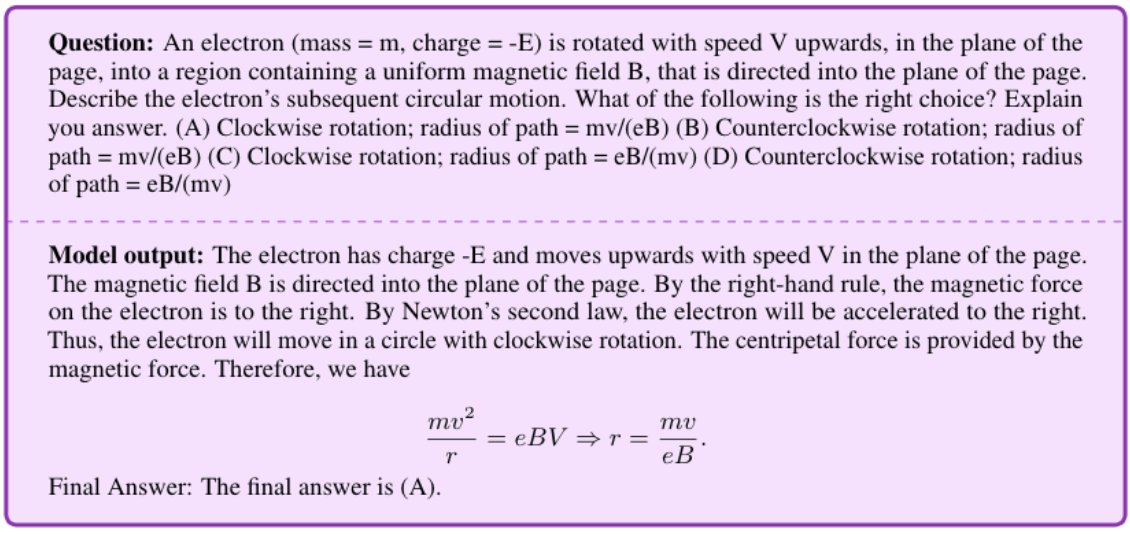

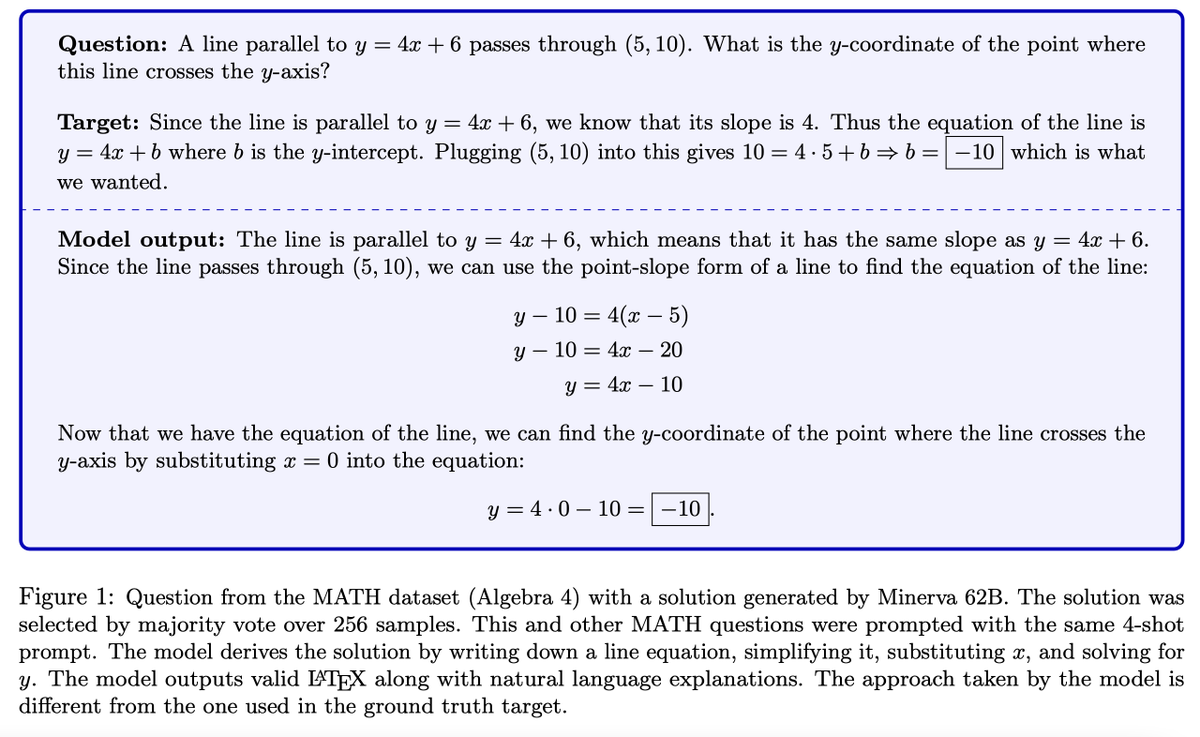

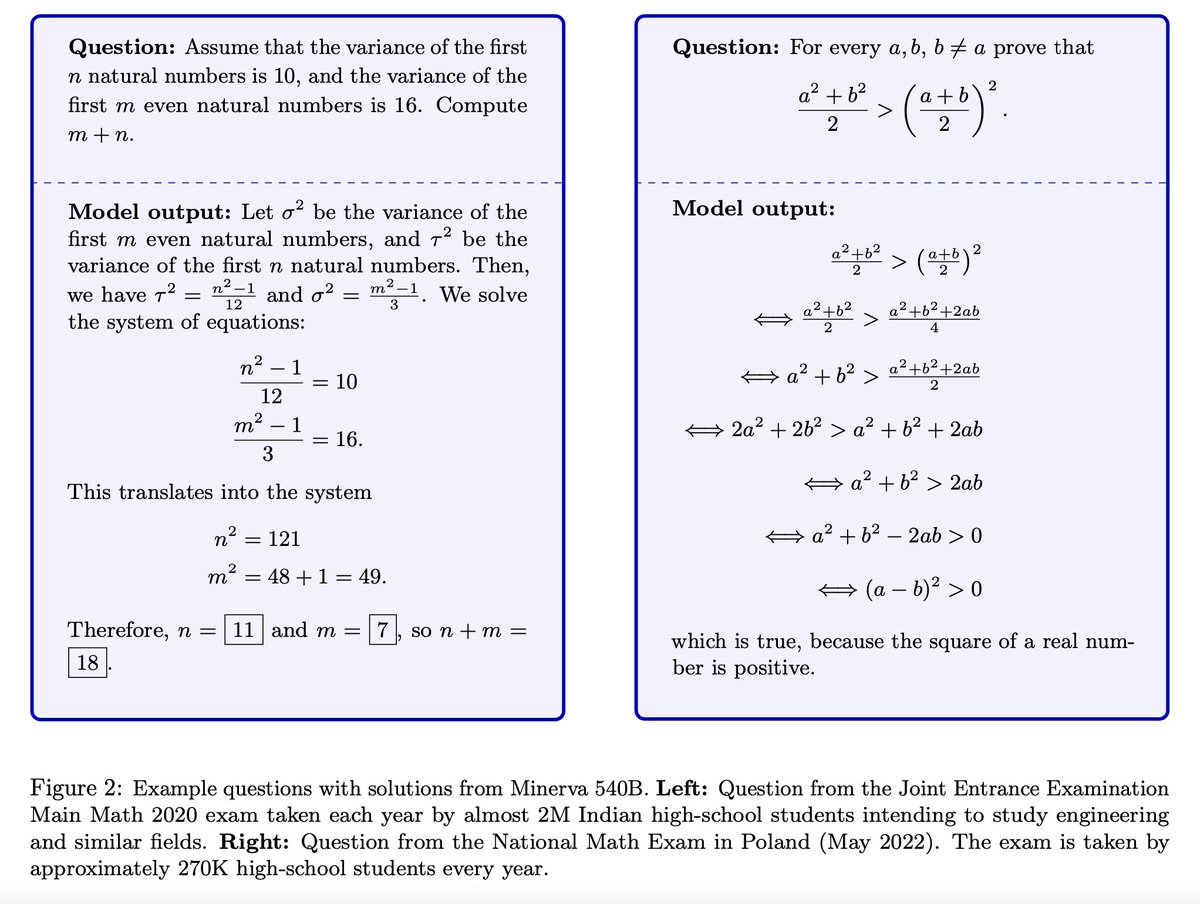

Very excited to present Minerva🦉: a language model capable of solving mathematical questions using step-by-step natural language reasoning. Combining scale, data and others dramatically improves performance on the STEM benchmarks MATH and MMLU-STEM. goo.gle/3yGpTN7

1/ Super excited to introduce #Minerva 🦉(goo.gle/3yGpTN7). Minerva was trained on math and science found on the web and can solve many multi-step quantitative reasoning problems.
