Jack Brown

51 posts

@jackbr513

automating testing @getlark (YC S25). prev @stripe. climb with me https://t.co/fN5BghnSes

@bcherny @UltraLinx please at least fix the uncontrollable scrolling/flickering before the next 3000 features

Trump's approval rating just fell below 40 percent in our tracking for the first time. And his net approval rating is now -17.4, also a new low and down about 5 points over the past several weeks.

ICE detains a mother at the airport as she travels with her young daughter. First reported kidnapping at an airport since Trump ordered ICE agents to report. The agent refuses to show ID. "I don't know who you are!" a witness yells. "You could be someone kidnapping her!" The agent continues to refuse to show ID until bystanders agree they should call police to report a kidnapping. The incident occurred at San Francisco International Airport, Gate E2, on March 22, 2026, at 10 p.m. #DemsUnited

SFPD Statement on Sunday’s Incident at SFO

things are about to get interesting from here on

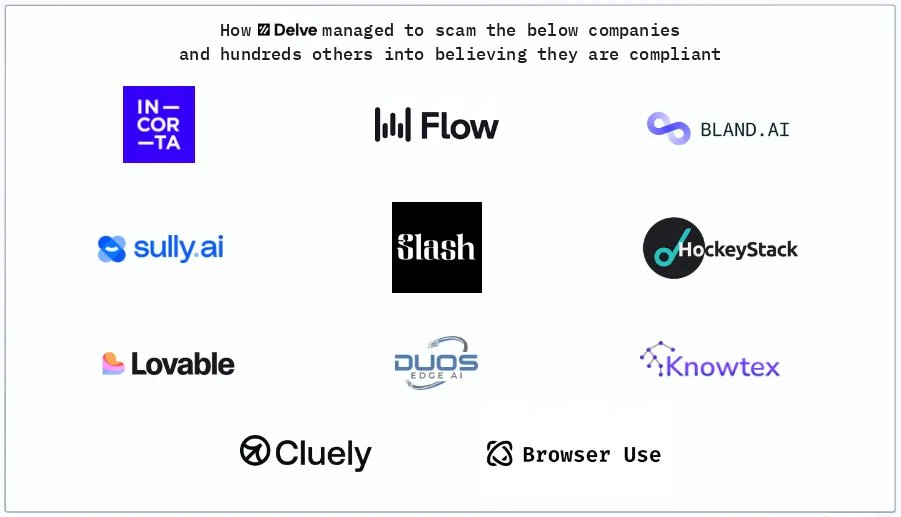

A detailed and brutal look at the tactics of buzzy AI compliance startup Delve "Delve built a machine designed to make clients complicit without their knowledge, to manufacture plausible deniability while producing exactly the opposite." substack.com/home/post/p-19…

A detailed and brutal look at the tactics of buzzy AI compliance startup Delve "Delve built a machine designed to make clients complicit without their knowledge, to manufacture plausible deniability while producing exactly the opposite." substack.com/home/post/p-19…

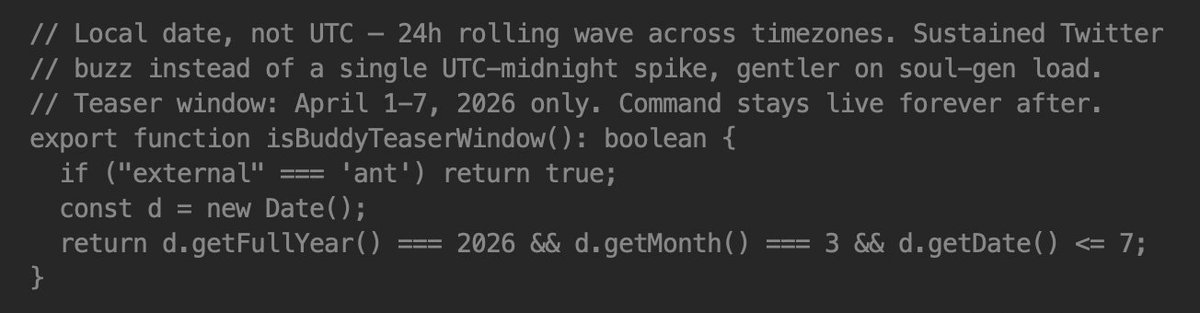

Run agents like Claude Code in any sandbox.

We just open sourced runtimeuse.com - a framework for running agents in sandboxes without building all the infra yourself.

Agents + sandboxes are powerful. The hard part is everything around them:
- streaming messages
- file exchanges
- cancelling jobs mid-run, etc.

We hit this while building @getlark, so we open sourced our runtime.

Try it: `npx -y runtimeuse --agent=claude`

Feedback welcome 👇