David Cramer

33K posts

@zeeg

fractional executive, full time founder 🐛 https://t.co/Z9bOh4b9Kb ~$10,000k/m 🍺 https://t.co/Z9pUTnVBto $0k/m 🎲 https://t.co/3ADUqihLwW $0k/m 💭 https://t.co/KafPebObuI $0k/m

San Francisco · Joined August 2008

740 Following · 23.8K Followers

Pinned Tweet

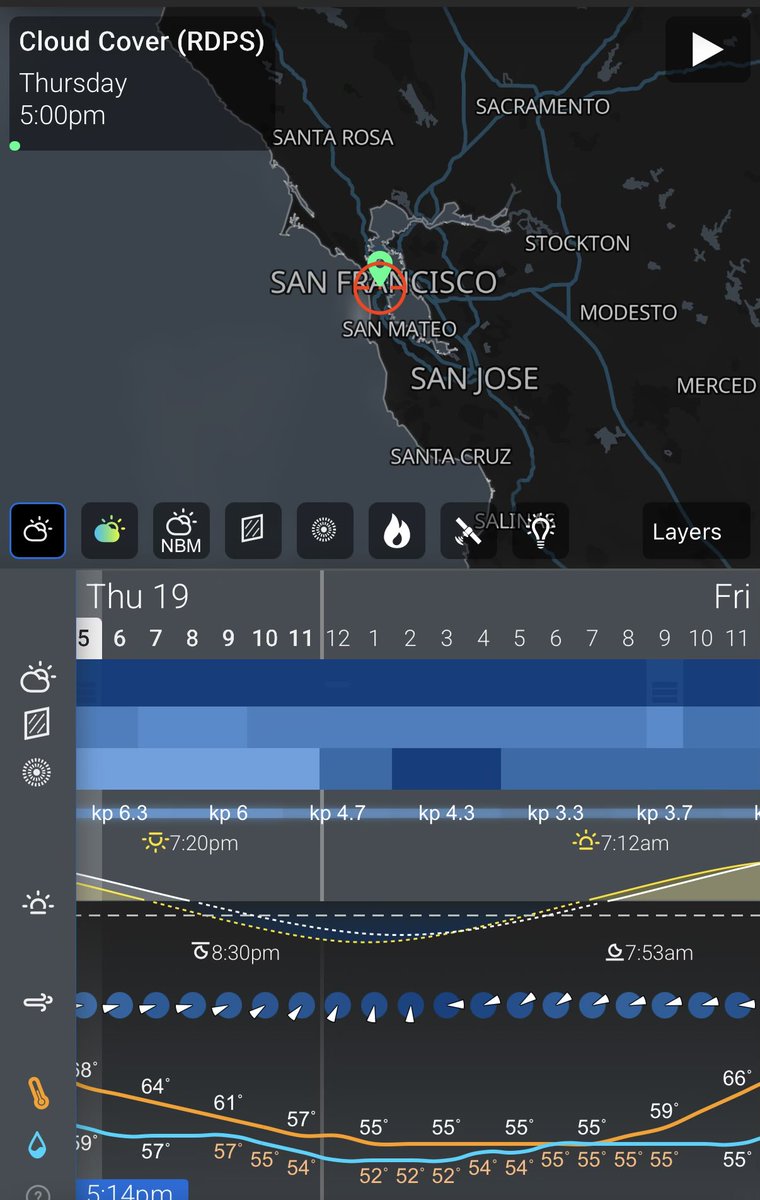

Gonna boot up the telescope again tonight. Need to get some calibration frames and fix some of the software.

should be live here when it’s up: cra.mr/astro

1) not surprising whatsoever

2) this is exactly what I keep saying about models not being powerful enough today

the fact that they can do so much with lossy compression is amazing, but there's no magic here

imo (for transformers) context windows need to be 1-2 orders of magnitude larger for the future people keep saying is reality, and even then the compute is probably not worth it

Lossfunk @lossfunk

🚨 Shocking: Frontier LLMs score 85-95% on standard coding benchmarks. We gave them equivalent problems in languages they couldn't have memorized. They collapsed to 0-11%. Presenting EsoLang-Bench. Accepted to the Logical Reasoning and ICBINB workshops at ICLR 2026 🧵

@_ChrisCovington @0xblacklight @dexhorthy Careful around anyone who names themselves after one of my open source projects 😅

David Cramer retweeted

Hey. Sentry supports @EffectTS_ now. 😎

github.com/getsentry/sent…

@jiaweiou @miguelbetegon @sentry We should def detect TTY here - I think it’s fine for humans but when it’s not an interactive terminal we can collapse it
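A minimal sketch of the TTY check described in the reply above, assuming a Python CLI. Everything here is hypothetical: `my-cli`, `FULL_LOGO`, `COMPACT_LOGO`, `is_interactive`, `banner` and `print_help` are illustrative names, not Sentry's actual CLI code. The only real API relied on is the standard `isatty()` method on file-like streams.

```python
import sys
from typing import Optional, TextIO

# Hypothetical banner; the real CLI logo from the thread is not reproduced here.
FULL_LOGO = "\n".join([
    "+------------------+",
    "|   my-cli  v1.0   |",
    "+------------------+",
])
# Hypothetical one-line fallback used when output is not going to a terminal.
COMPACT_LOGO = "my-cli v1.0"


def is_interactive(stream: Optional[TextIO] = None) -> bool:
    """Return True only when the stream is attached to a terminal."""
    stream = sys.stdout if stream is None else stream
    isatty = getattr(stream, "isatty", None)
    # Piped or captured output (e.g. an agent harness reading stdout) is not a TTY.
    return bool(isatty is not None and isatty())


def banner(interactive: bool) -> str:
    """Full logo for a human at a terminal, compact logo otherwise."""
    return FULL_LOGO if interactive else COMPACT_LOGO


def print_help(stream: Optional[TextIO] = None) -> None:
    """Print a hypothetical help screen, collapsing the logo when not on a TTY."""
    stream = sys.stdout if stream is None else stream
    print(banner(is_interactive(stream)), file=stream)
    print("usage: my-cli <command> [options]", file=stream)


if __name__ == "__main__":
    print_help()
```

Saved as a hypothetical `cli.py`, running `python cli.py` in a terminal prints the boxed logo, while `python cli.py | cat` prints only the one-line banner. That keeps a harness's first `help` call from spending context-window tokens on ASCII art.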

@miguelbetegon @sentry I know. without instructions/skills, the first "instinct" of a harness is to call `help`, so that logo is in the context window.

maybe partly my issue. I don't use claude code and am too lazy to write skills.

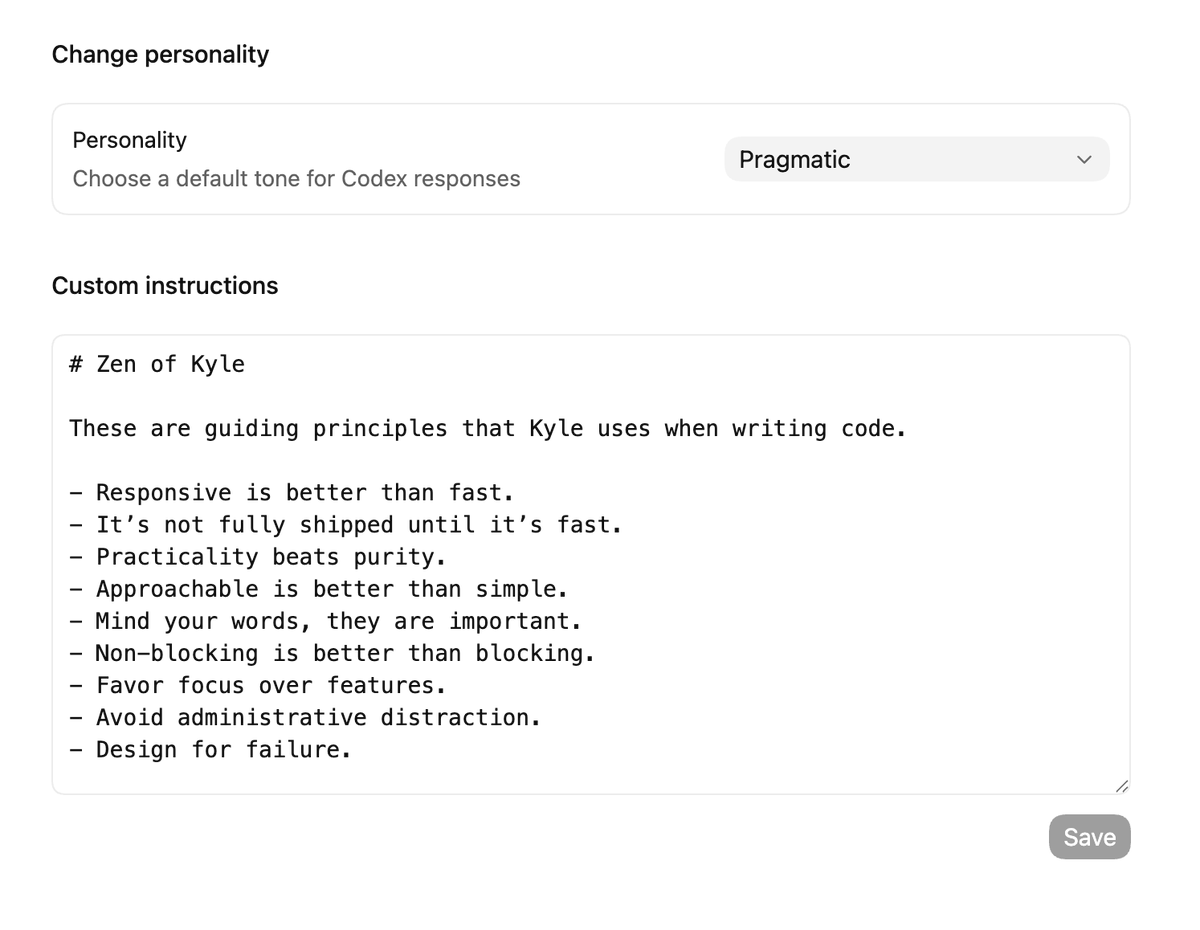

Way back when, I created The Zen of GitHub to direct the taste of a growing software company. I'm still really proud of it.

Fast forward twelve years. Now it's the basis for my agent instructions (with some edits). What a wild end-run.

warpspire.com/posts/taste

David Cramer retweeted

Very disappointed to learn that they used a python templating engine to generate SOC2 reports vs Opus 4.6

erin griffith @eringriffith

A detailed and brutal look at the tactics of buzzy AI compliance startup Delve "Delve built a machine designed to make clients complicit without their knowledge, to manufacture plausible deniability while producing exactly the opposite." substack.com/home/post/p-19…

opencode 1.3.0 will no longer autoload the claude max plugin

we did our best to convince anthropic to support developer choice but they sent lawyers

it's your right to access services however you wish but it is also their right to block whoever they want

we can't maintain an official plugin so it's been removed from github and marked deprecated on npm

appreciate our partners at openai, github and gitlab who are going the other direction and supporting developer freedom

@djgrant_ it's definitely not _just_ larger context windows, i agree with that

and larger might not be the solution. it's very possible transformers cannot solve this at all.

either way the amount of information it can manage is insufficient

@zeeg I don't think larger context windows solve this. You give an LLM all the information and it can be completely in the dark about what a thing is ontologically. It's like a DVD player that has no idea what the film is about.

David Cramer retweeted

At the Agents Anonymous SF meetup last night we did another 🙋 AI usage survey, here are the est. numbers:

Usage stats:

- 90% Claude Code

- 60% Codex

- 30% Cursor

- 20% OpenCode

- 10% Conductor

- 10% Own agent/Pi

80% have prompted a coding agent from mobile

50% have not handwritten a single line of code this year

99% think they're more productive now vs. before agentic coding

Parallel agent usage:

- 90% 3+

- 70% 4+

- 50% 5+

- 5% 10

Also want to give a ginormous thank you to our incredible speaker lineup:

- @jonas_nelle & @alexirobbins from @cursor_ai

- @southpolesteve from @Cloudflare

- @LewisJEllis from @ycombinator

- @aidandcunniffe from Git AI

- 🦞 @steipete from @openclaw

Hope to see you all at the next one! 🫡

@0xblacklight Yeah I agree it’s both recall and quantity and neither problem improved much. Codex skill calling is fairly impressive on recall but it still struggles more as time goes on just like everything else

yeah to be fair if we can get 1M-2M context AND an OOM better-quality instruction adherence at that length then we are really cooking with gas

I think right now folks have the mindset of 'longer context will save us' but longer context really doesn't help without better instruction following

e.g. I have so far been generally unimpressed with Opus 1M, even at shorter lengths it seems to attend less to instructions that regular opus would handle fine

@deepdrpt They can be totally helpful and also not capable of the impossible ;)