Pinned Tweet

Lin Feng

127 posts

@lf4096

AI believer. Building the OS where agents live, remember, and work as teams. 🇸🇬

Joined December 2022

79 Following, 54 Followers

Cursor is thriving. Cursor is collapsing.

Both can be true.

On paper, Cursor looks unstoppable: ARR went from $1B to $2B in 3 months. One of the fastest ramps in software history.

But Claude Code went from launch to $2.5B ARR in 8 months. Teams like Valon moved 90+ employees off Cursor entirely.

The important part is that revenue reacts slower than developer behavior.

Individual developers can switch tools in one afternoon. One person, one credit card, done. Enterprises can't. Contracts, security review, procurement, training. So developer migration gets masked by enterprise revenue for a while.

But companies don't really choose coding tools. Developers do. IT usually just formalizes that decision later.

So Cursor can keep posting incredible numbers while the users who made it famous are already experimenting with something else.

This is why "Cursor is thriving" and "Cursor is collapsing" can both be true at the same time.

Cursor won the phase where humans stayed inside the IDE and AI helped them write code. But if the next phase is humans describing intent while agents do the work, then the center of gravity moves away from the editor itself.

To its credit, Cursor sees this. It’s pushing Cloud Agent, building a model-neutral orchestration layer, and training its own model. It knows the future may not belong to the IDE alone.

Cursor’s fate hinges on a race between autonomous coding AI and its own transformation. The real question is whether it can change faster than the category evolving around it.

@Polymarket A new social contract should mean AI benefits flow to most people, not just a handful of AI companies.

@pbteja1998 @openclaw Same here. gpt-5.3-codex keeps saying what it will do but never does it. gpt-5.4 is better, but still not Opus.

Tried GPT 5.3 Codex with @openclaw, and it's so bad...

and it also is giving me the famous... "If you want, I'll now...." thing at the end 😵

Not sure what's the best model to use with @openclaw now...

I started a new session and asked Sonnet that same question; its response is easily 10x better.

@thespearing @steipete @AnthropicAI I understand the cost pressure on Anthropic, but OpenClaw was arguably the biggest driver of Claude subscription growth recently. Cutting it off means losing exactly the users who were willing to pay the most.

“Hello, this is @AnthropicAI, and we know you’ve been building the most amazing things for the last 4 months. However, the truth is we don’t have the infrastructure to keep up with the power of open source AI agents. We’ve decided to confine you to our apps that can only do about 25% of what you’re currently doing.”

No thanks.

Subscription canceled.

New models have already been configured.

Thanks @steipete and @openclaw for giving us so many options.

@damengchen 1. Claude Code can connect to non-Claude models without the source code.

2. Claude Code is strong largely because Opus is strong.

LiteLLM hit by a supply chain attack on PyPI.

Axios npm account hijacked and shipped malware.

Anthropic accidentally leaked Claude Code via npm.

The entire AI toolchain runs on package registries that keep getting compromised.

Now we're building AI skill marketplaces on top of that same model.

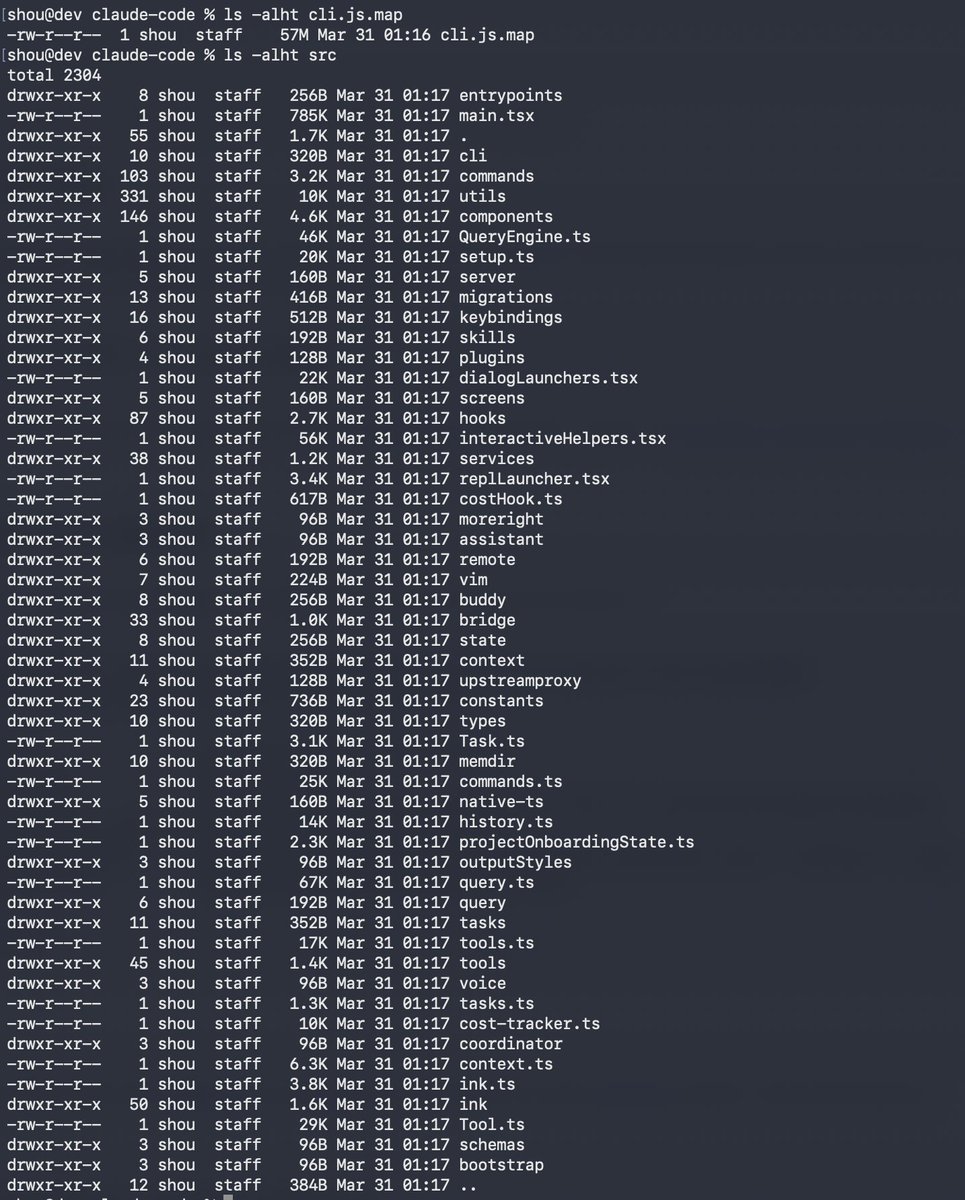

Claude Code source code has been leaked via a map file in their npm registry!

Code: …a8527898604c1bbb12468b1581d95e.r2.dev/src.zip

The most powerful AI story I read today: GitLab co-founder Sid Sijbrandij used AI to fight his own cancer when doctors ran out of options.

Sid was diagnosed with osteosarcoma, a rare bone cancer in his spine. After surgery, radiation, and chemo, the cancer came back. His oncologist had nothing left to recommend. No treatments, no trials.

He quit his job and went founder mode on his cancer. Ran every diagnostic available, collected 25TB of genomic data, and started making his own drugs: a personalized mRNA vaccine, engineered T-cell therapies, and a CAR-T with a custom logic gate to avoid destroying his liver.

His geneticist (not a doctor) built a multi-agent AI system to analyze his genomic data. It found a protein target 10,000x overexpressed in his tumor that no lab had published on, invisible in standard tests because it's hydrophobic.

AI-driven single-cell sequencing revealed his cancer cells were rich in a protein called FAP. That led him to a doctor in Germany running an experimental therapy combining FAP-targeting with radioactive substances. Two treatments: 60% tumor necrosis, 20% shrinkage. The tumor detached from his spine and was surgically removed.

He's now building evenone.ventures to scale this approach for others. Right now, going founder mode on your own cancer requires resources most patients don't have. But AI is making personalized medicine possible at a speed and cost that could one day put this within reach for everyone.

Your headphones just became a personal translator in 70+ languages. 🎧✨

Google Translate’s “Live translate” with headphones is officially on iOS. We're also expanding this capability to more countries around the world for both @Android and iOS users.

To try it, open the Translate app, tap “Live translate” and connect your headphones.

Didn't expect monks to get replaced by AI before accountants 🙏

Ejaaz @cryptopunk7213

this is insane lol japan is running out of monks... so they're training AI robots called "buddharoid" to replace them 😂 (im not joking):

- japan's temples are closing because fewer priests are available to run them + aging population
- the solution: chatgpt robots trained on 1000+ years of buddhist scripture that answer your spiritual questions
- the robot even sits in religious prayer positions like an actual monk does.

you can literally have a conversation on life's deepest dilemmas with a robot as smart as the dalai lama

i cannot believe they're scaling these robots to run actual temples.

🚨 BREAKING: ANTHROPIC'S MOST POWERFUL MODEL JUST LEAKED

Anthropic accidentally left draft blog posts in a publicly accessible data cache. Cybersecurity researchers found them:

>new model called "Claude Mythos"

>also referred to as "Capybara"

>a brand new tier of model

>larger and more intelligent than Opus

Anthropic confirms it's real:

>"a step change"

>"the most capable we've built to date"

>"dramatically better at coding, reasoning, and cybersecurity"

>"currently far ahead of any other AI model in cyber capabilities"

so far ahead they're worried about it:

>"it presages an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders"

This is genuinely a big deal.

@AnthropicAI In my experience, auto mode blocks too much on deployment tasks and kills the workflow. I suggest adding an "auto-allow only" option: the classifier approves safe actions automatically, but falls back to asking the user instead of auto-denying.
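
The suggestion above can be sketched as a small decision policy. This is only an illustration under stated assumptions: `Verdict`, `Mode`, and `decide` are hypothetical names, not part of Claude Code, and the classifier is assumed to return a plain allow/deny verdict.

```python
from enum import Enum


class Verdict(Enum):
    """Hypothetical classifier output for a proposed action."""
    ALLOW = "allow"
    DENY = "deny"


class Mode(Enum):
    """Hypothetical permission modes (names are assumptions)."""
    AUTO = "auto"                        # classifier allows or denies on its own
    AUTO_ALLOW_ONLY = "auto-allow-only"  # proposed: classifier may only allow


def decide(mode: Mode, verdict: Verdict) -> str:
    """Return 'run', 'block', or 'ask_user' for a proposed action."""
    if verdict is Verdict.ALLOW:
        # Safe actions run without a prompt in both modes.
        return "run"
    if mode is Mode.AUTO_ALLOW_ONLY:
        # Instead of auto-denying, hand the decision back to the user.
        return "ask_user"
    return "block"
```

In this sketch the two modes differ only on a deny verdict, which is the case the reply says breaks deployment workflows.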

New on the Engineering Blog: How we designed Claude Code auto mode.

Many Claude Code users let Claude work without permission prompts. Auto mode is a safer middle ground: we built and tested classifiers that make approval decisions instead.

Read more: anthropic.com/engineering/cl…
