Nick Gates

In the AI Era, speed of execution is key to winning GW-scale deals worth tens of billions of dollars. In Abilene, TX, @CrusoeAI, @Lancium and @Oracle are moving remarkably fast to serve OpenAI. In barely 7 months, they simultaneously built six 103MW buildings!!! (1/4) 🧵

I’m excited to announce that @CrusoeAI has closed our Series E round of financing valuing the company at $10.4B to help us build the infrastructure of intelligence. This round was led by our incredible partners at Valor Equity Partners and @MubadalaCapital. Solving the scaling needs of AI is one of the greatest challenges of our generation. If you’re inspired by working on big and difficult problems, come and join us! We had an amazing group of investors in the round including @137ventures, @1789Capital, Activate Capital, @AltimeterCap, @Atreidesmgmt, BAM Elevate, DPR Construction, @OraGlobal, @Fidelity, @foundersfund, @FTI_US, @GalvanizeLLC, @LongJourneyVC, @lowercarbon, M37, MCJ, @nvidia, @RadicalVentures, @RibbitCapital, @SalesforceVC, @saquon, @sparkcapital, @stepstonegroup, @Supermicro, @TRowePrice, Tiger Global, @upper__90, @winklevosscap, @ziggcap crusoe.ai/resources/news…

Data center coalition PJM proposal includes a customer-enabled capacity commitment matched 1:1 with their generation ramp in the same LDA (e.g., PPA, uprate, supporting an asset that didn't clear the last auction, etc.) in exchange for expedited interconnection treatment

Big jump in the quarterly orders for aeros. Heavy-duty gas turbines remain strong too. Won't increase capacity (20GW run-rate by 2H26) until they have 4-5 years of capacity (80-100GW backlog). They will get to 60GW backlog by YE25. Will continue to drive price in meantime...
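The backlog figures in the post above are simple coverage arithmetic: backlog divided by annual run-rate gives years of sold-out production. A minimal sketch, assuming a constant 20GW/year run-rate (the function name `backlog_years` is hypothetical, used only for illustration):

```python
def backlog_years(backlog_gw: float, run_rate_gw_per_year: float) -> float:
    """Years of production covered by the backlog at a constant run-rate."""
    return backlog_gw / run_rate_gw_per_year


# Annual run-rate quoted in the post for 2H26 (assumed constant here).
RUN_RATE_GW_PER_YEAR = 20.0

# 60GW is the YE25 backlog from the post; 80-100GW is the stated
# threshold before capacity would be expanded.
for backlog_gw in (60.0, 80.0, 100.0):
    years = backlog_years(backlog_gw, RUN_RATE_GW_PER_YEAR)
    print(f"{backlog_gw:.0f}GW backlog -> {years:.1f} years of capacity")
```

On these numbers, the 60GW expected by YE25 covers about 3 years, so the backlog would need to grow by another 20-40GW to reach the 4-5 year threshold.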

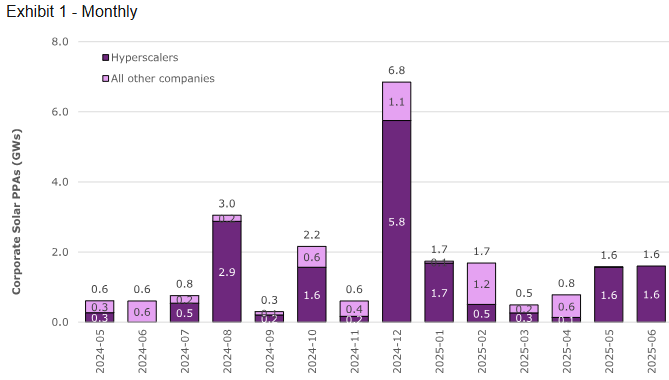

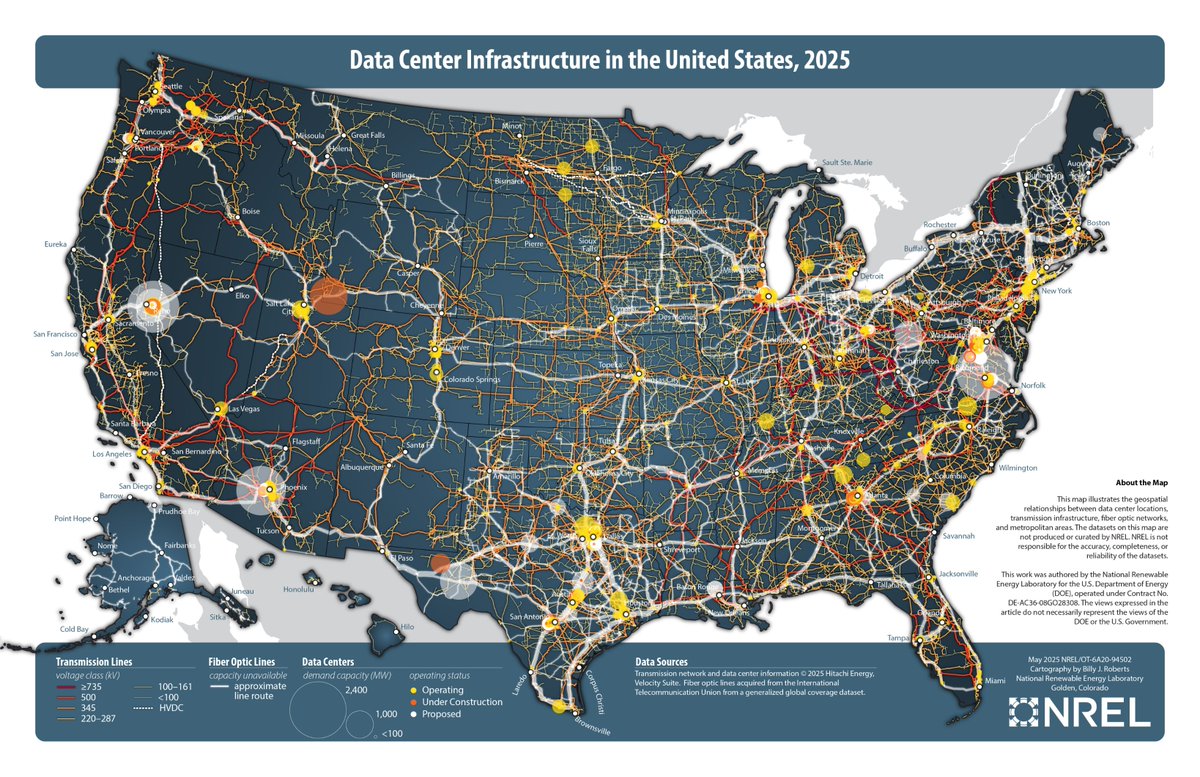

Broker insights from NEE developer day:
- Data centers driving growth: NEE forecasts US power demand to increase 55% from 3.8 to 5.9 thousand TWh between 2020-2040, with data centers accounting for approximately one-third of this growth.
- Large islanded power solutions are specialized: These 3-5GW solutions require customers to build backup systems and accept risks of potential stranded power assets.
- NEE's gas generation portfolio: Currently operates 25.9GW of gas-fired capacity, with 16.1GW built in the last 20 years (more than any US competitor).
- Construction costs have surged dramatically: CCGT from $800/kW (2021) to $2,400/kW (2030), gas peakers from $600/kW to $1,400/kW, with EPC costs increasing over 3x.
- Transmission development timelines: New AC lines require 3-4 years to complete, while HVDC lines need 5-6+ years.
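A quick sanity check on the figures quoted above, using only the numbers in the post. The helper names `pct_change` and `multiple` are hypothetical, and the data-center share is taken as exactly one-third, which the post gives only as an approximation:

```python
def pct_change(start: float, end: float) -> float:
    """Percentage change from start to end."""
    return (end - start) / start * 100.0


def multiple(start: float, end: float) -> float:
    """Ratio of end to start, e.g. 3.0 means costs tripled."""
    return end / start


# US power demand, thousand TWh, 2020 -> 2040 (figures from the post).
demand_growth_pct = pct_change(3.8, 5.9)
# Assumption: data centers are exactly one-third of the increase.
data_center_twh_k = (5.9 - 3.8) / 3.0

# Construction cost per kW (figures from the post).
ccgt_multiple = multiple(800.0, 2400.0)
peaker_multiple = multiple(600.0, 1400.0)

print(f"Demand growth: {demand_growth_pct:.1f}%")
print(f"Data center share of growth: {data_center_twh_k:.2f} thousand TWh")
print(f"CCGT cost multiple: {ccgt_multiple:.2f}x")
print(f"Gas peaker cost multiple: {peaker_multiple:.2f}x")
```

The demand figure checks out at roughly 55%. The quoted CCGT numbers work out to 3x on an all-in $/kW basis and the peaker numbers to about 2.3x; the "over 3x" in the post refers to EPC costs specifically.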

The issue with these cost estimates for solar + BESS is that everyone is focused on 4-hour battery duration as the standard measure. That is simply not sufficient. To be a true replacement for baseload CCGT, you legitimately need at least 16 hours, which raises costs dramatically, to significantly more than the ~$2,000/kW for CCGT. The CCGT costs themselves will also go up over the next five years, but still not into the range of solar + BESS.

Seen via BBG but Information reporting: Meta plans $200B+ data center for AI. Campus could require 5-7 GW of power and would significantly exceed the $10B Louisiana facility previously announced. This massive investment aims to support AI features across Meta's apps and compete with OpenAI's expansion.

@clawrence @duncancampbell Let me put it this way...the ONLY thing impressive about the solar industry is its remarkable ability to retain top level PR, lawyers, and lobbyists. This is objectively true. And the fact that you ran away offended within seconds of hearing this truth just confirms it.