0.005 Seconds (3/694)


@seconds_0

human first systems are the only thing left built https://t.co/DSD2mZtuap

What game is this?🚀

We just released Claude Code channels, which allows you to control your Claude Code session through select MCPs, starting with Telegram and Discord. Use this to message Claude Code directly from your phone.

New libidomaxxing method just dropped

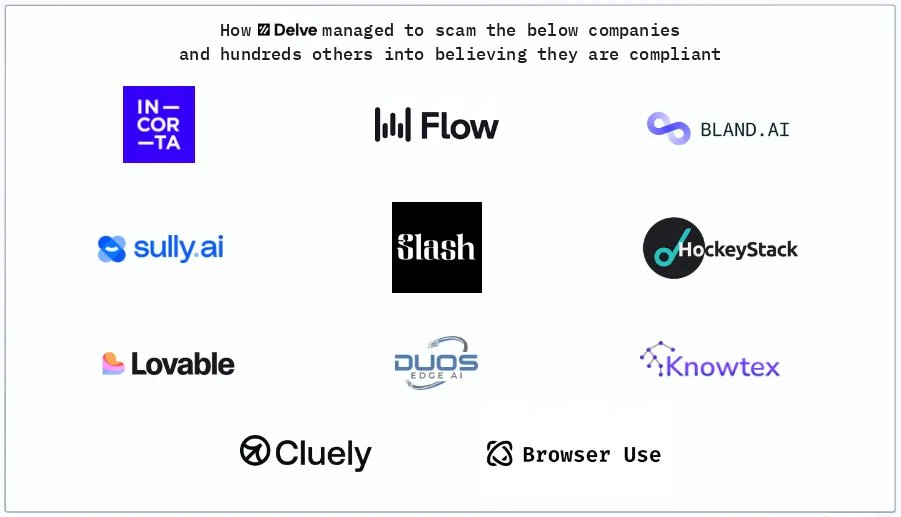

A detailed and brutal look at the tactics of buzzy AI compliance startup Delve: "Delve built a machine designed to make clients complicit without their knowledge, to manufacture plausible deniability while producing exactly the opposite." substack.com/home/post/p-19…

This is not good: People have learned that GLP-1s are really effective, so if they're not losing weight, they know they're in the placebo group. So these people getting placebos are getting mad and leaving the trials.

I have no idea what "specialized model for context compaction" means, and I have like 5 papers and announcements to read before I can think about this. It’s crazy that for even a single model we may have a whole ecosystem of specialized models for optimization: a spec decode model, a compaction model. What comes after that?