sidecus

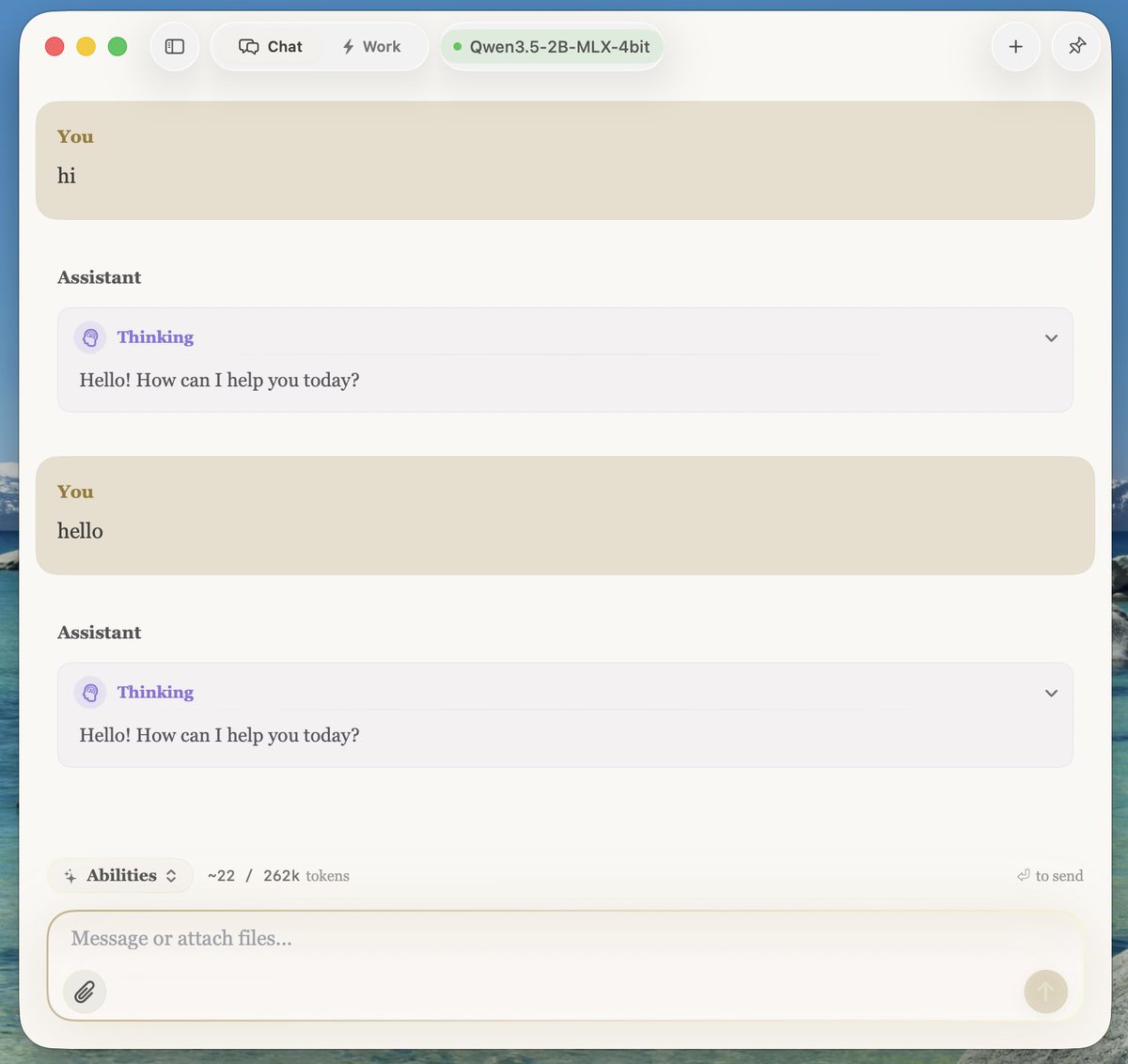

🚀 Introducing the Qwen 3.5 Small Model Series

Qwen3.5-0.8B · Qwen3.5-2B · Qwen3.5-4B · Qwen3.5-9B

✨ More intelligence, less compute.

These small models are built on the same Qwen3.5 foundation (native multimodal, improved architecture, scaled RL):

• 0.8B / 2B → tiny, fast, great for edge devices
• 4B → a surprisingly strong multimodal base for lightweight agents
• 9B → compact, but already closing the gap with much larger models

And yes, we're also releasing the Base models. We hope this better supports research, experimentation, and real-world industrial innovation.

Hugging Face: huggingface.co/collections/Qw…
ModelScope: modelscope.cn/collections/Qw…

Why is Rachel @Maddow intentionally spreading disinformation? She falsely claimed President Trump called coronavirus a "hoax." He did not, and the lie has been debunked by 5 independent fact checkers. Will she apologize for lying to her viewers? checkyourfact.com/2020/02/29/fac…