Pinned Tweet

Osaurus

815 posts

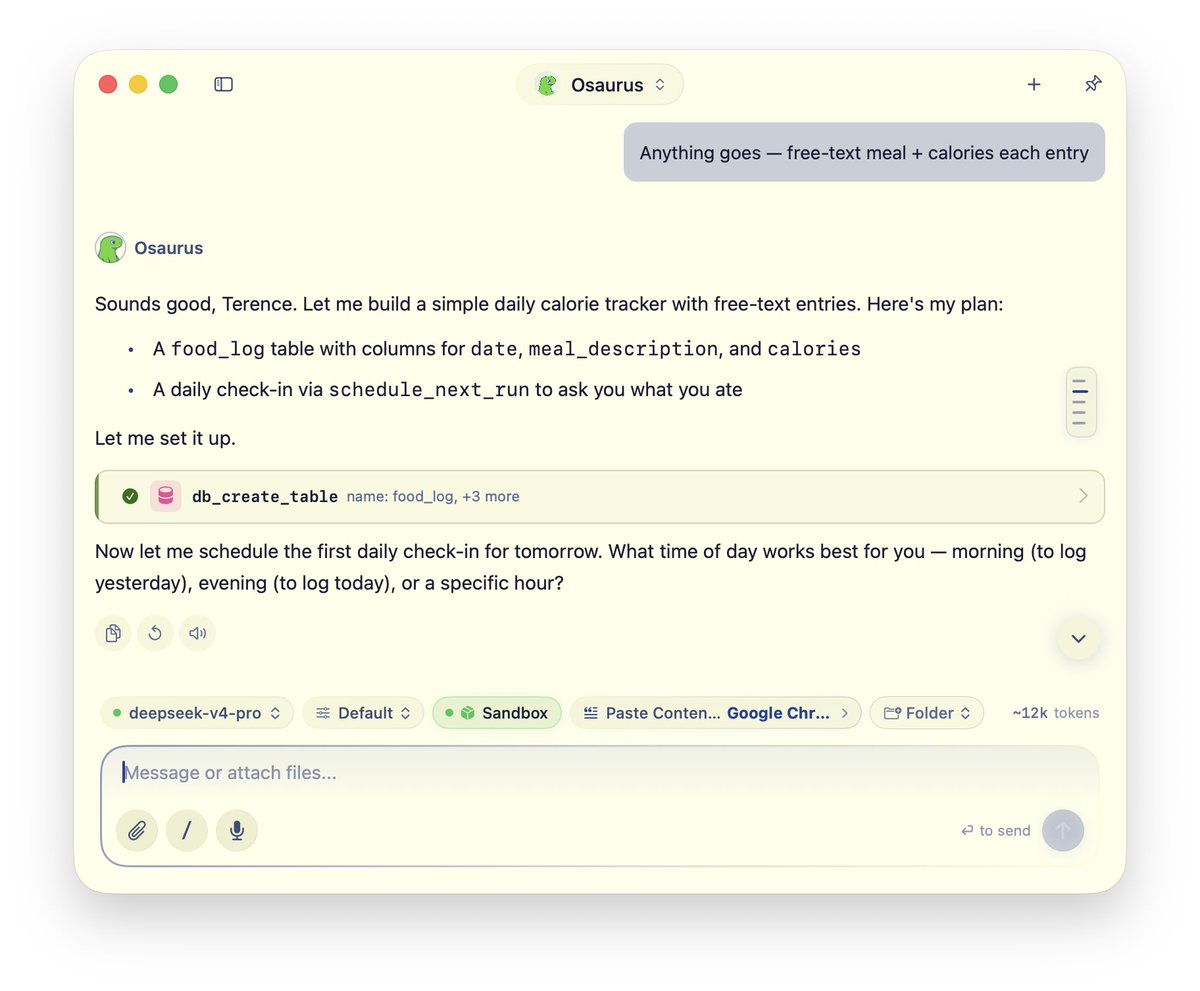

Osaurus

@OsaurusAI

Own your AI. Agents that remember, execute code in isolated VMs, and stay reachable from anywhere -- all on your Mac. Any model. No cloud required. Open source.

Joined May 2025

15 Following, 6.1K Followers

@icefrog_sol @TechCrunch Local-first everything, inference is local/cloud agnostic. There's no backend, everything is running locally.

Osaurus retweeted

Osaurus brings both local and cloud AI models to your Mac techcrunch.com/2026/05/15/osa…

@PsudoMike @TechCrunch You can choose either as the default. Osaurus also ships with its own router, which is hosted locally.

@TechCrunch The real question is the routing logic. Most local plus cloud apps default to cloud and use local as fallback. Flipping that default changes battery and latency assumptions overnight. Curious which way Osaurus leans.

Models get commoditized.

The harness compounds.

Thanks @SarahPerezTC for the writeup.

TechCrunch@TechCrunch

Osaurus brings both local and cloud AI models to your Mac techcrunch.com/2026/05/15/osa…

Osaurus retweeted

🧵 1/4 You can run Hindsight (an AI memory layer) entirely locally — no cloud LLM required. Here's how to wire it up with @OsaurusAI, a local OpenAI-compatible model server. 🔒

Osaurus retweeted

If you want to beat Claude at Legal AI, make your SaaS work on local AI inference. Clients' data then never leaves their control.

I told @MaxJunestrand to create a "Legora Server" earlier this year.

Offline = inevitable.

Happy to advise anyone on how to do it.

"can you order me a box of tissues from amazon?"

Grok 4.3 did the rest.

Elon Musk@elonmusk

Grok 4.3

@InsiderPresider Sometimes you need that box of tissues when you make breakthroughs

@OsaurusAI It is impressive that the AI finally mastered the art of solving your minor inconvenience while the rest of the world waits for it to solve the big ones.
