Dzmitry Pletnikau

1.4K posts

@spring_stream

Curious about how the world works.

I hate tmux. It's so incredibly user-unfriendly. The shortcuts make no sense. I wish someone would make a better tmux. Even just logging into tmux, attaching the screen, is an illogical hell to type. Again, I hate tmux, it's so shit.

I see things like /simplify and the existence of code review and bug finding AIs. I have to ask, why do these things exist? Why doesn't the coding agent just naturally do these things? I'm sure there's a good answer. Can someone help me understand?

@itsandrewgao In 2026, "have a basic fucking clue what you are doing" is being presented as secret lore. What a time.

There are nearly no good reasons for an AI to ever impersonate a human. Making that illegal in as many countries as possible, and improving state capacity to enforce it, would be a good trial balloon for human civilization's ability to ban negative uses of AI.

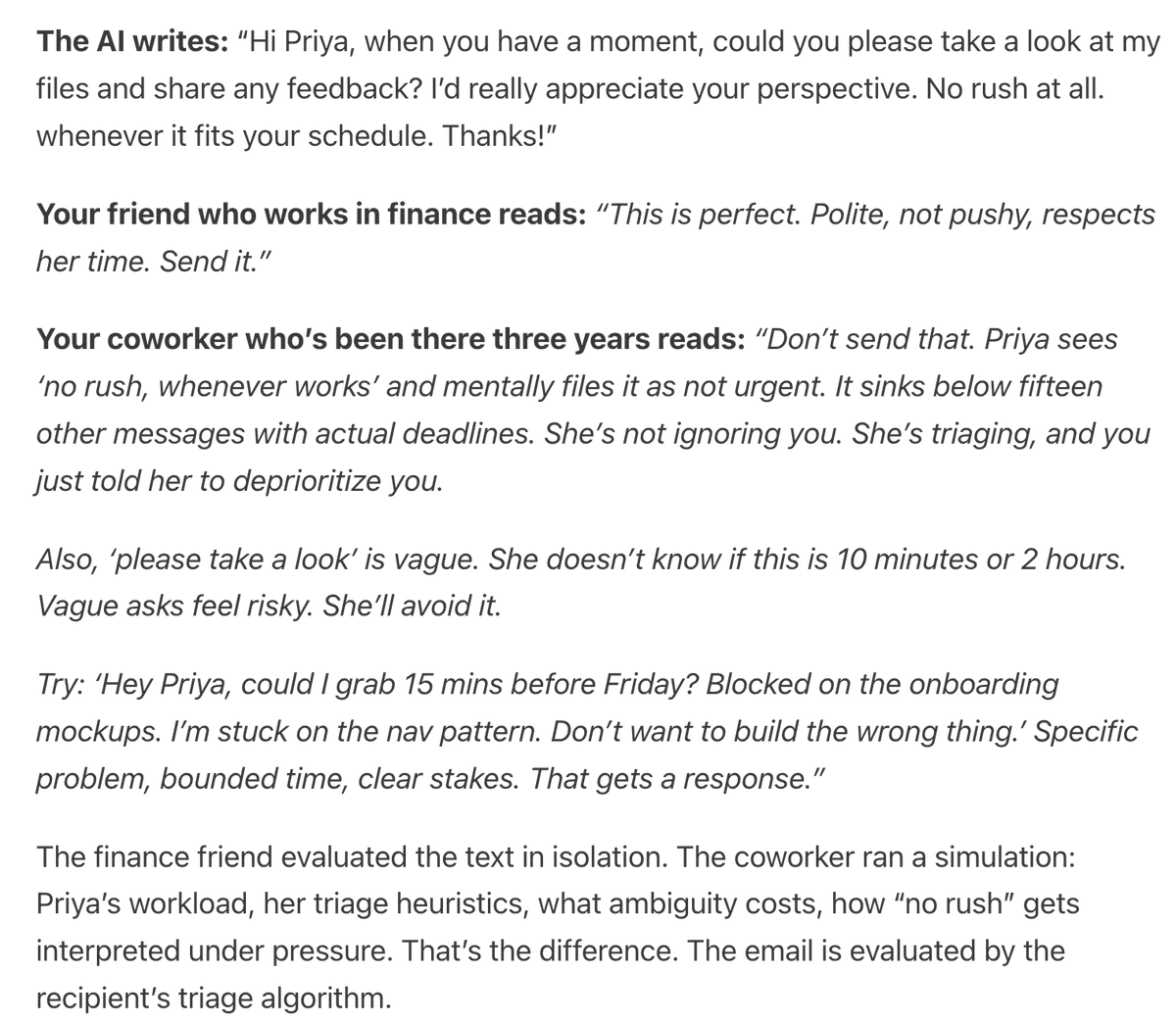

AI assistants like Claude can seem shockingly human—expressing joy or distress, and using anthropomorphic language to describe themselves. Why? In a new post we describe a theory that explains why AIs act like humans: the persona selection model. anthropic.com/research/perso…

Reached the stage of parallel agent psychosis where I've lost a whole feature - I know I had it yesterday, but I can't seem to find the branch or worktree or cloud instance or checkout with it in