Bert Maher

433 posts

@tensorbert

I’m a software engineer building high-performance kernels and compilers at Anthropic! Previously at Facebook/Meta (PyTorch, HHVM, ReDex)

Technology and the future of our industry will be defined by two things: frontier models, and the products through which they are experienced. For some time, I’ve been thinking about how we best tackle these huge challenges, and today I’m excited to be evolving our structure at Microsoft AI, ensuring we’re positioned to succeed in both. I came to Microsoft with an overriding mission: to create Superintelligence that delivers a transformative, positive impact for millions of people. This requires us to build frontier models, at scale, pushing the boundaries of what’s possible. Everything else follows from this. It's the foundation for our future as a company. With our ambitious, long-term frontier scale compute roadmap locked, we now have everything we need to build truly SOTA models. The next phase of this plan is to restructure our organization to enable me to focus all my energy on our Superintelligence efforts and be able to deliver world class models for Microsoft over the next 5 years. These models will enable us to build enterprise tuned lineages that help improve all our products across the company. They’ll also enable us to deliver the COGS efficiencies necessary to be able to serve AI workloads at the immense scale required in the coming years. Achieving all this will be a huge challenge, and I’m committing everything we have – and I have personally – to make it happen. To that end, I’ve been working hard with other leaders in the background for a while now to define a strategy to unify Copilot by bringing together the Consumer and Commercial efforts as one. We all know this makes sense. Every user – whether at home or at work – will be able to enjoy the full benefit of what we are all building. Today, we’re combining these organizations into a single, unified Copilot org. 
@JacobAndreou has demonstrated himself to be an outstanding leader for the product experience and clearly has the product instincts, the operational range, and the conviction to make Copilot a great success. Jacob will retain a dotted line to me, and I’ll stay directly involved in much of the day-to-day operation of MAI and supporting Jacob to drive all areas of product strategy. To ensure that the models we build and the products we ship are mutually reinforcing, we are establishing a Copilot Leadership Team that includes me, Jacob, Charles Lamanna, Perry Clarke, and Ryan Roslansky. This will enable us to focus our brand strategy, our product roadmap, our models and our core infrastructure as one to deliver the best experiences possible for all our users. Thank you to the team for everything you’ve done over the last few years. I know how hard everyone has been pushing to help the company adapt to this new era. We really do have an incredible opportunity to redefine Microsoft for this agentic revolution. Let’s keep driving hard in this next chapter! blogs.microsoft.com/blog/2026/03/1…

A statement on the comments from Secretary of War Pete Hegseth. anthropic.com/news/statement…

@CernBasher As the number of bits drops, the difference between floating point and integer decreases until they are the same thing at 1 bit. “Floating point” is not real. It is emulated with 2 integers and a lot of complexity.
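
One way to make the "2 integers" remark concrete is the short sketch below. It assumes the IEEE 754 binary32 layout, which the post itself does not name, and the helper names `float32_fields` and `rebuild_normal` are made up for illustration. It splits a float into its integer fields and rebuilds the value from them.

```python
import struct


def float32_fields(x: float) -> tuple[int, int, int]:
    """Split a value, stored as IEEE 754 binary32, into integer fields.

    Returns (sign, biased_exponent, mantissa). Assumes x is exactly
    representable in binary32; otherwise it is rounded when packed.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    sign = bits >> 31                # 1 bit
    exponent = (bits >> 23) & 0xFF   # 8 bits, biased by 127
    mantissa = bits & 0x7FFFFF       # 23 bits, implicit leading 1 not stored
    return sign, exponent, mantissa


def rebuild_normal(sign: int, exponent: int, mantissa: int) -> float:
    """Rebuild a normal binary32 value (not zero, subnormal, inf, or NaN)."""
    return (-1.0) ** sign * (1 + mantissa / 2**23) * 2.0 ** (exponent - 127)


if __name__ == "__main__":
    fields = float32_fields(-2.5)
    print(fields)
    print(rebuild_normal(*fields))
```

Apart from the sign bit, the value is carried by two integers, an exponent and a mantissa. As the format gets narrower, fewer bits are left to divide between them.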

I’ve heard this complaint from a couple of people recently, and I’m surprised because we optimized the launch path like a year ago and got it down to ~10us. There’s a now-closed GitHub issue I filed with a microbenchmark - someone should run it, profile, and bring it down

why is triton’s kernel launch cpu overhead so freaking high? the actual kernel takes 10x less execution time than to launch it and i can’t use cuda graphs because the shapes are dynamic.

Spent the last couple of days porting my program verification class from Dafny to Lean via Loom/Velvet, and it just works! Whenever the SMT solver can’t fully prove a program correct, Lean’s aesop and grind take care of the remaining goals.

Today Thinking Machines Lab is launching our research blog, Connectionism. Our first blog post is “Defeating Nondeterminism in LLM Inference”. We believe that science is better when shared. Connectionism will cover topics as varied as our research is: from kernel numerics to prompt engineering. Here we share what we are working on and connect with the research community frequently and openly. The name Connectionism is a throwback to an earlier era of AI; it was the name of the subfield in the 1980s that studied neural networks and their similarity to biological brains. thinkingmachines.ai/blog/defeating…

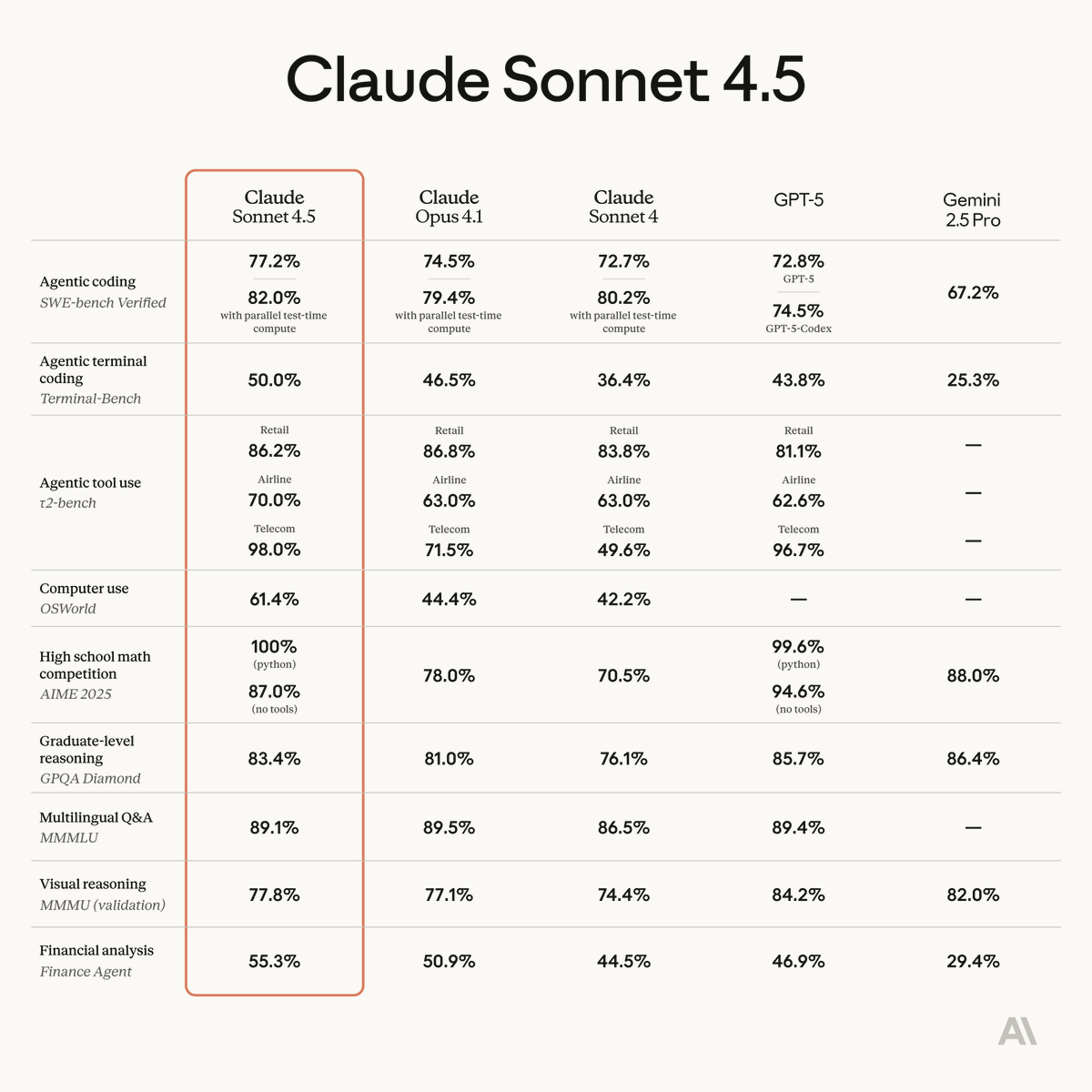

Introducing Claude Sonnet 4.5—the best coding model in the world. It's the strongest model for building complex agents. It's the best model at using computers. And it shows substantial gains on tests of reasoning and math.

Just one more DSL bro. I promise bro just one more DSL and we'll fix hardware adoption. It's just a better DSL bro. Please just one more. One more DSL and we'll port all the kernels. I just need one more DSL