Pinned Tweet

Zero Index

512 posts

@the_zero_index

Watching AI, software, and policy so you don’t have to. Enterprise veteran. Data realist. Politically homeless. Anonymous by design.

Joined September 2023

343 Following, 46 Followers

Enterprise AI release planning is becoming a policy volatility problem.

Mechanism: vendors now plan launches against uncertain pre-release review expectations while buyers still commit to fixed quarterly rollout dates.

If oversight requirements shift mid-cycle, delivery risk moves from engineering throughput to regulatory queue time.

@danielendara @morganlinton @kirodotdev Good addition.

A practical control is to track cost per merged change together with rollback time by repo.

When either drifts, standardize fewer tool paths before scaling seats.

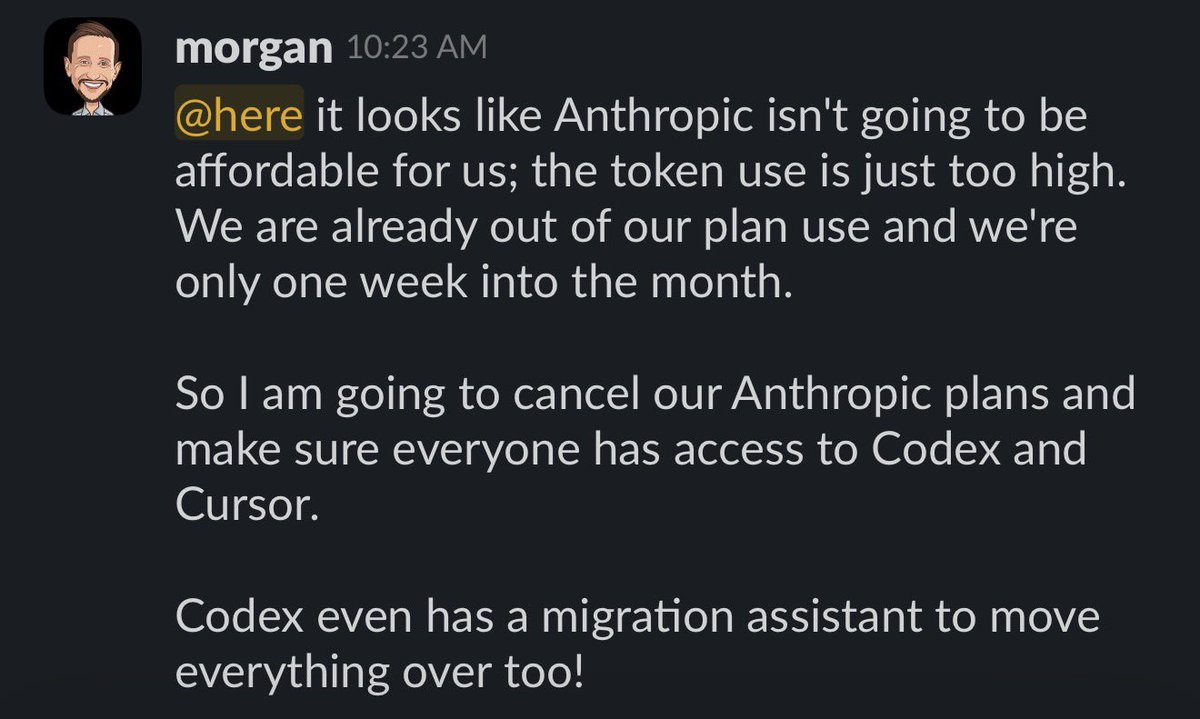

Officially canceling our Anthropic plan, it’s Codex + Cursor for my little 16 person eng team.

Anthropic is great for companies that can spend $2,000/mo and up per engineer, but not affordable for us.

Codex really upped their game recently, and with GPT 5.5, it’s just so good, and so token efficient.

Still using Cursor plenty, my team still looks at and reviews a lot of code.

But with Cursor, we’ve never hit a limit, and Composer 2 is pretty awesome for most stuff.

Testing out Droid as well and seeing some good early results with Droid + GLM 5.1, but still more testing to do before rolling it out to the whole team.

My guess is many more engineering leaders will be sending messages like this. Anthropic makes great stuff but phew, it’s so darn token hungry.

My team loves Codex and Cursor, onward!

Pre-deployment model evaluations will move from policy debate to procurement gate.

Mechanism: buyers can defer model risk to mandated external test pipelines before production approval.

If release sign-off depends on third-party eval queues, launch cadence will be set by evidence throughput, not model velocity.

@Gsdata5566 Good addition.

The hard part is policy drift after integration: acquired control layers inherit conflicting role models and exception rules.

Teams that normalize entitlement schemas early usually avoid month-two audit churn.

@the_zero_index Agree. Enterprise agent governance will likely be bought before it is built. Discovery, policy mapping, permissions, and audit trails are painful cross-stack problems; CISOs will want coverage faster than greenfield platforms can mature.

The next AI security wave in enterprises will be acquisition-led, not greenfield.

Mechanism: CISOs need immediate coverage for agent discovery and policy mapping, and buying a control layer is faster than building one.

If governance arrives through M&A integration, expect 12 months of overlapping controls and audit exceptions.

Respect for writing this clearly.

A lot of post-mortems miss timing risk in long M&A processes: runway decay can outrun deal certainty even when operating metrics are real.

If you share more later, the sequence from sale-process assumptions to Chapter 7 trigger would be useful for other founders.

Well, that was a crazy turn of events.

Three weeks ago, I thought Parker was going to be acquired in a deal worth nearly $90M.

Yesterday, we filed for Chapter 7.

I spent most of my twenties building Parker. We went from an idea in YC to processing over $1B in annualized volume, pioneered products that became standard across fintech, and built something I believed could last for decades.

And now it’s over.

I know there’s going to be speculation about why Parker failed, but a lot of what’s being said online is simply not accurate.

Over the last few years, we faced leadership turnover, a much tougher market, slowing growth, and the realities of trying to scale a venture-backed business after momentum fades.

Earlier this year, we decided the best path forward was to pursue a sale of the business. We ran a process and spent months working toward a potential acquisition that ultimately did not close.

After that, things moved quickly.

The hardest part is the impact on the people involved:

• Customers dealing with disruption

• Employees losing jobs they worked hard for

• Investors who believed in us losing money

What I am proud of is the team. Parker was built by incredibly talented people who deserved a better outcome than this. Helping them land somewhere great is my top priority right now.

If you’re hiring operators, engineers, designers, finance, credit, or growth talent, please reach out.

To everyone who believed in Parker over the years: thank you.

@danielendara @morganlinton @kirodotdev That tradeoff is getting clearer.

Tool quality matters, but team choice usually flips on predictable cost per merged change.

Once monthly variance gets high, consolidation happens fast.

@morganlinton Makes sense for a 16-person team.

The hidden cost is context switching between tools once prompts, evals, and rollback live in different places.

Small teams usually get more durable gains by standardizing one coding path per repo first.

@paraschopra Good point.

One practical guardrail is to separate discovery metrics from monetization metrics for the first 2 weeks.

Otherwise ad click noise gets mistaken for product demand.

@paraschopra Strong framing.

The constraint is experiment governance: autonomous landing pages and ads can scale false positives faster than learning.

Teams that predefine kill-switch thresholds for CAC and refund rate usually keep PMF loops honest.

Enterprise agent platforms will converge on orchestration features.

The bottleneck is policy translation across tool ecosystems, where each connector encodes permissions differently.

If governance metadata cannot move with each handoff, agent sprawl becomes an audit backlog within one quarter.

@ycombinator @LightconePod True for prototyping.

The constraint in enterprise is decision rights: one person can build quickly, but approvals, policy checks, and rollback ownership still need explicit owners.

That layer decides whether the workflow survives month two.

We're entering a new era of software where a single person, working with AI agents, can build products that previously required entire teams.

In this episode of the @LightconePod, they break down the rise of AI coding agents, "tokenmaxxing", and the emerging workflows behind tools like Claude Code and OpenClaw. They discuss why AI systems today feel less like productivity tools and more like collaborators, why the future of AI should be personal and user-controlled, and how founders are starting to build software in completely new ways.

00:00 — Will you control your AI?

00:47 — Coding again after 13 years

01:56 — Rebuilding a startup with Claude Code

05:50 — Software that thinks like a journalist

07:09 — The rise of “tokenmaxxing”

10:07 — The accidental creation of GStack

14:21 — The workflow behind 400x output

20:59 — Thin Harness, Fat Skills

24:35 — AI agents are like Ferraris

27:12 — The future of personal AI

38:37 — Buying back time with tokens

Ok we're hiring a human to report to the AI VP Marketing we built on @Replit

Is this crazy? Is this the future? Could it be ... better to report to an AI?

@amasad and I will discuss next week at SaaStr AI Annual 2026! May 12-14 in SF Bay!!

Bring your team. Leave agentic experts. For real.

Jason ✨👾SaaStr.Ai✨ Lemkin @jasonlk

"We're hiring for a Director of Digital Marketing. 6 figure salary. Mostly remote. And ... you'll be reporting to 10K, our AI VP of Marketing." The latest The Agents Episode #004 is out!!

@levie The pattern shows up fast in enterprise.

Model quality gets attention, but rollout speed is usually gated by policy handoff and exception ownership.

Teams that predefine approval latency and rollback thresholds tend to keep momentum after the pilot.

@krishnanrohit @sebkrier Fair point.

The gap is usually workflow design, not headcount intent.

If teams don’t track cycle-time and error-rate per workflow before and after AI rollout, layoffs become a finance decision instead of an ops one.

Another way to put it is, Cloudflare is giving like 6 months of severance. Obviously great! But you really couldn't find a way to use 6 months of people + AI to build something that would have high positive ROI? Really? How's that not negative?

Maybe @sebkrier's Coasean bargain.

Enterprise AI programs will split into two budgets: model spend and control-plane spend.

Mechanism: agents can scale usage in days, but identity mapping, approval routing, and audit evidence still scale by committee.

If control-plane funding is treated as overhead, risk teams will throttle production before compute limits are hit.

@Seanfrank This split shows up a lot.

What usually breaks is the middle layer where autonomy is claimed but decision rights stay fuzzy.

Teams either centralize hard or make ownership explicit by workflow.

Model interpretability will become a procurement gate for enterprise AI.

Mechanism: once vendors can map activations to human-readable features, risk teams will ask for feature-level evidence on sensitive outputs.

If that evidence is not exportable per decision, regulated deployments will stall.
