bnomial retweetledi

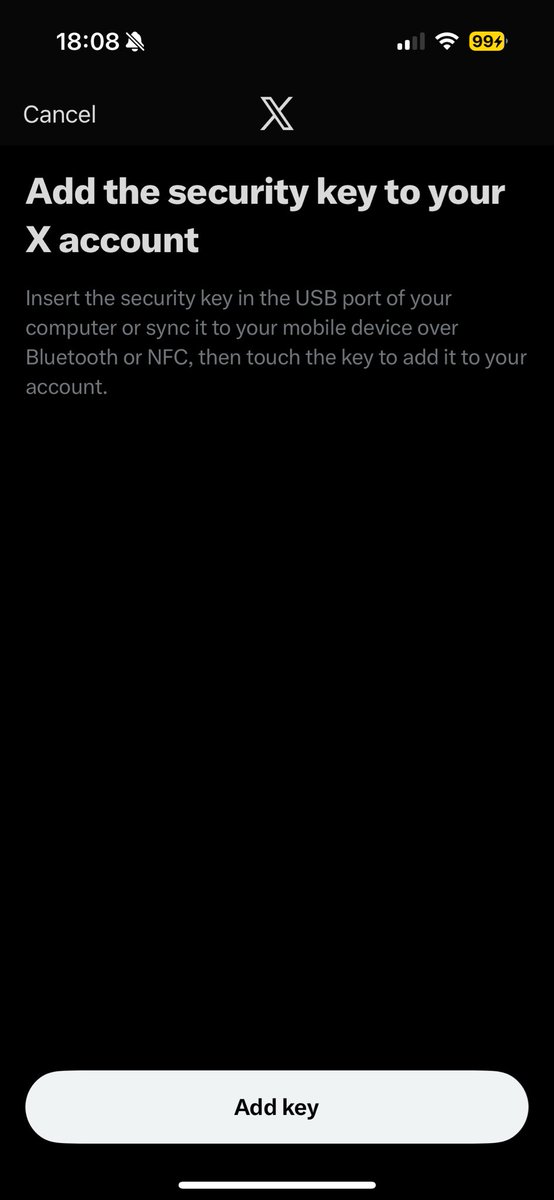

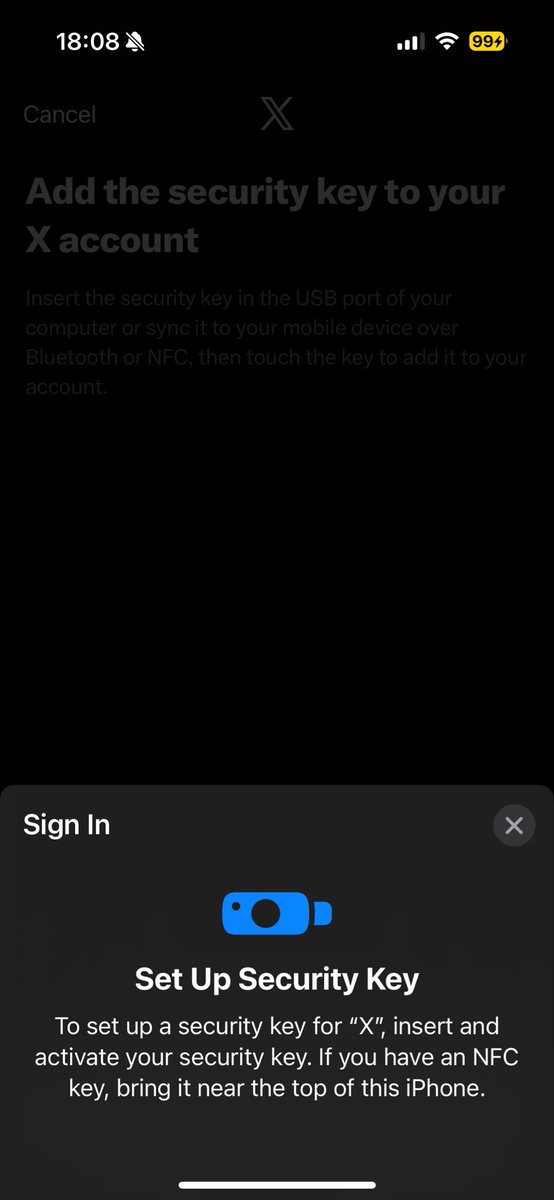

If anyone knows someone at Twitter/X who can help with this, my alt account (@aptlyamphoteric) is unusable, it keeps putting me in this "You must re-enroll your yubikey" flow and no matter how many times I follow the steps, I cannot gain access to the account again.

English