Chief Yeti

1.1K posts

@0xchiefyeti

Chief Yeti @knightwavegg | Blockchain Gaming Innovatooor | Bringing the action onchain

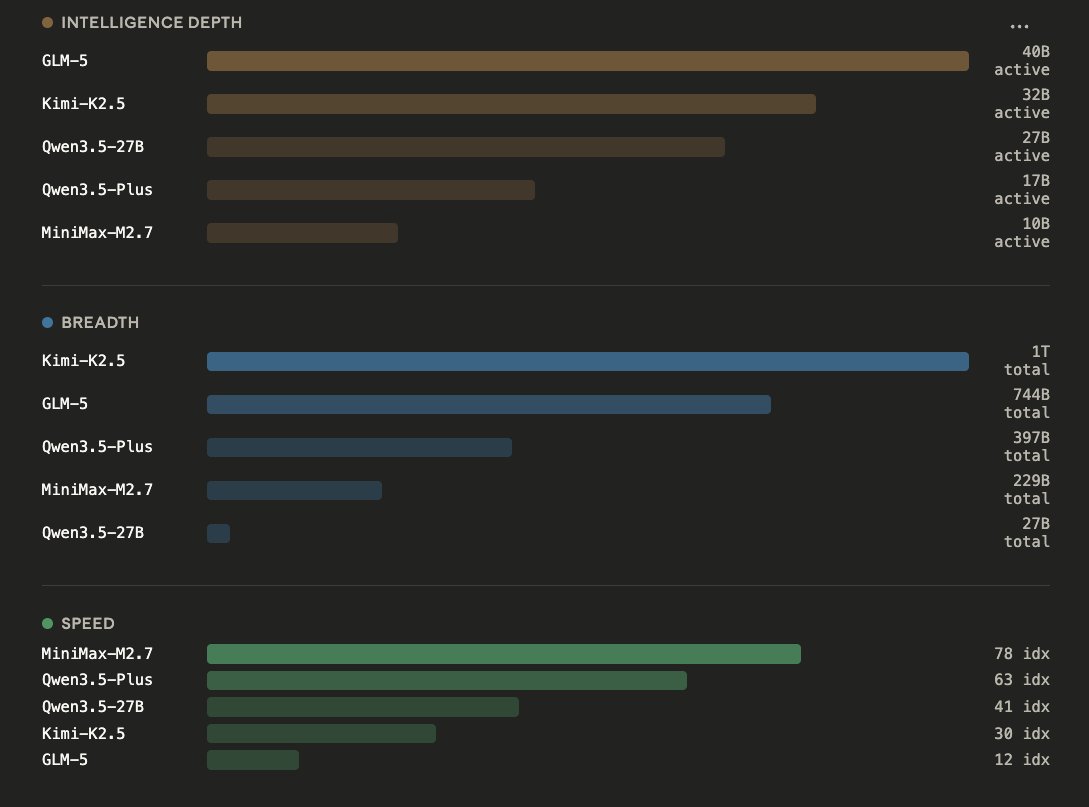

People are not lying when they say Qwen3.5-27B is incredibly capable.

1. Bubble size = total params: world knowledge, languages, skills
2. X axis = active params: raw intelligence per token
3. Y axis = tokens/s: speed of prefill and generation (decode)

- GLM-5 | 744B params | 40B active
- Kimi-K2.5 | 1T params | 32B active
- Qwen3.5-27B | 27B active params
- Qwen3.5-Plus | 397B params | 17B active
- MiniMax-M2.7 | 229B params | 10B active

MoEs can store much more world knowledge and a greater breadth of information. With a Mixture-of-Experts model you can scale up to 1T params, so you can give it 20 trillion tokens or more of training data and it learns more. But at runtime, only a small portion of those params gets activated.

Taking MiniMax-M2.5 as an example: only 10B params are active at a time, so in use you get speed and per-token intelligence closer to nemotron-8B. MiniMax-M2.5 simply knows much more, and thus performs better.
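To make the total-vs-active distinction concrete, here is a minimal Python sketch that computes what share of each model's parameters is active per token, using only the figures quoted in the post. The `Model` class and `summarize` helper are hypothetical names for illustration, and treating Qwen3.5-27B as dense (total = active = 27B) is an assumption, since the post lists only its active count.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    total_b: float   # total parameters, in billions (bubble size)
    active_b: float  # parameters active per token, in billions (X axis)


# Figures as quoted in the post. Qwen3.5-27B is ASSUMED to be dense,
# so its total is set equal to its active count.
MODELS = [
    Model("GLM-5", 744, 40),
    Model("Kimi-K2.5", 1000, 32),
    Model("Qwen3.5-27B", 27, 27),
    Model("Qwen3.5-Plus", 397, 17),
    Model("MiniMax-M2.7", 229, 10),
]


def summarize(models):
    """Return (name, percent of params active), sorted by active params, largest first."""
    ordered = sorted(models, key=lambda m: m.active_b, reverse=True)
    return [(m.name, round(100 * m.active_b / m.total_b, 1)) for m in ordered]


if __name__ == "__main__":
    for name, pct in summarize(MODELS):
        print(f"{name:<14} {pct:>5.1f}% of params active per token")
```

Under these numbers every MoE in the list activates only a few percent of its weights per token, which is the post's point: the bubble (total params) can grow a lot while the per-token compute stays close to that of a much smaller dense model.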

I’m interested in redoing the post-training for Qwen3.5:27b/9b specifically for the hermes-agent harness by @NousResearch. If anyone is willing to let me use an RTX PRO 6000 or something with more VRAM (even AMD), I would be happy to set this up for the community and share my results on Hugging Face. We need improved agentic intelligence on 16 GB and 24 GB systems.

Local LLM Day 1: Pointed Karpathy's autoresearch framework at optimizing tok/sec.

Experiment 1: 14 -> 58 tok/sec.

Experiment 2: Laptop dies. If I'm lucky it just overheated, but we weren't that hot yet... and I'm not lucky. Getting a second mortgage so I can afford some RAM, I guess...

Local LLM Day 1: ~14 tok/sec with Qwen3.5-9B-Q4_K_M.gguf @ 32k context w/ llama.cpp on a 3070. Great for async/overnight tasks, not ready for primetime.