Mohit

542 posts

@0xhashqueu

Building bitty-ai || 0.5x engineer, I use Neovim x Arch BTW. Tweeting till Andreessen Horowitz follows me https://t.co/vZsQiBKyju

Joined September 2024

344 Following · 31 Followers

@nengjiali this looks amazing at first glance, can you create a blog post / video for the same? would be amazing to get everything in one place

I spent 4 brutal months building a full Stereo Visual SLAM system from scratch in C++17 + CUDA.

NO pre-made libraries. NO black-box magic.

I just wanted to understand the underlying mechanics behind SLAM.

Here’s the intuitive breakdown

(the full SLAM pipeline, the math that almost broke me, and the real KITTI footage)👇

cc @aelluswamy your work in this space is a massive inspiration for tackling this from absolute scratch

@pupposandro and what about unsloth? didn't those guys also have better kernels?

@UnslothAI

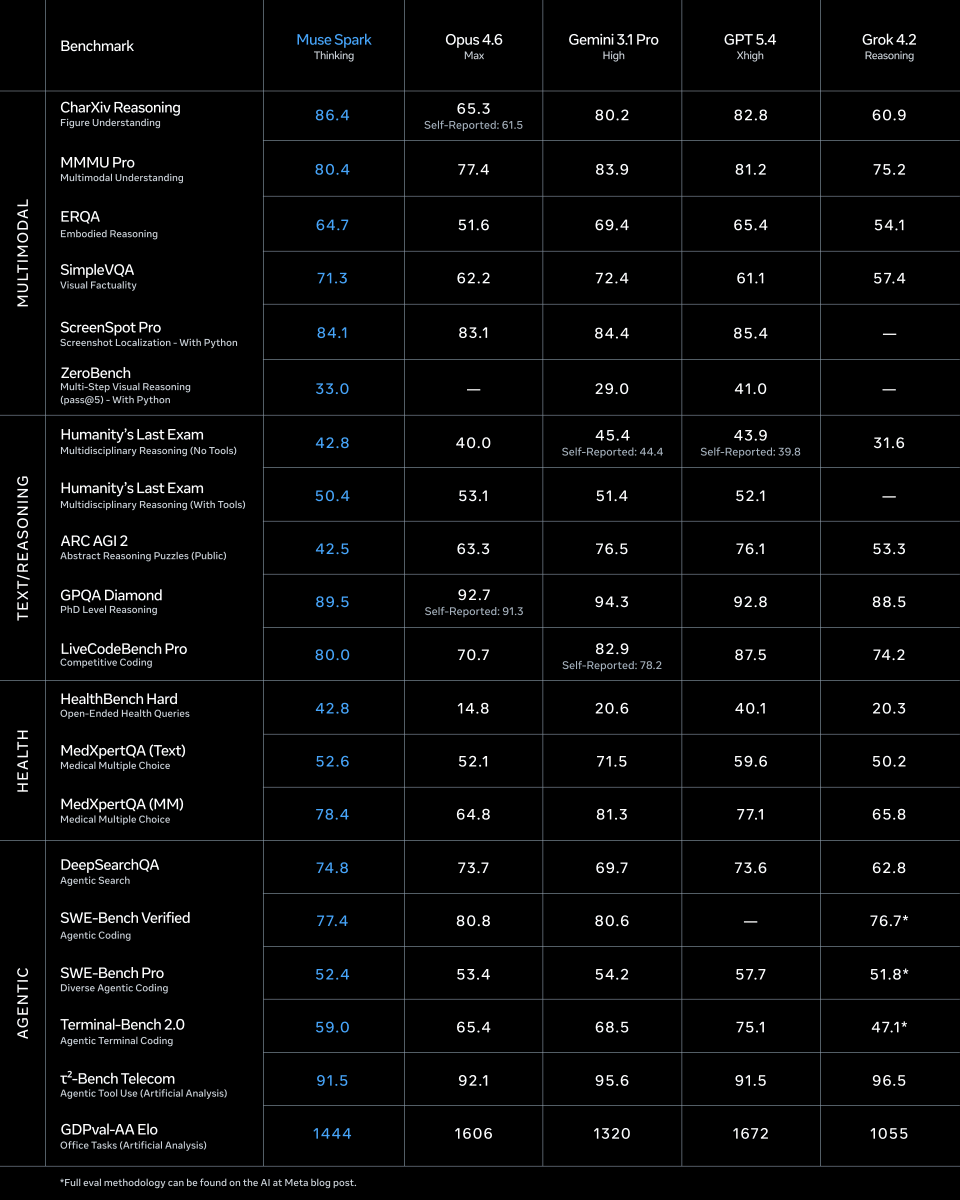

@alexandr_wang hm still gpt 5.4 xhigh looks good .. not sure on pricing though

@pupposandro so tensorrt also doesn't have a fused kernel for these deltanet + full attention layers?

@MarioNawfal now the real delta is in making cheap interceptors, one main interceptor guiding ultra cheap small ones

🇮🇳 India just unveiled an AI-powered kamikaze drone with a 2,000km range and 12-hour endurance.

Every major power is now racing to mass-produce cheap one-way attack drones, the weapon that's rewriting the rules of modern war.

Expensive interceptor missiles vs. cheap drones. The math isn't pretty.

Source: Zero Hedge

Mario Nawfal @MarioNawfal

🇮🇷🇺🇸 Iran's Minister of Defense just told the IRGC to monitor “enemy movements with utmost accuracy” to counter their plans, as they prepare to defend against a potentially imminent U.S. ground attack. Source: Walter Bloomberg

I implemented @GoogleResearch's TurboQuant as a CUDA-native compression engine on Blackwell B200.

5x KV cache compression on Qwen 2.5-1.5B, near-lossless attention scores, generating live from compressed memory.

5 custom cuTile CUDA kernels ft:

- fused attention (with QJL corrections)

- online softmax

- on-chip cache decompression

- pipelined TMA loads

Try it out: devtechjr.github.io/turboquant_cut…

s/o @blelbach and the cuTile team at @nvidia for lending me Blackwell GPU access :)

cc @sundeep @GavinSherry

Omni 3.5 is closed source .. really @Alibaba_Qwen

maybe that's why the founder left ..

when you realize you could have cloned any influencer in seconds.

but you wasted hours doing everything manually.

ViralOps @ViralOps_
