Bryce Adelstein Lelbach

12K posts

@blelbach

Principal Engineer at @NVIDIA working on programming languages. @adspthepodcast co-host. C++ Library Evolution chair emeritus. Frequent flyer. Horology fan.

My talk proposal was rejected.

My latest: Nvidia GTC Day One - Groq Will Be a Grand Slam Homerun taekim.substack.com/p/nvidia-gtc-d…

Anyone else impressed nvidia got the install script on the main domain, root path? Imagine the meeting of lawyers, security and infra

@marksaroufim @GPU_MODE @NVIDIAGTC Award ceremony for the nvfp4 kernels. Come hang out at GuildHouse!

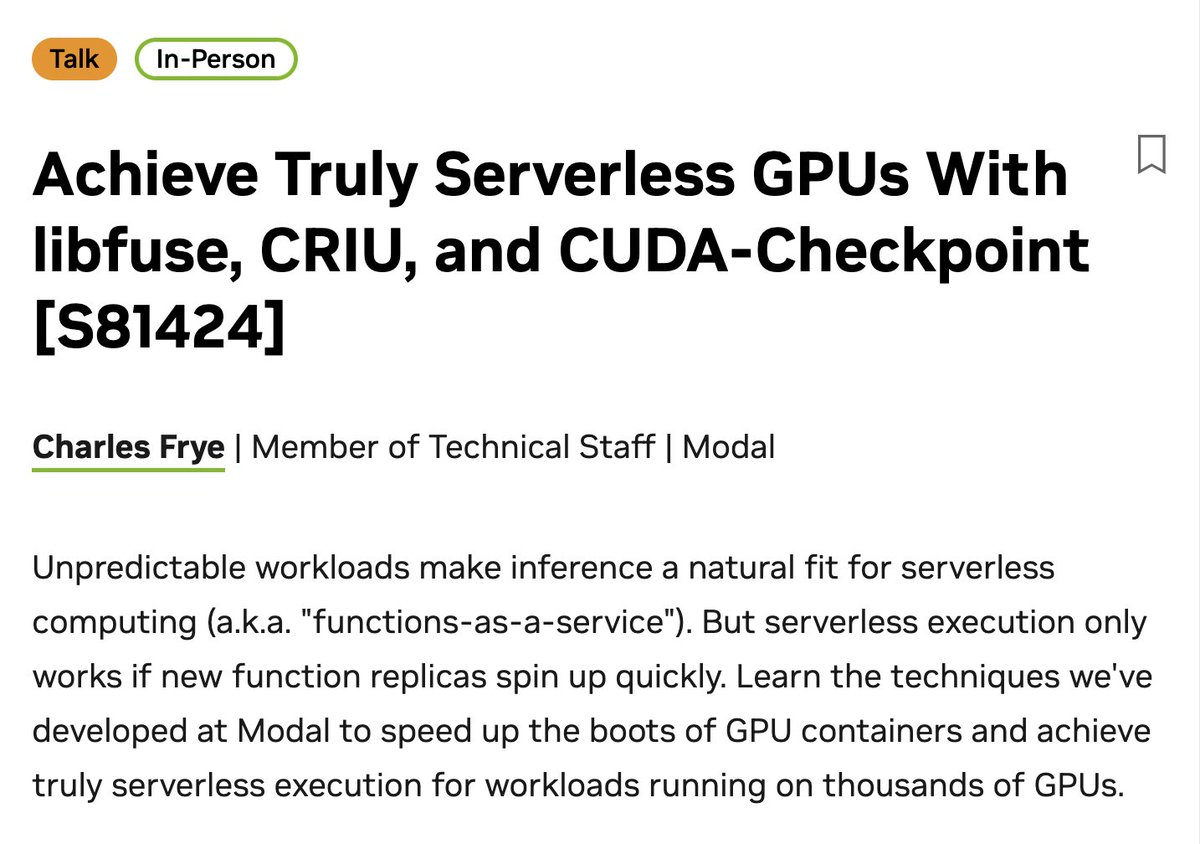

getting pretty hyped for my talk at GTC! come thru tuesday at 9am to hear how we speed up inference server starts from half an hour to half a minute -- featuring cloud capacity buffers, custom filesystems, and cuda-checkpoint nvidia.com/gtc/session-ca…

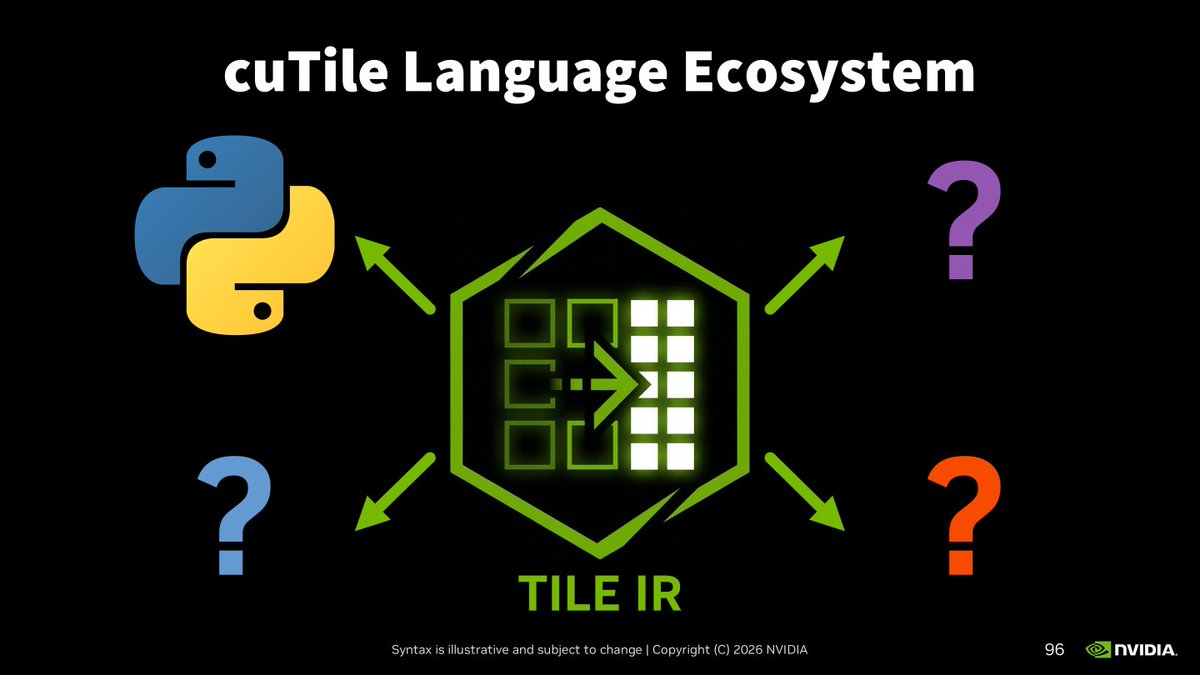

cuTile Rust: a safe, tile-based kernel programming DSL for the Rust programming language github.com/NVlabs/cutile-… features a safe host-side API for passing tensors to asynchronously executed kernel functions

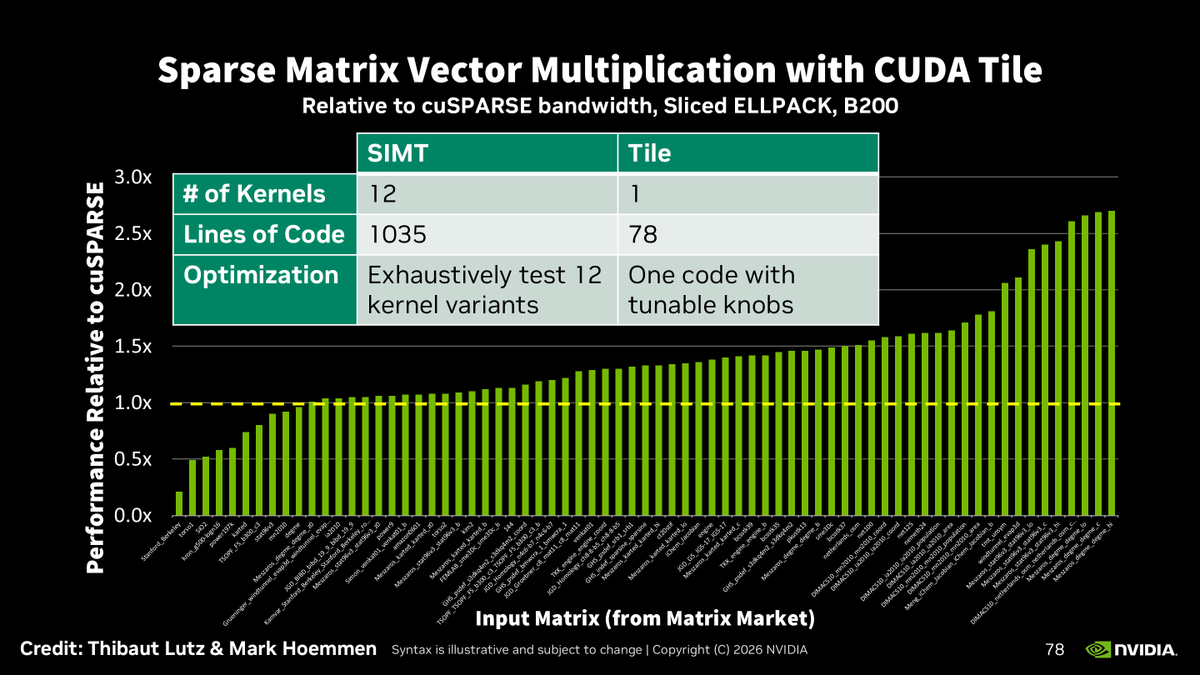

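Just noticed that the VectorWare team announced, in a technical blog post published in February 2026, that they have successfully run Rust's async/await on the GPU. vectorware.com/blog/async-awa…

This addresses the concurrency dilemma of traditional GPU programming. Traditional GPU programming is data-parallel: all threads perform the same operation on different data. But as GPU programs grow more complex, developers have started using warp specialization, where different warps run different tasks (for example, one warp loads data while another computes). This is essentially a shift from data parallelism to task parallelism.

The problem: this concurrency and synchronization is managed entirely by hand, with no support at the language or runtime level. Like hand-written thread synchronization on the CPU, it is error-prone and hard to reason about.

The blog post surveys three existing high-level abstractions:

JAX models a GPU program as a computation graph; the compiler analyzes the dependencies in the graph to decide execution order and parallelization strategy.

Triton uses the "block" as an independent unit of computation and manages concurrency through a multi-level MLIR compilation pipeline.

CUDA Tile introduces the "tile" as a first-class unit of data, making data dependencies explicit.

But these approaches share the same drawbacks. They all require developers to organize code in an entirely new way, with new programming paradigms and ecosystems, which is a significant barrier to adoption. Code reuse is also difficult: existing CPU libraries and GPU libraries cannot be composed directly with these frameworks.

The post's core argument is that Rust's Future trait happens to satisfy every property they want:

1. Futures are deferred, composable values. As with JAX's computation graph, you first build a "description" of the program and then execute it. The compiler can analyze dependencies before execution.

2. Futures naturally express independent units of concurrency. As with Triton's blocks, multiple futures can run serially (.await chains) or in parallel (join!, combinators).

3. Rust's ownership system makes data dependencies explicit. As with CUDA Tile's explicit tiles, futures encode the flow of data by capturing it, while trait bounds such as Send/Sync/Pin constrain how data is shared and passed between units of concurrency.

4. Most important of all: warp specialization is essentially a hand-written task state machine, and Rust futures compile to state machines that the compiler generates and manages automatically. Since a future is just a state machine, there is no reason it cannot run on a GPU.

They ported Embassy, a no_std async executor designed for embedded systems. A GPU has no operating system and does not support the Rust standard library, which closely resembles an embedded environment, so Embassy is a natural choice. Adapting Embassy to the GPU required only minor modifications, and this ability to reuse existing open-source libraries is far better than in other, non-Rust GPU ecosystems.

On the surface the post is about async/await, but what it is really talking about is something bigger: using Rust's type system and zero-cost abstractions to unify the CPU and GPU programming models. A future does not care where it runs: thread, core, block, or warp all work. The same async code can run on both CPU and GPU without changing a single line. This is fundamentally different from the JAX/Triton approach of "write a whole new thing for the GPU"; it is a bottom-up unification at the language level.

VectorWare previously published a post about enabling Rust std on the GPU. Together with this async/await work, their goal is clear: make GPU programming "ordinary Rust programming" rather than a special domain that demands an entirely new mental model.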
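To make point 4 concrete, here is a minimal CPU-only sketch of the "a future is just a state machine" claim. It is not VectorWare's or Embassy's code, and it uses std for brevity. Every name in it (`YieldOnce`, `load`, `load_then_compute`, `Manual`, `block_on`) is hypothetical and made up for illustration, and a simple busy-polling loop stands in for a real executor. It pairs an `async fn` with a hand-written enum that does the same job, roughly the way a hand-rolled warp-specialized task would.

```rust
use std::future::Future;
use std::pin::Pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

// Hypothetical leaf future: reports Pending once, then yields its value.
// It stands in for an operation that is not ready on first poll (e.g. a load).
struct YieldOnce {
    value: u32,
    yielded: bool,
}

impl Future for YieldOnce {
    type Output = u32;
    fn poll(mut self: Pin<&mut Self>, _cx: &mut Context<'_>) -> Poll<u32> {
        if self.yielded {
            Poll::Ready(self.value)
        } else {
            self.yielded = true;
            Poll::Pending
        }
    }
}

fn load(value: u32) -> YieldOnce {
    YieldOnce { value, yielded: false }
}

// Version 1: the compiler generates the state machine from the .await points.
async fn load_then_compute(x: u32) -> u32 {
    let a = load(x).await;
    let b = load(a + 1).await;
    a * b
}

// Version 2: the same logic as a hand-written state machine.
enum Manual {
    LoadA(YieldOnce),
    LoadB { a: u32, fut: YieldOnce },
    Done,
}

impl Future for Manual {
    type Output = u32;
    fn poll(self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<u32> {
        // Manual is Unpin (all fields are), so a plain &mut is available.
        let this = self.get_mut();
        loop {
            let next = match &mut *this {
                Manual::LoadA(fut) => match Pin::new(fut).poll(cx) {
                    Poll::Ready(a) => Manual::LoadB { a, fut: load(a + 1) },
                    Poll::Pending => return Poll::Pending,
                },
                Manual::LoadB { a, fut } => match Pin::new(fut).poll(cx) {
                    Poll::Ready(b) => {
                        let out = *a * b;
                        *this = Manual::Done;
                        return Poll::Ready(out);
                    }
                    Poll::Pending => return Poll::Pending,
                },
                Manual::Done => panic!("polled after completion"),
            };
            *this = next; // advance to the next state and poll it right away
        }
    }
}

// Waker that does nothing; enough for an executor that simply re-polls.
struct NoopWake;

impl Wake for NoopWake {
    fn wake(self: Arc<Self>) {}
}

// Hypothetical busy-polling executor (an assumption, not Embassy's design).
fn block_on<F: Future>(fut: F) -> F::Output {
    let waker = Waker::from(Arc::new(NoopWake));
    let mut cx = Context::from_waker(&waker);
    let mut fut = Box::pin(fut);
    loop {
        if let Poll::Ready(out) = fut.as_mut().poll(&mut cx) {
            return out;
        }
    }
}
```

Calling `block_on(load_then_compute(3))` and `block_on(Manual::LoadA(load(3)))` both give 12. The only difference is who wrote the state transitions: the compiler in the first case, the programmer in the second.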