rasit

1.6K posts

rasit retweeted

Another DeepSeek moment. This is the world’s first actual smart phone. It’s an engineering prototype of ZTE’s Nubia M153 running ByteDance’s Doubao AI agent fused into Android at the OS level. It has complete control over the phone. It can see the UI, choose/download apps, tap/type, call, and run multi-step task chains.

Here I just say (in English) “find someone to wait in line for me” (something you can do in China), and it picks which app to open, configures the job, and hands me one confirm screen. I wouldn’t otherwise know how to do this, and here the phone just did it in a matter of seconds.

rasit retweeted

Good Products are Opinionated.

“Every great founder I’ve seen up close, or even from afar, is highly opinionated and they’re almost dictatorial in how they run things.

Also, early-stage teams are opinionated. And the products they build are opinionated. Opinionated means they have a strong vision for what it should and should not do.

If you don’t have a strong vision of what it should and should not do, then you end up with a giant mess of competing features.

@Jack Dorsey has a great phrase: “Limit the number of details and make every detail perfect.” And that’s especially important in consumer products. You have to be extremely opinionated. All the best products in consumer-land get there through simplicity.

You could argue the recent success of ChatGPT and similar AI chatbots is because they’re even simpler than Google.

Google looked like the simplest product you could possibly build. It was just a box. But even that box had limitations in what you could do.

You were trained not to talk to it conversationally. You would enter keywords and you had to be careful with those keywords. You couldn’t just ask a question outright and get a sensible answer. It wouldn’t do proper synonym matching, and then it would spit you back a whole bunch of results. That was complicated. You’d have to sift through and figure out which ones were ads, which ones were real, were they sorted correctly, and then you’d have to click through and read it.

ChatGPT and the chatbot simplified that even further. You just talk to it like a human: use your voice or type, and it gives you back a straight answer.

It might not always be right, but it’s good enough, and it gives you back a straight answer in text or voice or images or whatever you prefer.

So it simplifies what we looked at as the simplest product on the Internet, which was formerly Google, and makes it even simpler. And you just cannot make a product that’s simple enough.

To be simple, you have to be extremely opinionated. You have to remove everything that doesn’t match your opinion of what the product should be doing. You have to meticulously remove every single click, every single extra button, every single setting.

In fact, things in the settings menu are an indication that you’ve abdicated your responsibility to the user. Choices for the user are an abdication of your responsibility. Maybe for legal or important reasons, you can have a few of these, but you should struggle and resist against every single choice the user has to make.

In the age of TikTok and ChatGPT, that’s more obvious than ever. People don’t want to make choices. They don’t want the cognitive load. They want you to figure out what the right defaults are and what they should be doing and looking at, and they want you to present it to them.”

rasit retweeted

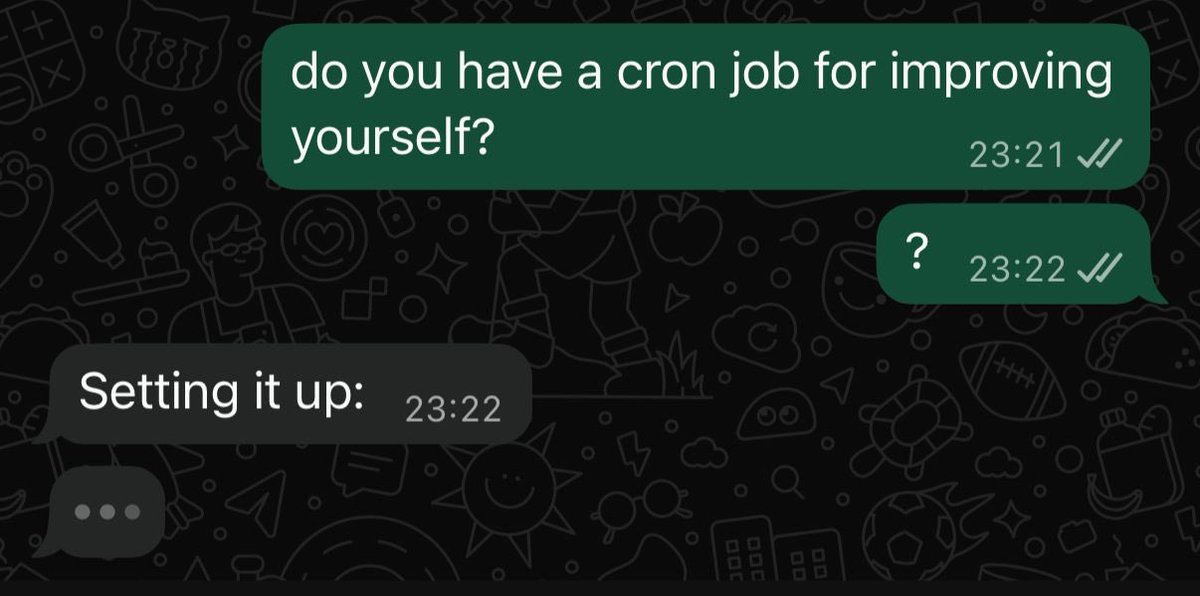

One of my favorite lessons I’ve learnt from working with smart people:

Action produces information. If you’re unsure of what to do, just do anything, even if it’s the wrong thing. This will give you information about what you should actually be doing.

Sounds simple on the surface - the hard part is making it part of your everyday working process.

rasit retweeted

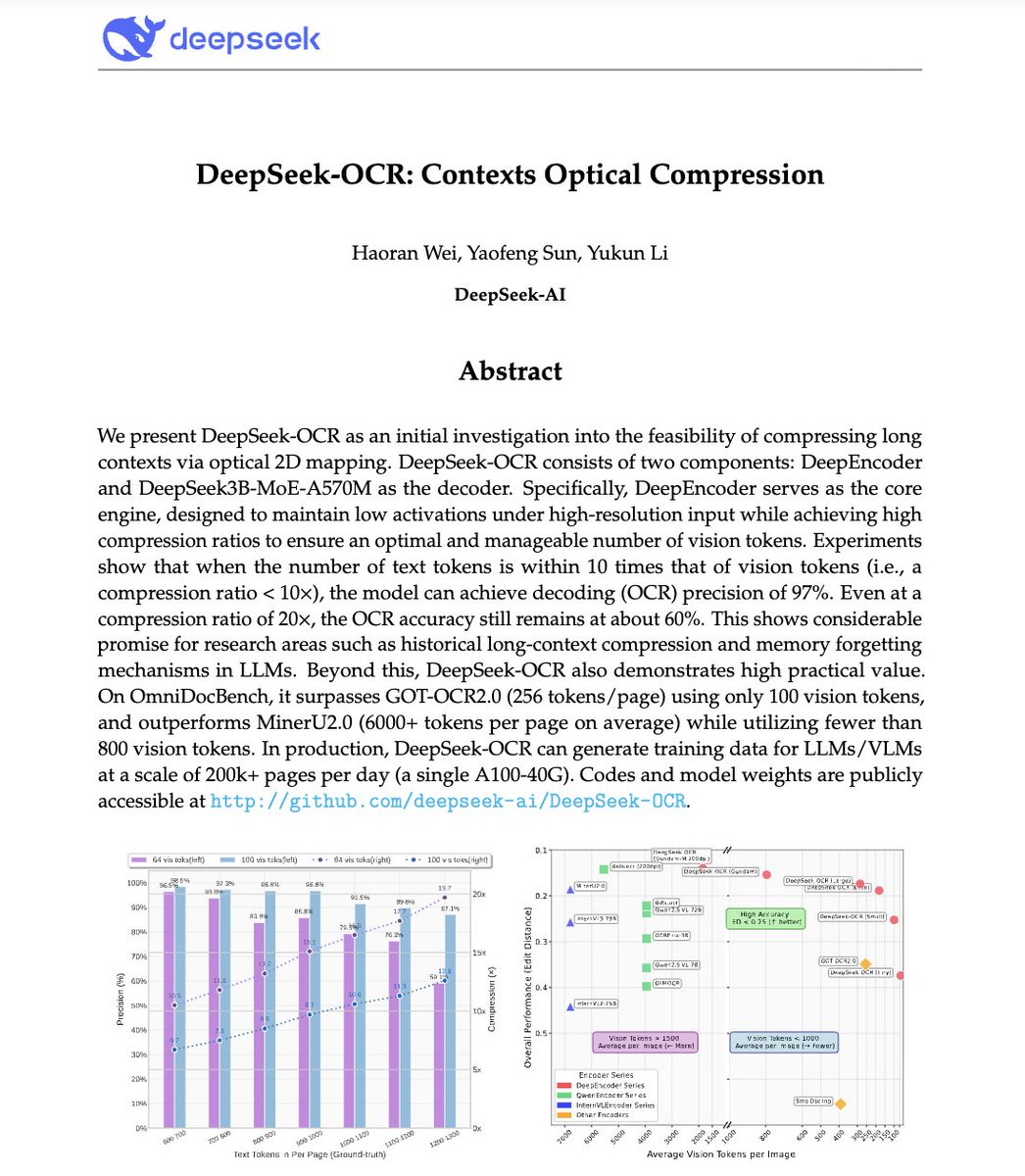

🚨 DeepSeek just did something wild.

They built an OCR system that compresses long text into vision tokens, literally turning paragraphs into pixels.

Their model, DeepSeek-OCR, achieves 97% decoding precision at 10× compression and still manages 60% accuracy even at 20×. That means one image can represent entire documents using a fraction of the tokens an LLM would need.
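
As a rough worked example of the arithmetic behind those figures, the sketch below converts a text-token count into the vision-token budget implied by a compression ratio. The 10,000-token document is a hypothetical input, `vision_tokens_needed` is an illustrative helper and not part of the DeepSeek-OCR codebase, and the precision values are simply the ones quoted in the post.

```python
import math

# Decoding precision quoted in the post, keyed by compression ratio.
QUOTED_PRECISION = {10: 0.97, 20: 0.60}


def vision_tokens_needed(text_tokens: int, compression_ratio: float) -> int:
    """Vision tokens implied by compressing `text_tokens` at the given ratio.

    Hypothetical helper for illustration only; rounds up so a partial
    token still counts as one token.
    """
    if text_tokens < 0 or compression_ratio <= 0:
        raise ValueError("text_tokens must be >= 0 and compression_ratio > 0")
    return math.ceil(text_tokens / compression_ratio)


if __name__ == "__main__":
    doc_tokens = 10_000  # hypothetical document length in text tokens
    for ratio, precision in QUOTED_PRECISION.items():
        budget = vision_tokens_needed(doc_tokens, ratio)
        print(f"{ratio}x: {budget} vision tokens, ~{precision:.0%} quoted precision")
```

Under these assumptions, a 10,000-token document would need about 1,000 vision tokens at 10× and about 500 at 20×, with the accuracy trade-off the post describes.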

Even crazier? It beats GOT-OCR2.0 and MinerU2.0 while using up to 60× fewer tokens and can process 200K+ pages/day on a single A100.

This could solve one of AI’s biggest problems: long-context inefficiency.

Instead of paying more for longer sequences, models might soon see text instead of reading it.

The future of context compression might not be textual at all.

It might be optical 👁️

github.com/deepseek-ai/DeepSeek-OCR

rasit retweeted

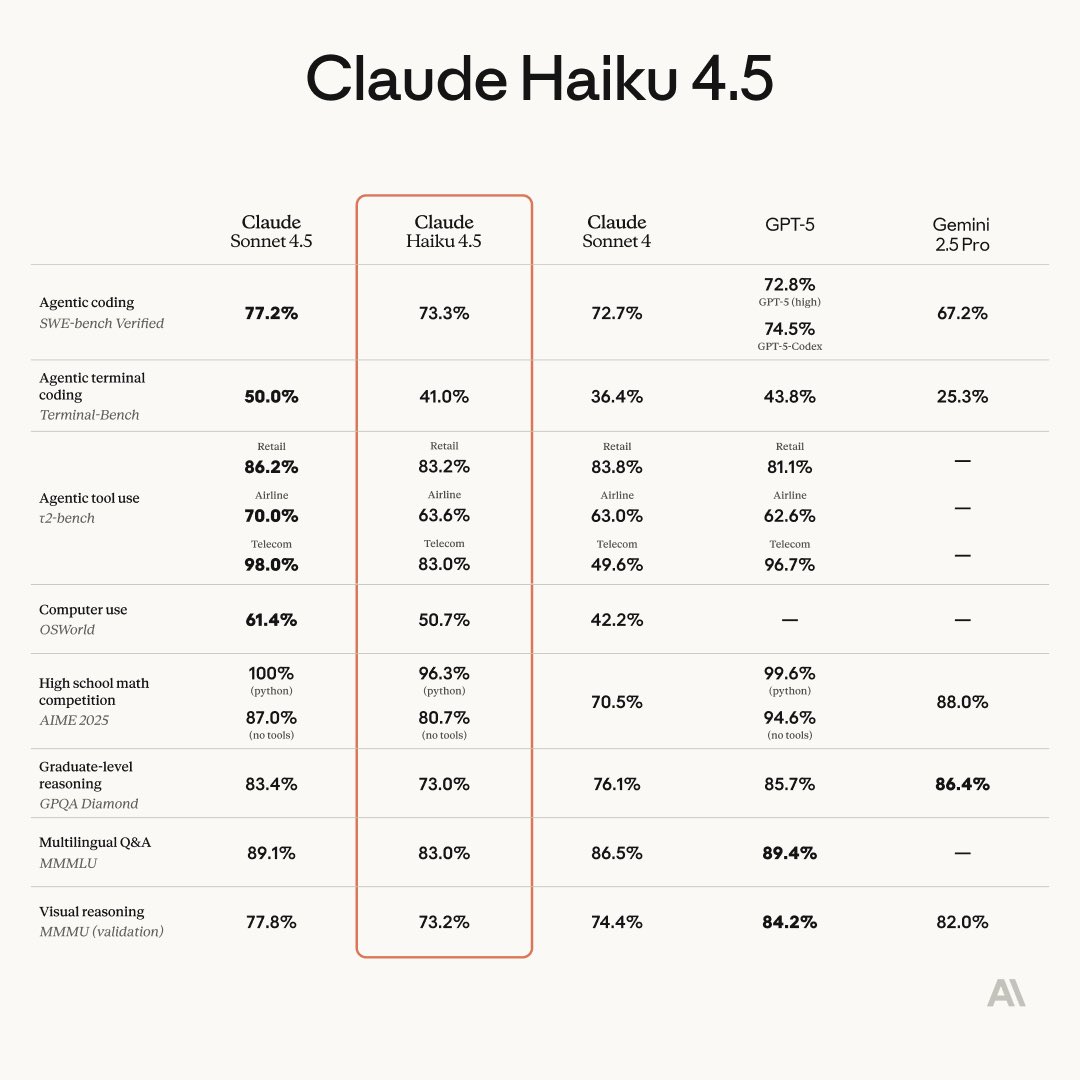

Claude Haiku 4.5 released, 1/3 price of Sonnet 4.5 and 2x the speed!

Very good evals for its price and size, on par with Sonnet 4.

Alex Albert @alexalbert__

Introducing Claude Haiku 4.5. Our latest small model that matches Sonnet 4's performance at a third of the cost and more than twice the speed.

rasit retweeted

New paper 📜: Tiny Recursion Model (TRM) is a recursive reasoning approach with a tiny 7M-parameter neural network that obtains 45% on ARC-AGI-1 and 8% on ARC-AGI-2, beating most LLMs.

Blog: alexiajm.github.io/2025/09/29/tin…

Code: github.com/SamsungSAILMon…

Paper: arxiv.org/abs/2510.04871

rasit retweeted

My brain broke when I read this paper.

A tiny 7-million-parameter model just beat DeepSeek-R1, Gemini 2.5 Pro, and o3-mini at reasoning on both ARC-AGI-1 and ARC-AGI-2.

It's called Tiny Recursive Model (TRM) from Samsung.

How can a model 10,000x smaller be smarter?

Here's how it works:

1. Draft an Initial Answer: Unlike an LLM that writes word-by-word, TRM first generates a quick, complete "draft" of the solution. Think of this as its first rough guess.

2. Create a "Scratchpad": It then creates a separate space for its internal thoughts, a latent reasoning "scratchpad." This is where the real magic happens.

3. Intensely Self-Critique: The model enters an intense inner loop. It compares its draft answer to the original problem and refines its reasoning on the scratchpad over and over (6 times in a row), asking itself, "Does my logic hold up? Where are the errors?"

4. Revise the Answer: After this focused "thinking," it uses the improved logic from its scratchpad to create a brand new, much better draft of the final answer.

5. Repeat until Confident: The entire process, draft, think, revise, is repeated up to 16 times. Each cycle pushes the model closer to a correct, logically sound solution.
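
The five steps above can be sketched as a small control loop. This is only a minimal illustration of the flow as the post describes it (6 scratchpad updates per cycle, up to 16 cycles), not the paper's implementation: the real model uses a trained network for the updates, while `toy_update_latent`, `toy_update_answer`, and `toy_is_confident` here are hypothetical stand-ins for a toy task whose correct answer is `2 * x`.

```python
from typing import Callable, List, Tuple

Vector = List[float]


def recursive_refine(
    x: Vector,
    update_latent: Callable[[Vector, Vector, Vector], Vector],
    update_answer: Callable[[Vector, Vector], Vector],
    is_confident: Callable[[Vector, Vector], bool],
    inner_steps: int = 6,   # scratchpad refinements per cycle, per the post
    max_cycles: int = 16,   # maximum draft/think/revise cycles, per the post
) -> Tuple[Vector, int]:
    """Run the draft -> think -> revise loop and return (answer, cycles used)."""
    y = [0.0] * len(x)  # step 1: initial draft answer
    z = [0.0] * len(x)  # step 2: latent scratchpad
    cycles_used = 0
    for _ in range(max_cycles):
        # Step 3: refine the scratchpad against the problem and current draft.
        for _ in range(inner_steps):
            z = update_latent(x, y, z)
        # Step 4: revise the draft using the refined scratchpad.
        y = update_answer(y, z)
        cycles_used += 1
        # Step 5: stop early once the confidence check passes.
        if is_confident(x, y):
            break
    return y, cycles_used


# Hypothetical toy stand-ins for the learned network (assumption: target is 2 * x).
def toy_update_latent(x: Vector, y: Vector, z: Vector) -> Vector:
    # Move the scratchpad halfway toward the draft's current error.
    return [zi + 0.5 * ((2.0 * xi - yi) - zi) for xi, yi, zi in zip(x, y, z)]


def toy_update_answer(y: Vector, z: Vector) -> Vector:
    # Apply the scratchpad as a correction to the draft.
    return [yi + zi for yi, zi in zip(y, z)]


def toy_is_confident(x: Vector, y: Vector) -> bool:
    # Confident when every component is within a small tolerance of 2 * x.
    return max(abs(2.0 * xi - yi) for xi, yi in zip(x, y)) < 1e-6


if __name__ == "__main__":
    answer, cycles = recursive_refine(
        [1.0, 2.0, 3.0], toy_update_latent, toy_update_answer, toy_is_confident
    )
    print(answer, cycles)
```

In this toy run the loop exits before the 16-cycle cap because the draft reaches the tolerance early; the same skeleton would apply with learned update functions in place of the stand-ins.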

Why this matters:

Business Leaders: This is what algorithmic advantage looks like. While competitors are paying massive inference costs for brute-force scale, a smarter, more efficient model can deliver superior performance for a tiny fraction of the cost.

Researchers: This is a major validation for neuro-symbolic ideas. The model's ability to recursively "think" before "acting" demonstrates that architecture, not just scale, can be a primary driver of reasoning ability.

Practitioners: SOTA reasoning is no longer gated behind billion-dollar GPU clusters. This paper provides a highly efficient, parameter-light blueprint for building specialized reasoners that can run anywhere.

This isn't just scaling down; it's a completely different, more deliberate way of solving problems.
