4ldo Malkinson

293 posts

@4ldo9

Tres Comas Boom ! Sarcasm, CS & Tequila

Advanced Machine Intelligence (AMI) is building a new breed of AI systems that understand the world, have persistent memory, can reason and plan, and are controllable and safe. We’ve raised a $1.03B (~€890M) round from global investors who believe in our vision of universally intelligent systems centered on world models. This round is co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions, along with other investors and angels across the world. We are a growing team of researchers and builders, operating in Paris, New York, Montreal and Singapore from day one. Read more: amilabs.xyz AMI - Real world. Real intelligence.

@TrueFactsStated I sometimes think that people from abroad who now live in France appreciate the country more than the French do, since we can compare. The health systems, for example: French vs. NHS…

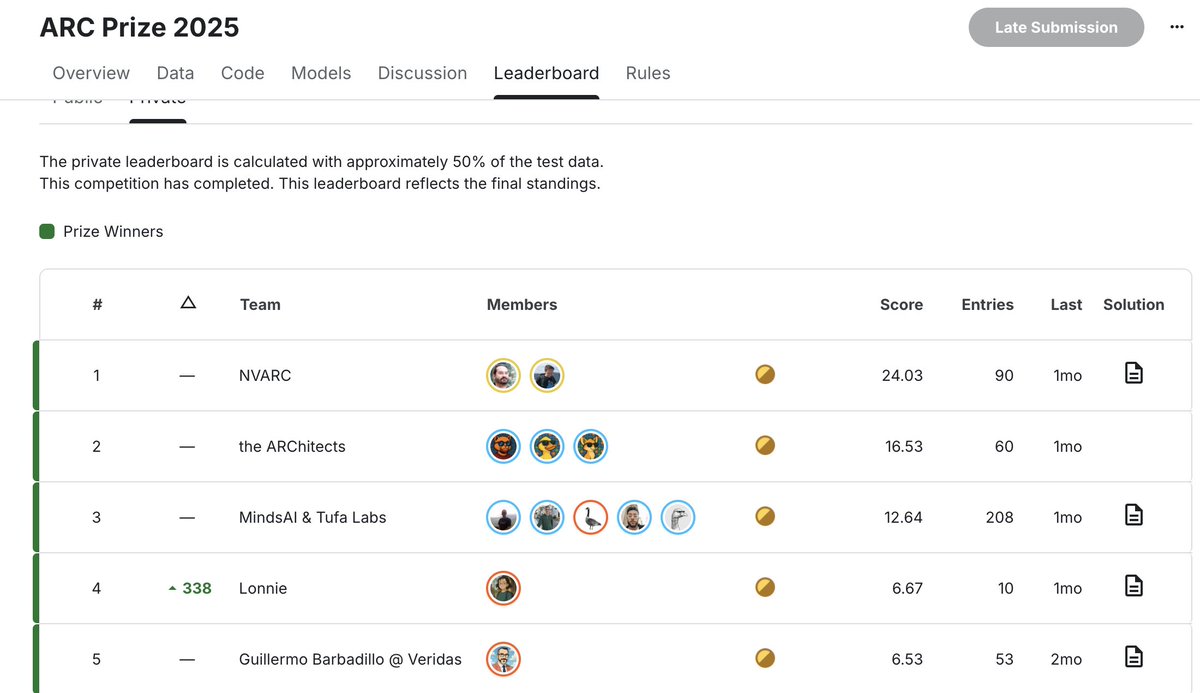

Gemini 3 Deep Think (2/26) Semi-Private Eval
- ARC-AGI-1: 96.0%, $7.17/task
- ARC-AGI-2: 84.6%, $13.62/task

New ARC-AGI SOTA model from @GoogleDeepMind

Claude Opus 4.6 (120K Thinking) on ARC-AGI Semi-Private Eval, Max Effort:
- ARC-AGI-1: 93.0%, $1.88/task
- ARC-AGI-2: 68.8%, $3.64/task

New ARC-AGI SOTA model from @AnthropicAI