Jason

6.2K posts

@ibuildthecloud AI code writing is the best thing to happen to PowerShell... I too was amazed by how clunky the syntax was when I first saw it.

English

@FutureInclined @peterrhague This is interesting: migrating to silicon instead of "copying" addresses continuity of experience in mind/body. Otherwise it's a mind-clone that absolutely does not feel like immortality to your dying brain/body.

English

@peterrhague We need to invent an intermediate substrate which grows parallel to your neurons and maps to them 1:1 in real time. It must be biocompatible and able to substitute for your neurons as they die.

This may answer the mind-body problem, but it's decades off at best.

English

You are not going to be able to upload your mind in 15 years

Rand@rand_longevity

if you are alive in 15 years you are gonna be able to upload your mind and become semi-immortal

English

@peterrhague people underestimate the amount of time things take by an order of magnitude sometimes

English

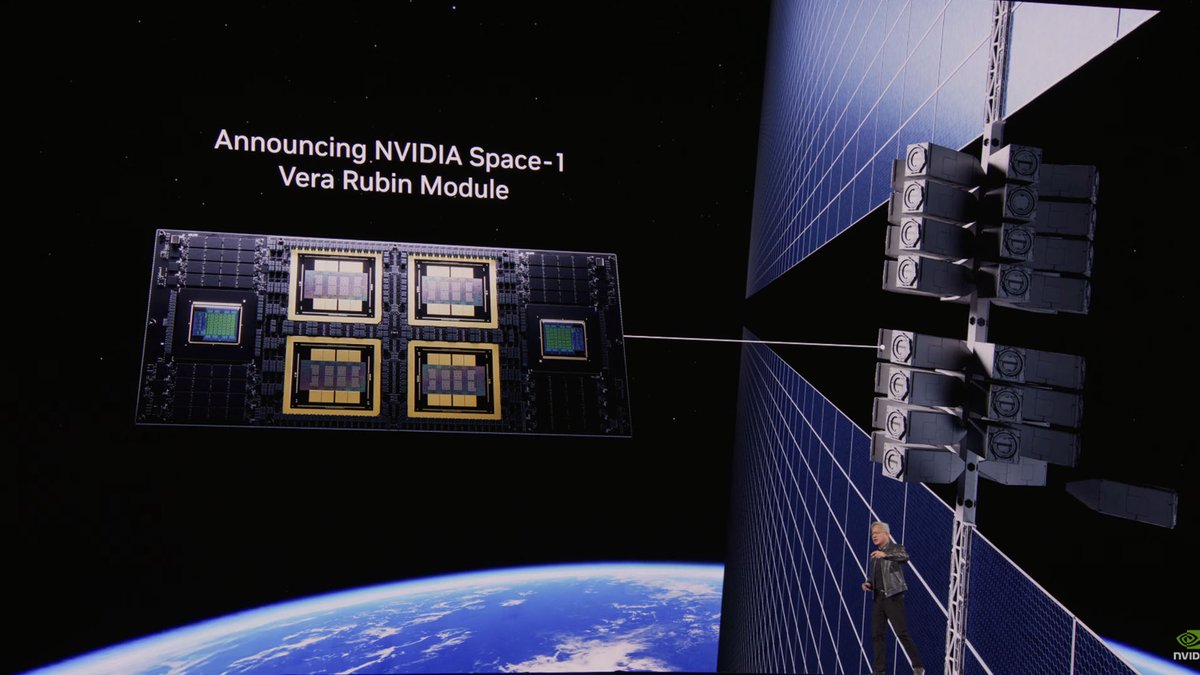

@braden_tewinkel @NVIDIADC I'd also be curious about the density of power supplied from solar panels vs. the power consumption of those chips...

English

@NVIDIADC Have we figured out how to cool in space yet?

English

The next chapter of space computing is here 🛰️

NVIDIA and its ecosystem are advancing AI from Earth-to-space across:

✔️ Earth Orbit and Infrared Imagery

✔️ Radio Frequency and Synthetic Aperture Radar

✔️ Autonomous Space Operations

Leading commercial space companies and mission-grade, radiation-hardened partners are scheduling deployments of NVIDIA Jetson Orin, IGX Thor, and the Vera Rubin Space-1 module for on-orbit AI inference and ground data processing.

Explore the final frontier of AI 🔗 nvda.ws/4wb6qQd

English

@braden_tewinkel @mirsblog @NVIDIADC Most of this audience doesn't understand that temperature in a vacuum is not the same as temperature in a pool of air, water, etc. To answer your question, the heat is radiated as infrared into the vacuum, but I have no idea of the efficiency numbers. They will be running very hot, I think.

English

@Castellani2014 Marcello Hernandez as Sebastian Maniscalco was prob the best SNL impression of the decade.

English

@buccocapital Wouldn't a sufficiently intelligent AI be able to build their products without instructions? It's not like it's rocket science. The only thing keeping them from making a copy is customer relationships.

English

@engineers_feed False. Air resistance will slow you constantly until, after some number of oscillations, you end up floating in the center, I think.

English

@BrianG12321 @CWood_sdf May I ask where this game is? If AI is writing that much well-directed code, the game should be nearing a playable state, no? I'm curiously looking around for major products that are actually AI-generated and serving a market profitably.

English

No no no, specifications are the wrong take.

You write an extraordinarily detailed description of the desired user experience. Then let the AI work backwards from there.

Before I started my game I had 30,000 lines of markdown files detailing the user experience.

Now on days when I am distracted by my other work I can tell the AI to reference the docs and come up with 20 action items and execute on them. And I usually end up keeping 90% of the work it does.

And none of those docs have technical specifications.

English

@Tazerface16 They have a model that tells them what word comes next just like you do. You’re just too arrogant to expand your definition of thought.

English

@thejmkane @nejatian Go pour a cup of coffee. Make a client feel confident. Demonstrate energy and enthusiasm. Everything outside of the spreadsheet and word processor that requires situational awareness.

English

@nejatian You’re hiring junior consultants? What could they possibly do that AI can’t?

English

If you are graduating university and are about to join a consulting firm, don't do that. Do this instead.

Just send me a pic of your offer from one of the top 5 and we'll get you an offer to join Opendoor instead.

Kaz Nejatian@nejatian

Come join our mission to tilt the world in favor of homeowners. We are growing our operations team in Toronto. opendoor.com/careers/open-p…

English

@vanguard_btc @ChrisCamillo We don't need AGI to be wildly more efficient in almost every task. AGI is the icing on an already rich cake.

English

@ChrisCamillo Does the overspend on LLMs not freak you out? They are the wrong model type for AGI.

English

@howardlindzon More of his Art of the Deal BS. If he says it should have been twice as bad, he can invent a narrative that things are twice as good as they should be.

English

@EpsilonTheory I’d guess there are trillions of objects out there locked in orbits like these, free floating through space in the dark.

English

Total And Complete Hunter Biden Victory

Pubity@pubity

Scientists have discovered that cocaine-powered super salmon live longer and swim faster than normal salmon. They found that giving coke to salmon made them swim almost twice as far and live around 20 days longer than normal salmon during a test in Sweden.

English

@davidchalmers42 @WorldSciFest @bgreene Treating consciousness as a binary is a huge error. I think current LLMs have partial consciousness rooted in language. They experience the world through words and we'd see that more clearly if they went from static models to continuous active neural processing.

English

this clip of me talking about AI consciousness seems to have gone wide. it's from a @worldscifest panel where @bgreene asked for "yes or no" opinions (not arguments!) on the issue. if i were to turn the opinion into an argument, it might go something like this:

(1) biology can support consciousness.

(2) biology and silicon aren't relevantly different in principle [such that one can support consciousness and the other not].

therefore:

(3) silicon can support consciousness in principle.

note that this simple argument isn't at all original -- some version of it can probably be found in putnam, turing, or earlier. note also that the (controversial!) claim that the brain is a machine (which comes down to what one means by "machine") plays no essential role in the argument.

of course reasonable people can disagree about the premises!

perhaps the key premise is (2) and it requires support. one way to support it is to go through various candidates for a relevant principled difference between biology and silicon and argue that none of them are plausible. another way is through the neuromorphic replacement argument that i discuss later in the same conversation.

some see a tension between (1)/(3) and the hard problem. but there's not much tension: one can simultaneously allow that brains support consciousness and observe that there's an explanatory gap between the two that may take new principles to bridge. the same goes for AI systems.

this isn't a change of mind: i've argued for the possibility of AI consciousness since the 1990s. my 1994 talk on the hard problem (youtube.com/watch?v=_lWp-6…) outlined an "organizational invariance" principle that tends to support AI consciousness. you can find versions of the two strategies above for arguing for premise 2 in chapters 6 and 7 of my 1996 book "the conscious mind".

i'm not suggesting that current AI systems are conscious. but in a separate article on the possibility of consciousness in language models (bostonreview.net/articles/could…), i've made a related argument that within ten years or so, we may well have systems that are serious candidates for consciousness.

the strategy in that article on LLM consciousness is analogous to the first strategy above in arguing for AI consciousness more generally. i go through the most plausible obstacles to consciousness in language models, and i argue that even if these obstacles exclude consciousness in current systems, they may well be overcome in a decade.

of course none of this is certain. but i think AI consciousness is something we have to take seriously.

[the full conversation with @bgreene and @anilkseth can be found at youtube.com/watch?v=06-iq-…]

Tsarathustra@tsarnick

David Chalmers says it is possible for an AI system to be conscious because the brain itself is a machine that produces consciousness, so we know this is possible in principle

English