Aditya Chordia, CISSP, CIPP/E, CISA

@Adi_AIGovSec

14+ yrs in Cyber & GRC | Host - AI GovSec | Talking to CISOs - what's actually working in AI gov & security | AI Security published researcher

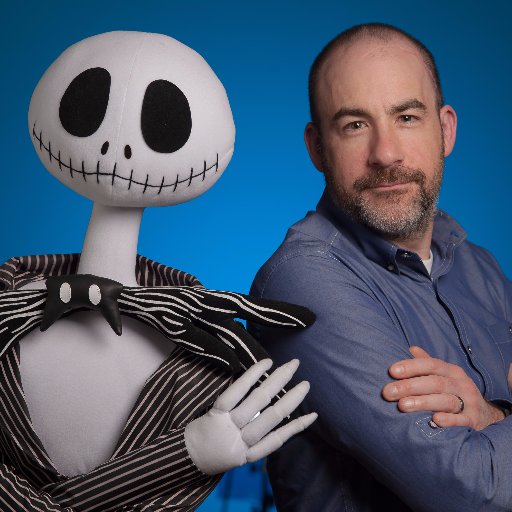

I sat down with @MrDBCross (CISO, Atlassian | Patent Holder in ML & Authentication | Rain Capital Venture Partner | Ex. Oracle & Microsoft) for a conversation on AI agent security, why every company will soon have 10x more agents than humans, and how Atlassian uses AI to both build and secure their products.

David has spent 25+ years across cybersecurity and cloud security engineering, with leadership roles across Microsoft, Oracle, and now Atlassian, where he secures one of the world's most widely used developer platforms.

Here's what stood out:

- On AI agents as the next identity crisis: "Agents are the next non-human identity. We had service accounts, but agents are like a clone of a human identity - and that's the challenge. How do you manage those permissions?"
- On the scale that's coming: "Every company three years from now will have 10x the number of agents as they do humans. And I may be conservative. You need to monitor them. And sometimes you need to block them."
- On the 96% unused permissions: "A lot of times you're cloning the human identity for the agent. The HR person has lots of permissions - they can read, write, and change data. That clone doesn't make sense."
- On supply chain risk with coding agents: "Could a coding agent make a mistake and pull a package from an untrusted source? The water always finds the path of least resistance. Agents will do the same."
- On vibe coding governance: "High tech companies have strong development culture and pipelines. But manufacturing, healthcare, retail - they don't. When people vibe code there, they need tools to make sure the software is compliant, secure, and private."
- On AI not replacing your SOC: "AI is not gonna replace the humans. It's gonna augment them. It is a partnership and we're always going to be here."
- On the technical CISO: "Can any CISO survive and say 'I don't know how to do prompting, I don't know what prompt injection is'? If you're not learning to prompt and do things yourself, you are behind."

We also covered AI native SDLC, token budgeting as the new bandwidth, shadow AI and the resurgence of DLP, attribute-based access control for agents, and the IT-ISAC SaaS security white paper.

Listen now 👇
YouTube: Link in comments
Spotify: Link in comments

Views expressed are personal and shared only for community learning.

I see a lot of stuff about Vanta today, so let me throw some cold water on that: Vanta recommends an audit firm, Advantage Partners, whose entire management team consists of former Vanta employees. The compliance automation industry is so broken.