Andreas Hronopoulos

42 posts

@Ahronopoulos

San Diego, California. Joined April 2010

2.4K Following 434 Followers

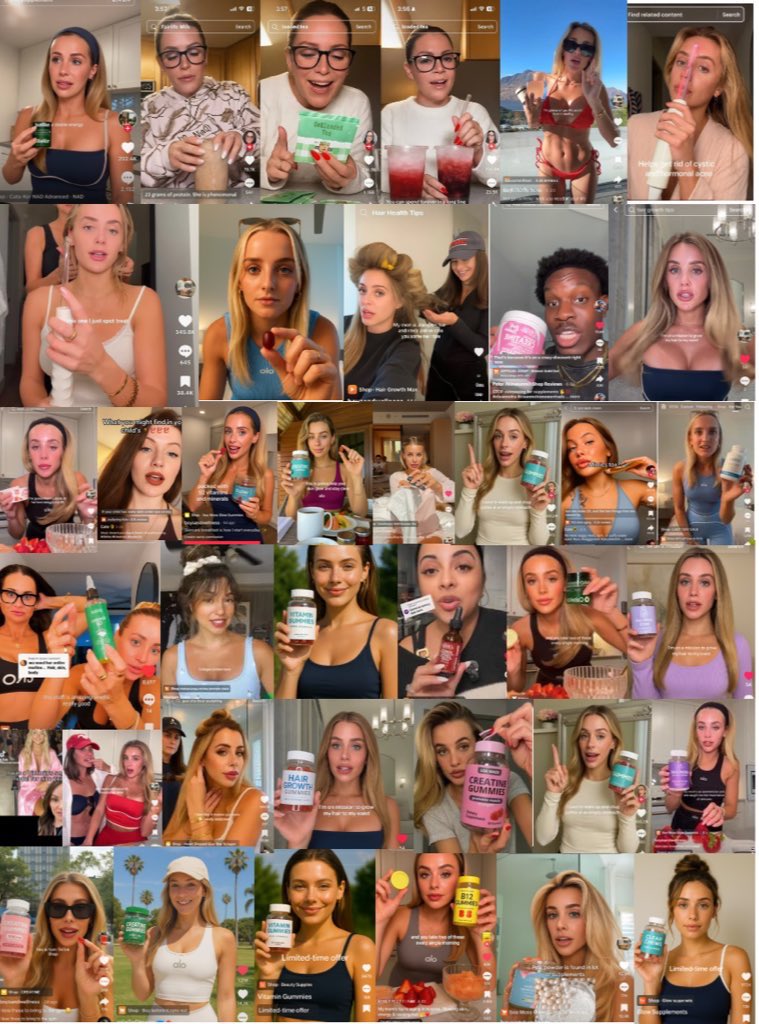

Clawdbot + Kling = 550 videos per day

Fully-realistic UGC ads — cinematic lighting, human motion, perfect pacing — powered by AI agents.

UGC cost: $5

Production time: minutes

Scale: instant

One AI engine that creates, tests, and scales short-form ads automatically — nonstop.

It’s live. Campaigns are scaling now.

Comment + RT “AGENT” and I’ll DM you the full workflow.

(Must be following)

Our Applied AI team at Kentauros (kentauros.ai) spends a lot of time tackling the problem of GUI navigation for agents.

Today, we are releasing our first dataset, WaveUI-25k, and our first fine-tuned vision-language model:

huggingface.co/collections/ag…

Why bother about GUI Navigation? Won’t AI just use APIs or have a clear machine-learning interface?

Yes, but APIs aren’t always available, and it will take many years for ML APIs to become a reality, so we’re meeting the world where it is today.

It’s also because UIs are uniquely human, much like language. They’re a high-level abstraction of the world, and that makes them a fantastic training ground for advanced AI development because they’re complex, challenging, ever-changing, and filled with endless edge cases.

They’re a great approximation for AI that uses real-world models, planning, prediction and adaptation on the fly.

Next week we’re releasing a new agent that navigates GUIs very well with a combination of heuristics, classical computer vision and MLLMs. Although we’re super excited about our Gen 2 agent release, there are still some limitations. Rather than bolting an expert system onto an ML model, it would be absolutely ideal if models were good at knowing where and what to click on a UI.

Unfortunately, we’ve discovered that today’s frontier models like GPT-4o, Claude Opus 3, Sonnet 3.5, Qwen2, and InternVL are absolutely terrible at three key things on UIs:

1) Returning coordinates

2) Moving the mouse

3) Drawing bounding boxes on GUIs

The reasons shouldn't be surprising. They just weren't trained to do any of these things.

That’s why, for our Gen 3 agent, we’re already working on a click model as the primary navigator, and we’ll fall back to classical techniques as needed.

Our WaveUI-25k dataset contains 25K well-labeled images that you can use to train a click and coordinate model. We’re currently auto-labeling a larger version of the dataset that is roughly 80K images and we’ll release that soon.

Why do you want this?

Well, maybe you want a model that can navigate smartphones or desktops.

Or maybe you want to control remote desktops the way our AgentSea platform does: an agent navigates a virtual machine much like a human navigating TeamViewer, but with the agent in charge. We do this with our ToolFuse protocol (github.com/agentsea/toolf…) and AgentDesk (github.com/agentsea/agent…) Python packages.

We built this dataset by collecting examples from other existing datasets. The three main sources are:

- WebUI (uimodeling.github.io)

- RoboFlow Website Screenshots (universe.roboflow.com/roboflow-gw7yv…)

- GroundUI-18K (huggingface.co/datasets/agent…)

The datasets are great and we have tremendous respect for the teams that gathered them, but unfortunately many of the labels were less than ideal or just wrong. So we set about consolidating these fantastic resources into a new, unified, well-labeled dataset.

We’ve preprocessed them to have a matching format and programmatically filtered out unwanted examples, such as duplicated, overlapping or low-quality elements.
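As a rough illustration of that kind of programmatic filtering, here is a minimal sketch that drops tiny boxes and near-duplicate boxes on a single screenshot using intersection-over-union (IoU). This is not our actual pipeline: the `(x_min, y_min, x_max, y_max)` box layout, the function names, and the `min_side` and `max_iou` thresholds are all assumptions chosen for the example.

```python
from typing import List, Tuple

# Assumed layout: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix_min, iy_min = max(a[0], b[0]), max(a[1], b[1])
    ix_max, iy_max = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix_max - ix_min) * max(0.0, iy_max - iy_min)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def filter_boxes(
    boxes: List[Box], min_side: float = 4.0, max_iou: float = 0.9
) -> List[Box]:
    """Drop degenerate boxes and boxes that nearly coincide with a kept one."""
    kept: List[Box] = []
    for box in boxes:
        width, height = box[2] - box[0], box[3] - box[1]
        if width < min_side or height < min_side:
            continue  # too small to be a usable click target
        if any(iou(box, other) > max_iou for other in kept):
            continue  # duplicate or near-duplicate of a box already kept
        kept.append(box)
    return kept
```

The thresholds are illustrative only; in practice they would need tuning per source dataset.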

The resulting pre-processed dataset has ~80k examples at the object/element level -- i.e. each row consists of the bounding box coordinates of a UI object, along with the screenshot of the underlying UI (and extra info, like source, screen size, etc).

We then took ~25k examples from the dataset and annotated them to get the following additional info, per example:

- name: A descriptive name of the UI element.

- description: A long, detailed description of the element

- type: The type of the UI element

- OCR: OCR of the UI element. Set to null if no text is available

- language: The language of the OCR text, if available. Set to null if no text is available

- purpose: A general purpose of the element

- expectation: An expectation of what will happen when you click this element
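To make the row layout concrete, here is a hedged sketch of one annotated example as a Python dataclass. The annotation fields mirror the list above; the remaining field names (`image_path`, `bbox`, `resolution`, `source`), the bounding box layout, and both helper methods are assumptions for illustration and may not match the published column names.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class UIElementExample:
    # Pre-processed fields (names are assumed, not the published schema).
    image_path: str
    bbox: Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max), pixels
    resolution: Tuple[int, int]  # (width, height) of the screenshot
    source: str
    # Annotation fields described in the list above.
    name: str
    description: str
    type: str
    ocr: Optional[str]  # None when the element has no text
    language: Optional[str]  # None when the element has no text
    purpose: str
    expectation: str

    def click_point(self) -> Tuple[float, float]:
        """Centre of the bounding box, one simple choice of click target."""
        x_min, y_min, x_max, y_max = self.bbox
        return ((x_min + x_max) / 2, (y_min + y_max) / 2)

    def normalized_click_point(self) -> Tuple[float, float]:
        """Click target scaled to [0, 1] so it is resolution independent."""
        width, height = self.resolution
        x, y = self.click_point()
        return (x / width, y / height)
```

Using the box centre as the click target is only one option; a click model could equally be trained on the full box.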

You can explore the dataset at huggingface.co/spaces/agentse…

We’ve also fine-tuned a very small PaliGemma model as a proof of concept. You can check it out here: huggingface.co/spaces/agentse…

You can upload a screenshot and use the phrase “detect XYZ”.

For example, on a screenshot of Google Flights:

* detect the “Return” field

* detect the Vacation rentals button

* detect the flying plane in the picture
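If you call the model directly rather than through the demo, you will need to turn its text output into pixel boxes. The sketch below assumes the fine-tuned model keeps the base PaliGemma detection convention: four `<locNNNN>` tokens per object in the order y_min, x_min, y_max, x_max, each a value from 0 to 1023 on a 1024-bin grid, followed by a label, with objects separated by `;`. The function name and return shape are made up for this example.

```python
import re
from typing import List, Tuple

# Four consecutive <locNNNN> tokens followed by a free-text label.
LOC_PATTERN = re.compile(r"((?:<loc\d{4}>){4})\s*([^;<]+)")

Detection = Tuple[str, Tuple[float, float, float, float]]


def parse_detections(text: str, width: int, height: int) -> List[Detection]:
    """Convert loc-token output into (label, (x_min, y_min, x_max, y_max)) in pixels."""
    results: List[Detection] = []
    for locs, label in LOC_PATTERN.findall(text):
        # Assumed token order: y_min, x_min, y_max, x_max on a 1024-bin grid.
        y_min, x_min, y_max, x_max = (
            int(value) / 1024 for value in re.findall(r"\d{4}", locs)
        )
        box = (x_min * width, y_min * height, x_max * width, y_max * height)
        results.append((label.strip(), box))
    return results
```

Pass the original screenshot size as `width` and `height`, not the resolution the model resizes to internally.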

To be clear, the PaliGemma model is too small and has too low a resolution to be state of the art. It’s a little too rigid in how it detects things and we want it to be more flexible. It sometimes nails detection tasks and it’s sometimes just wildly off base.

But it is proof that a properly trained model with a well-annotated dataset can make headway on the gnarly problem of navigating GUIs on the wide and wonderful world of the web.

Our next steps are simple:

* Get the full dataset annotated and release it in the next few weeks (we only used 25k out of ~80k potential examples)

* Train the variant of PaliGemma that has higher resolution

* Train a larger multimodal model like InternVL or Chameleon

We’ll see you next week when we release our Gen 2 agent!

@teovito @skirano @everartai any suggestions on how to improve writing with negative prompts?

These pics were AI-generated using @everartai. Not perfect, but almost. The role of social media managers is getting easier. Someone still has to prompt the AI, generate pictures and videos, and build engagement, but very soon a social media manager will be able to manage more accounts simultaneously. As a business and agency owner, I see thousands of dollars in savings each year. Well done @skirano

No excuses.

Take care of your health.

Don’t wait until you’re 48 like me.

- Weight train M-F

- Yoga Sat-Sun

- Eating Macros / Protein

- Daily Meditation

- 10 pages nonfiction a day

- Accountability friends (txt weight ea day)

- Joined @danmartell fitness program

XR & VISION PRO NETWORKING THREAD 🚨 I see lots of old school XR people decrying the new wave of Vision Pro excitement with the old "we've been doing that for years!" But rather than cast new people as 'the other', we should all get connected so ideas new and old can synergize!

So, reply to tell us who you are and what you're building or interested in. And retweet to spread this thread!

@MattPRD @Scobleizer What volumetric video are you watching on Vision Pro?

@Scobleizer Probably instagram and TikTok, but could also be someone new.

Vision Pro isn’t perfect but volumetric videos are A+++

@WebAMV Does this work on any of the headsets for webxr?

The BVH is now built on the GPU with WebGPU, so there is no build-time wait when switching between large models, and the Viewer experience is smoother👏

Try it too😆:

lgltracer.com/viewer/index.h…

#webgpu #raytracing #threejs #viewer

Andreas Hronopoulos retweeted

@nutlope What if you wanted to take a specific style of images and use that as the theme? Let's say a specific designer who has a style.

Announcing roomGPT!

Redesign your room in seconds with AI! 100% free and open source.

roomgpt.io

Andreas Hronopoulos retweeted

In this moment, I am euphoric.

Kraken@krakenfx

As we gear up for 2022, Kraken is celebrating its continued growth and success with the expansion of its ‘staking’ tentacle through an acquisition of Staked. 🥳 Check out our blog for more details about this exciting deal! blog.kraken.com/post/12265/kra…

Andreas Hronopoulos retweeted

@devinaconley What about staking NFTs for music/video in the form of a license with the gysr managing the royalties?

I started an EIP discussion on a standard interface to represent NFT "functional ownership" when held by another smart contract. This is very relevant for many DeFi + NFT use cases.

Would love to hear any thoughts or feedback!

ethereum-magicians.org/t/erc-standard…
