Alek Sobczyk



@AleksandrosSob1

Huawei Research Center, Zurich. Ex-IBM and ETH. Views are my own.

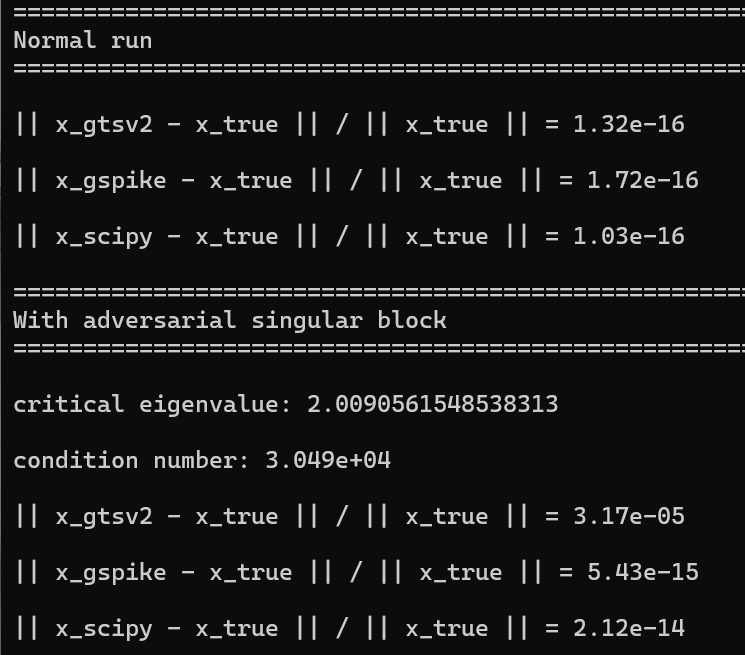

We have a new preprint on DFT and eigenvalue algorithms: "Hermitian Pseudospectral Shattering, Cholesky, Hermitian Eigenvalues, and Density Functional Theory in Nearly Matrix Multiplication Time". Link to arXiv: arxiv.org/abs/2311.10459

Paper: Analysis of Corrected Graph Convolutions

We study the performance of a vanilla graph convolution from which we remove the principal eigenvector to avoid oversmoothing.

1) We perform a spectral analysis for k rounds of corrected graph convolutions, and we provide results for partial and exact classification.
2) For partial classification, we show that each round of convolution can reduce the misclassification error exponentially up to a saturation level, after which performance does not worsen.
3) For exact classification, we show that the separability threshold can be improved exponentially up to O(log n/log log n) corrected convolutions.

Link: arxiv.org/abs/2405.13987

P.S.: That's the first paper produced as part of the graduate course (CS886, 2024) on graph neural networks that I am teaching!
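
To make the idea of "removing the principal eigenvector" concrete, here is a minimal NumPy sketch. It is an illustration under stated assumptions, not the paper's implementation: the function name `corrected_graph_convolution`, the choice of the symmetric-normalized adjacency matrix, and the requirement of a connected undirected graph with no isolated nodes are all assumptions made for this example.

```python
import numpy as np


def corrected_graph_convolution(adj, features, rounds):
    """Apply `rounds` graph convolutions with the top eigen-direction removed.

    Assumptions (not from the source): `adj` is a symmetric 0/1 adjacency
    matrix of a connected graph with no isolated nodes, and the convolution
    operator is the symmetric-normalized adjacency D^{-1/2} A D^{-1/2}.
    """
    adj = np.asarray(adj, dtype=float)
    x = np.asarray(features, dtype=float)

    # Symmetric normalization of the adjacency matrix.
    deg = adj.sum(axis=1)
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    adj_norm = adj * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]

    # eigh returns eigenvalues in ascending order, so the last pair is
    # the principal (largest-eigenvalue) one.
    eigvals, eigvecs = np.linalg.eigh(adj_norm)
    top_val, top_vec = eigvals[-1], eigvecs[:, -1]

    # Subtract the rank-one principal component from the operator.
    corrected = adj_norm - top_val * np.outer(top_vec, top_vec)

    for _ in range(rounds):
        x = corrected @ x
    return x


if __name__ == "__main__":
    # Hypothetical toy graph: two triangles joined by a single edge.
    A = np.zeros((6, 6))
    for i, j in [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]:
        A[i, j] = A[j, i] = 1.0
    X = np.random.default_rng(0).normal(size=(6, 2))
    print(corrected_graph_convolution(A, X, rounds=3))
```

Without the correction, repeated multiplication by the normalized adjacency pushes every feature column toward the principal eigenvector, which is the oversmoothing effect the post mentions; subtracting that rank-one component keeps the output orthogonal to it after every round.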