Pinned Tweet

Aligned

8.4K posts

@alignedlayer

Aligned builds the tools that turn Ethereum into the world’s financial backend.

Ethereum · Joined January 2024

37 Following · 84.3K Followers

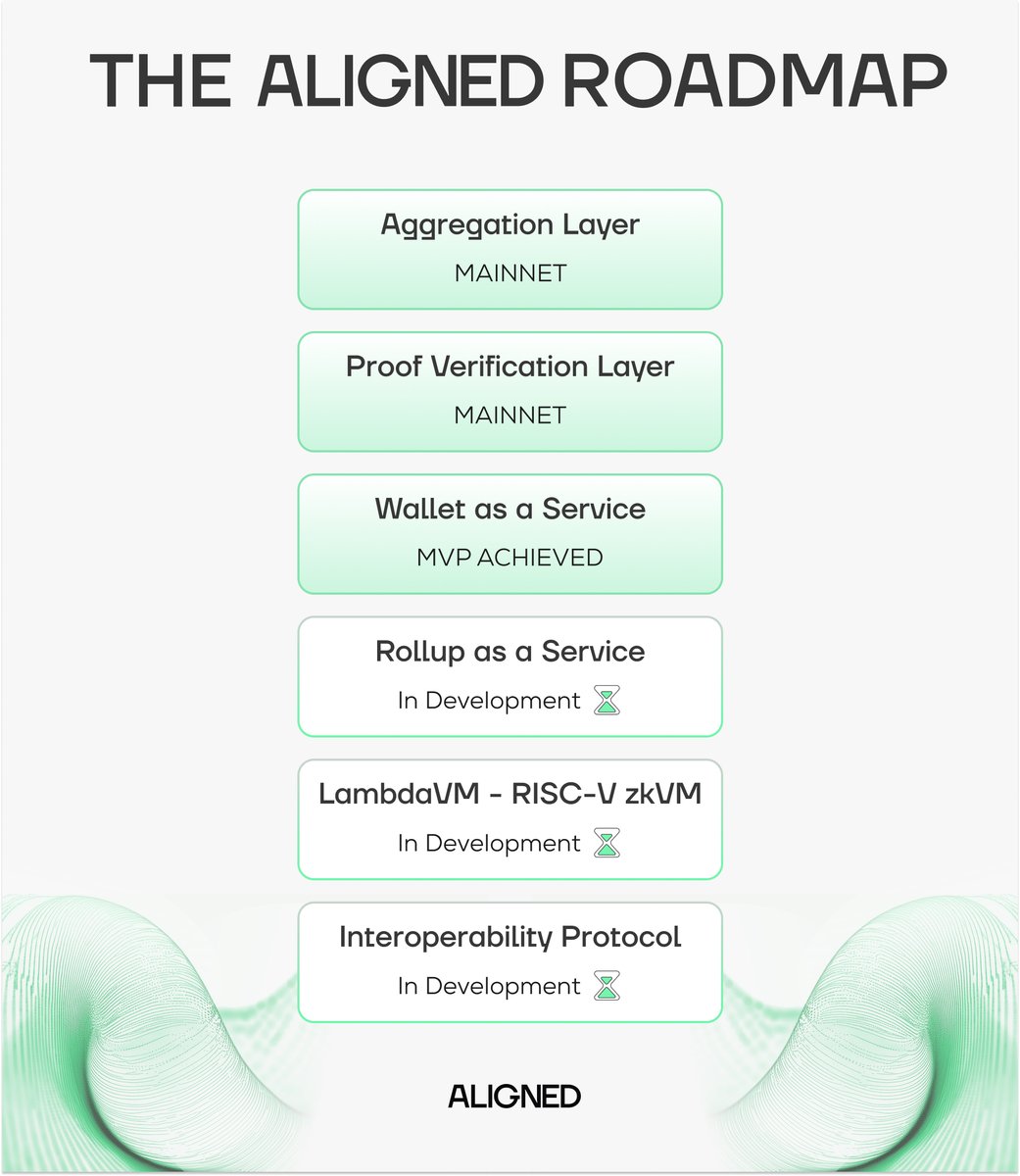

> Proof System

• Goldilocks field, cubic extension, 128 bits of provable security

• FRI as polynomial commitment scheme

• Fully post-quantum, no elliptic curves
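To make the first bullet concrete, here is a minimal sketch of base-field arithmetic, assuming "Goldilocks" refers to the commonly used prime p = 2^64 - 2^32 + 1. This is not LambdaVM's code: the names `P`, `add`, `sub`, `mul`, `pow`, and `inv` are hypothetical, and the reduction uses a plain `u128` remainder rather than the specialized reduction a production prover would likely use. The cubic extension itself is not shown.

```rust
/// Goldilocks prime, assumed here to be p = 2^64 - 2^32 + 1.
pub const P: u64 = 0xFFFF_FFFF_0000_0001;

/// Addition mod p; inputs are expected to be already reduced (< P).
pub fn add(a: u64, b: u64) -> u64 {
    ((a as u128 + b as u128) % P as u128) as u64
}

/// Subtraction mod p; adding P first keeps the intermediate non-negative.
pub fn sub(a: u64, b: u64) -> u64 {
    ((a as u128 + P as u128 - b as u128) % P as u128) as u64
}

/// Multiplication mod p via a 128-bit intermediate product.
pub fn mul(a: u64, b: u64) -> u64 {
    ((a as u128 * b as u128) % P as u128) as u64
}

/// Square-and-multiply exponentiation.
pub fn pow(mut base: u64, mut exp: u64) -> u64 {
    let mut acc = 1u64;
    while exp > 0 {
        if exp & 1 == 1 {
            acc = mul(acc, base);
        }
        base = mul(base, base);
        exp >>= 1;
    }
    acc
}

/// Inverse of a nonzero element via Fermat's little theorem: a^(p-2).
pub fn inv(a: u64) -> u64 {
    pow(a, P - 2)
}
```

With x = 2^32, p = x^2 - x + 1 divides x^3 + 1, so `pow(2, 96)` equals `P - 1` (that is, -1 mod p). This structure is part of why the field is convenient on 64-bit hardware. A cubic extension of this field has roughly 2^192 elements.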

> Status

• ~27,000 lines today, targeting ~35,000.

• Performance is competitive with state-of-the-art zkVMs.

• A GPU version and proof-size optimizations are in progress.

• @ethrex_client integration is done.

Full talk from the Main Stage at zkSummit14 in Rome:

youtube.com/watch?v=zCY-YH…

2/

LambdaVM at zkSummit14: A Talk on Our Minimalistic and Performant RISC-V zkVM

At zkSummit14 in Rome, @diego_aligned (Co-founder and Head of Research at @alignedlayer) presented LambdaVM, a RISC-V zkVM built collaboratively by @class_lambda, @alignedlayer, and @3milabs. The goal is to make the zkVM easy to understand and easy to audit, using the same philosophy behind @ethrex_client, the execution client developed by @class_lambda.

> The Problem

Most zkVMs have hundreds of thousands of lines of code, creating huge attack surfaces. Strong cryptographic arguments on paper don't guarantee secure implementations. Supply-chain attacks, subtle dependency bugs, and unnecessary abstractions have repeatedly shown that large, hard-to-audit codebases are fragile by design.

> Our Approach

Performance through clarity:

• Written in Rust

• Minimal dependencies, few generics

• Code maps 1-to-1 to spec, enabling formal verification

1/

.@rj_aligned from @alignedlayer:

"If you want verifiable finance, you also need verifiable credentials. You need to bring the trust of existing systems on-chain".

This is what @sovraio enables.

Verifiable identity on the same backend as finance: Ethereum.

The infrastructure making this work: Aligned's RaaS, powered by @ethrex_client.
