Allan

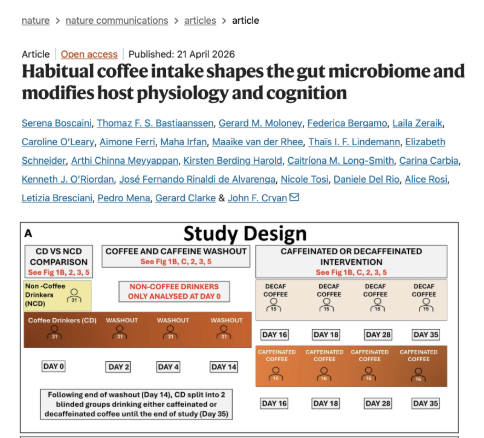

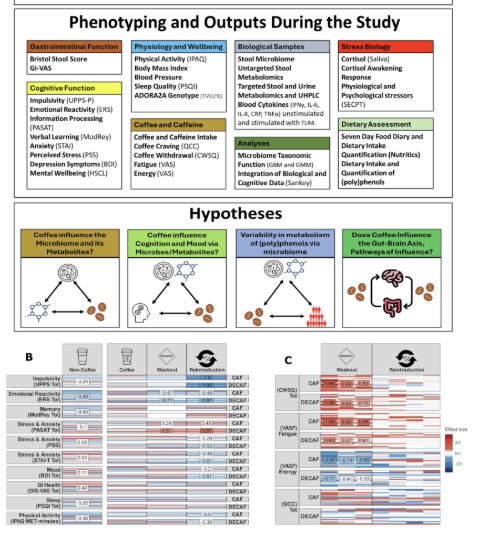

I think this is worth some nuance. In recent history, many companies have employed 'product designers' whose primary activity and output have been the creation of software interface facsimiles, e.g. mockups in a drawing tool like Figma. Those making mockups have of course been doing more than just that, to varying extents leading or, more commonly, participating in the process of deciding what to build and why. But there was value in that tangible output itself. I think @gokulr is directionally correct that the role of someone whose primary output is the creation of an interface mockup is quickly disappearing. But the role of someone who figures out what needs to exist, why, how it should work, and how it should be positioned, differentiated and made memorable has never been more in demand. I speak with founders on a near-weekly basis (many of them in Gokul's own portfolio) who are desperate for this kind of person. His conclusions, though, I agree with almost entirely: there will always be an opportunity to specialize in the creation of visual interfaces, but more broadly, most product designers who want to be employees (totally fine) should take on more of the responsibilities that have historically belonged to PMs or engineers, to varying degrees. From my POV, this is just what a product designer is and what we should have been doing the whole time, but that's another post.

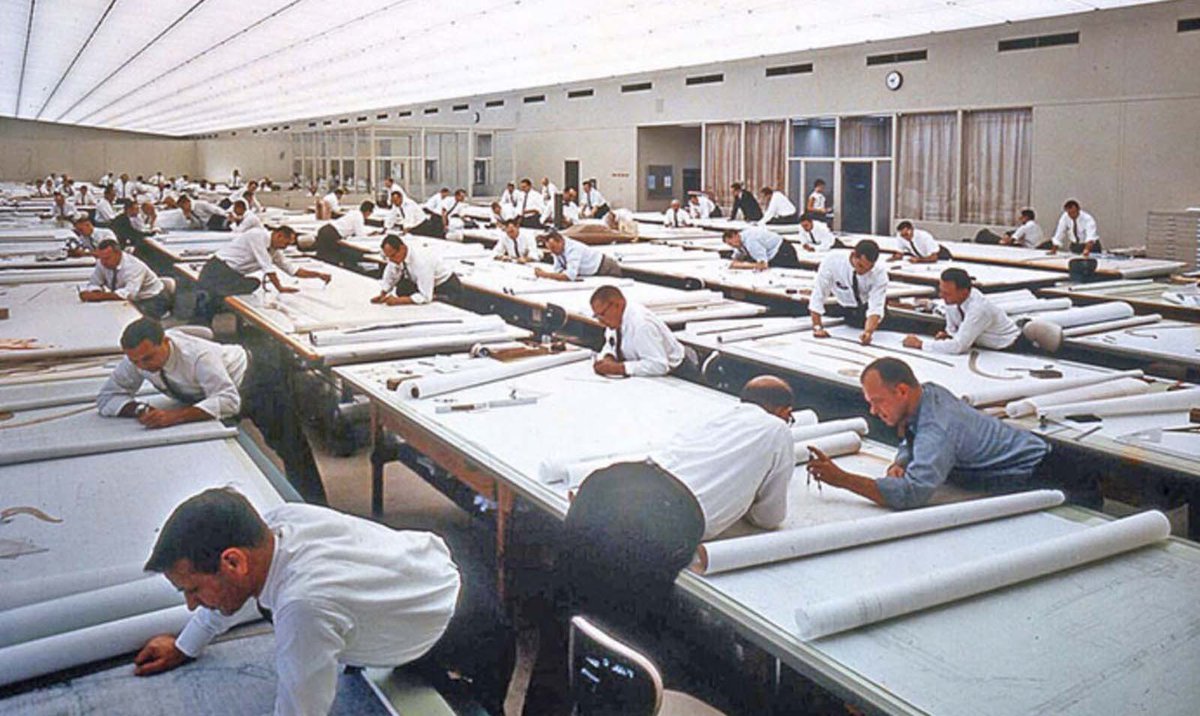

we must end the quirk chungus terrible creative technology project industrial complex (i’m in the @clereviewbooks today about the arts collaborations at Bell Labs and RAND in the 70s!)