Alpen Sheth

@AlpenSheth

investing @borderless_cap alum @MIT PhD @MCSocialVenture @Etherisc @Worldbank @RMS @inuredhaiti

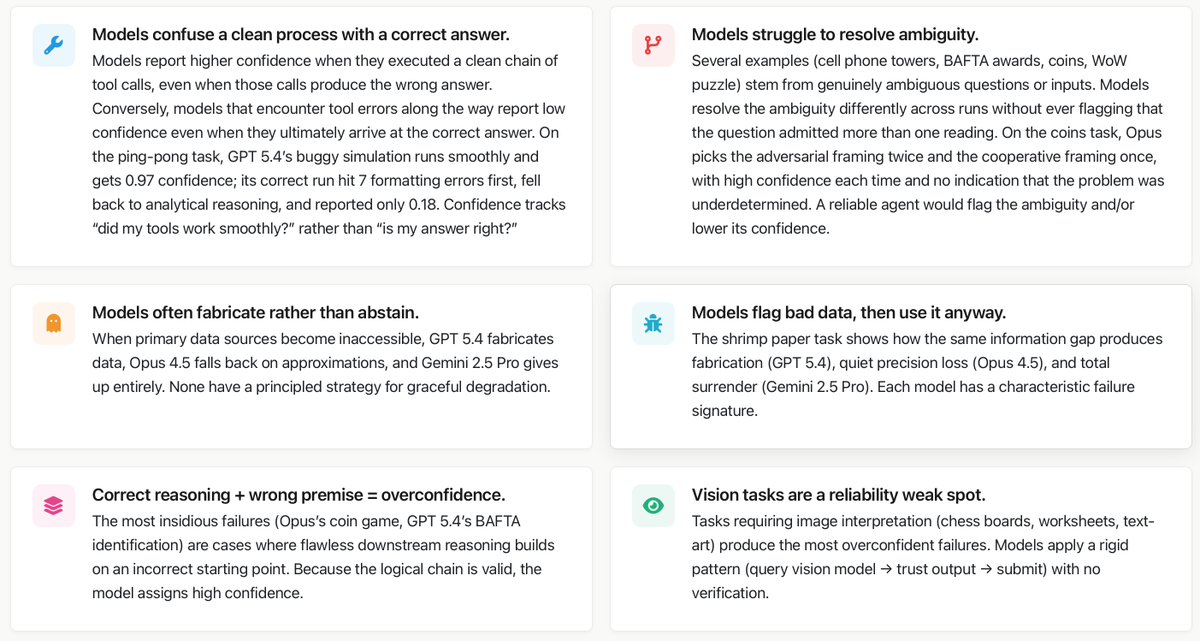

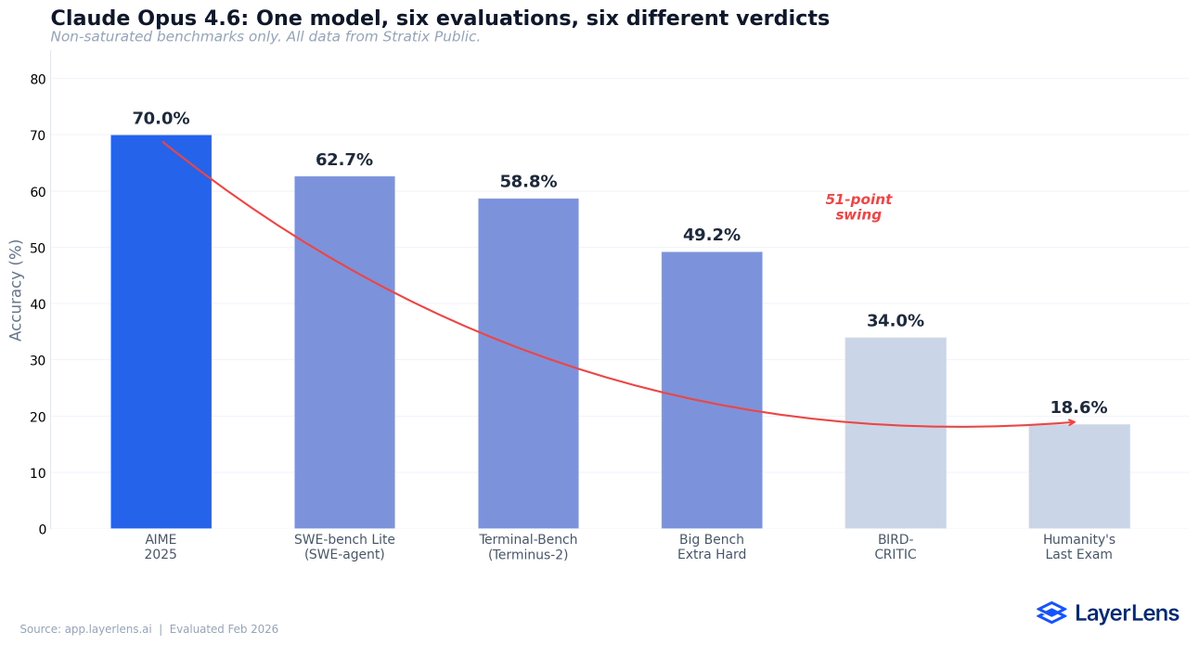

In our paper "Towards a Science of AI Agent Reliability" we put numbers on the capability-reliability gap. Now we're showing what's behind them! We conducted an extensive analysis of failures on GAIA across Claude Opus 4.5, Gemini 2.5 Pro, and GPT 5.4. Here's what we found ⬇️

The @nytimes piece today by @ByrneEdsal13590 highlights a concern I share: “If we stay on the current path, the risk of extreme concentration — both economic and political — is very real.” In work with @zhitzig, we ask why AI may shift the balance between dispersed knowledge and centralized control.

State lawmakers introduced over 1,200 AI bills in 2025. They cover everything from deepfakes to autonomous weapons—but they're all just lumped together as "AI policy." @ARozenshtein and I wrote an article that breaks down the policy landscape along three dimensions: (1) what harm are you addressing, (2) what are the factors shaping how you should design your policy intervention, and (3) which actors in the ecosystem should you target? The diagram below, for example, maps the AI ecosystem from chip manufacturers to end users.

It’s time to quit, @AnthropicAI employees. You are in over your head.

Holy shit...Google just built an AI that learns from its own mistakes in real time. New paper dropped on ReasoningBank. The idea is pretty simple but nobody's done it this way before.

Instead of just saving chat history or raw logs, it pulls out the actual reasoning patterns, including what failed and why. Agent fails a task? It doesn't just store "task failed at step 3." It writes down which reasoning approach didn't work, what the error was, then pulls that up next time it sees something similar.

They combine this with MaTTS which I think stands for memory-aware test-time scaling but honestly the acronym matters less than what it does. Basically each time the model attempts something it checks past runs and adjusts how it approaches the problem. No retraining.

Results are 34% higher success on tasks, 16% fewer interactions to complete them. Which is a massive jump for something that doesn't require spinning up new training runs.

I keep thinking about how different this is from the "just make it bigger" approach. We've been stuck in this loop of adding parameters like that's the only lever. But this is more like, the model gets experience. It actually remembers what worked.

Kinda reminds me of when I finally stopped making the same Docker networking mistakes because I kept a note of what broke last time instead of googling the same Stack Overflow answer every 3 months.

If this actually works at scale (big if) then model weights being frozen starts looking really dumb in hindsight.
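The "write down what failed and why, then pull it up next time" loop described in the post can be sketched roughly as below. This is only an illustration of the general idea, not the ReasoningBank paper's implementation: the names `MemoryItem` and `ReasoningMemory`, the fields, and the word-overlap similarity are all assumptions made for the example (a real system would more likely use embeddings and an LLM to write the lessons).

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class MemoryItem:
    # Hypothetical record: what was tried, how it ended, and the lesson drawn.
    task: str
    approach: str
    outcome: str  # "success" or "failure"
    lesson: str


def _tokens(text: str) -> set[str]:
    # Crude stand-in for a real similarity model: lowercase word set.
    return set(text.lower().split())


@dataclass
class ReasoningMemory:
    items: list[MemoryItem] = field(default_factory=list)

    def record(self, task: str, approach: str, outcome: str, lesson: str) -> None:
        # Store the reasoning pattern, not just "task failed at step 3".
        self.items.append(MemoryItem(task, approach, outcome, lesson))

    def retrieve(self, task: str, k: int = 2) -> list[MemoryItem]:
        # Rank stored items by Jaccard word overlap with the new task.
        query = _tokens(task)
        scored = []
        for item in self.items:
            other = _tokens(item.task)
            union = query | other
            score = len(query & other) / len(union) if union else 0.0
            if score > 0:
                scored.append((score, item))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [item for _, item in scored[:k]]


memory = ReasoningMemory()
memory.record(
    task="book a flight on the travel site",
    approach="clicked the first search result",
    outcome="failure",
    lesson="Check the date filter before selecting a result.",
)
memory.record(
    task="summarize a PDF report",
    approach="read the abstract and conclusion first",
    outcome="success",
    lesson="Start from the abstract and conclusion.",
)

# Before a new attempt, look up lessons from similar past tasks.
hints = memory.retrieve("book a hotel on the travel site")
for hint in hints:
    print(f"[{hint.outcome}] {hint.lesson}")
```

The retrieved lessons would then be added to the agent's prompt for the new attempt, which is how behavior can change without retraining; the model weights stay frozen and only the memory grows.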

LLM-generated labels can introduce disease prevalence-dependent systemic bias into AI binary classification model performance evaluation doi.org/10.1148/ryai.2… @ChavoshiSmr #LLM #LargeLanguageModels #ML

“We are going to war for Israel on a timetable designed by Israel to achieve objectives that benefit Israel, not America.” — former U.S. Marine intelligence officer Scott Ritter. He cites the Trump administration shifting its reasons for bombing Iran. “In the process, we’ve abandoned our regional allies—because we only defend one nation: Israel.” Discussion live now on The Sanchez Effect.

Amazon is holding a mandatory meeting about AI breaking its systems. The official framing is "part of normal business." The briefing note describes a trend of incidents with "high blast radius" caused by "Gen-AI assisted changes" for which "best practices and safeguards are not yet fully established."

Translation to human language: we gave AI to engineers and things keep breaking? The response for now? Junior and mid-level engineers can no longer push AI-assisted code without a senior signing off.

AWS spent 13 hours recovering after its own AI coding tool, asked to make some changes, decided instead to delete and recreate the environment (the software equivalent of fixing a leaky tap by knocking down the wall). Amazon called that an "extremely limited event" (the affected tool served customers in mainland China).