@FastCompany It's just the beginning, and our time is coming 😎

Amar.$CELL

16.4K posts

@Amar__C

#CELL $CELL #CellFrameNet @cellframenet #Crypto $PYRO #Pyropass

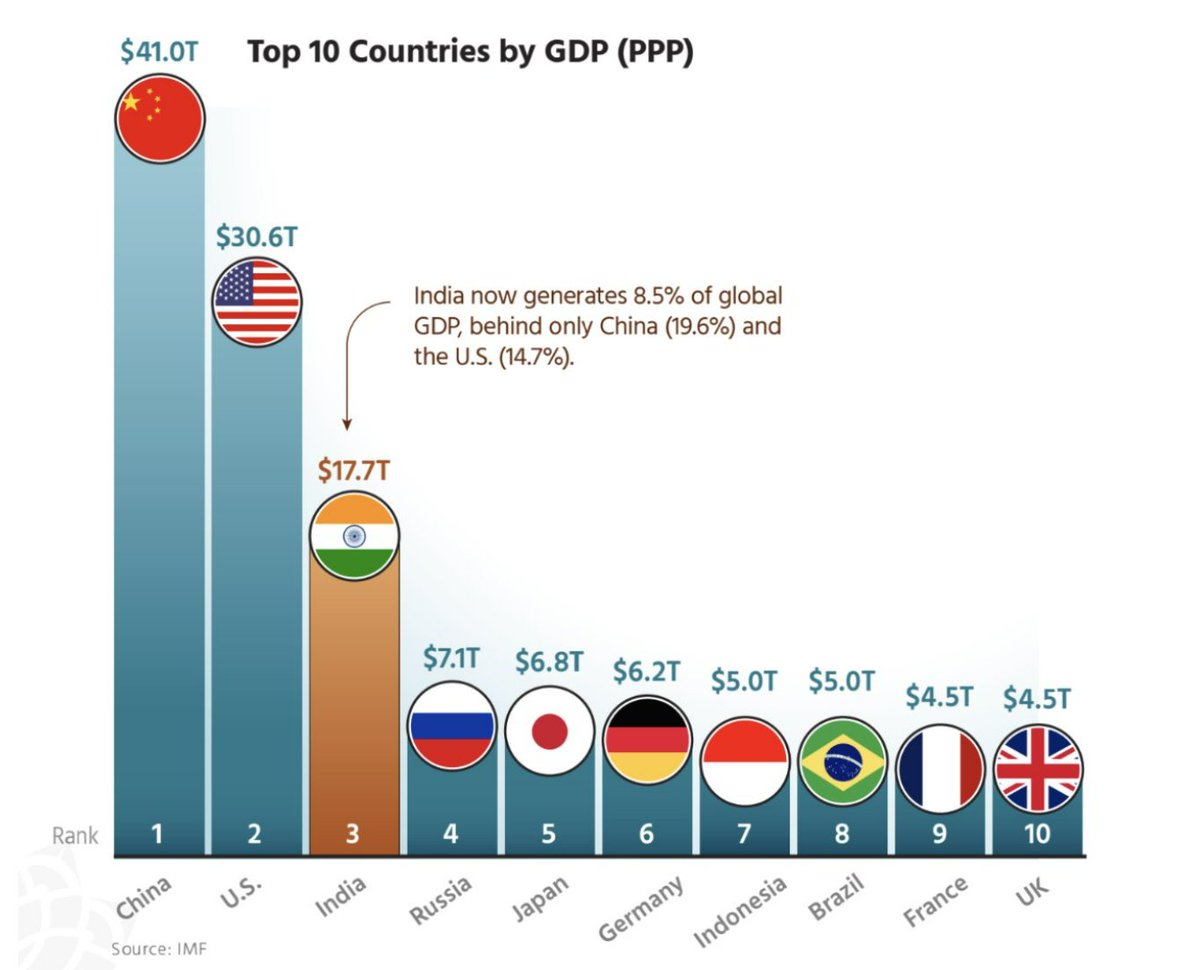


SOLANA IS TESTING QUANTUM-RESISTANT CRYPTOGRAPHY. SOLANA IS WORKING TO MAKE THE NETWORK SAFE FROM THE RISING THREAT THAT QUANTUM POSES. HOWEVER, SIGNATURES ARE UP TO 40× LARGER AND NETWORK EFFICIENCY TOOK A 90% HIT.
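The "up to 40×" signature figure can be sanity-checked with simple arithmetic. The sketch below is only an illustration: the post does not say which post-quantum scheme is being tested, so the two candidates listed (ML-DSA-44 and Falcon-512, with their published signature sizes) are assumptions, compared against the 64-byte Ed25519 signatures Solana uses today.

```python
# Signature sizes in bytes. Ed25519 is the scheme Solana uses today; the
# post-quantum candidates are assumptions, since the post names no scheme.
ED25519_SIG_BYTES = 64
PQ_SIG_BYTES = {
    "ML-DSA-44 (Dilithium2)": 2420,
    "Falcon-512": 666,
}


def size_ratio(pq_bytes: int, classical_bytes: int = ED25519_SIG_BYTES) -> float:
    """Return how many times larger a post-quantum signature is."""
    return pq_bytes / classical_bytes


if __name__ == "__main__":
    for name, size in PQ_SIG_BYTES.items():
        print(f"{name}: {size} bytes, {size_ratio(size):.1f}x an Ed25519 signature")
    print(f"40x Ed25519 would be {40 * ED25519_SIG_BYTES} bytes")
```

Under these assumptions a Dilithium-class signature lands close to the quoted 40×, while a Falcon-class signature is roughly a quarter of that, so the size penalty depends heavily on the scheme chosen.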

Only @cellframenet, and it’s simple: Cellframe is not just another “quantum-resistant” project; it is the only one that solves the quantum problem at the root, with next-generation architecture, while the others are either partial retrofits or limited solutions.

1. Real Quantum Resistance (not marketing)

Cellframe is PQC-native from the L0 protocol: it uses NIST-approved algorithms (Falcon + CRYSTALS-Dilithium) across the entire stack: signatures, transactions, P2P, consensus. It has variable signatures plus on-the-fly upgrades without a hard fork. When NIST releases a new, better post-quantum standard, the network absorbs it automatically. Independent evaluations (Quantum Canary etc.) give Cellframe an A+ in quantum readiness. Algorand gets a D (it uses Falcon only in state proofs every 256 blocks; the rest of the chain is still vulnerable). Starknet has STARKs (good against quantum in ZK), but doesn’t cover the entire protocol. Other is “quantum-resistant”, but stops there. Summary: the others protect part of the chain. Cellframe protects everything and evolves as quantum advances.

2. L0 Service-Oriented Architecture + Dual-Layer Sharding

It is a real Layer 0: it serves as a backbone for building other blockchains, t-dApps (trusted decentralized apps) and enterprise services. Dual-layer sharding + conditional transactions = extremely high throughput + native and cheap interoperability (instant atomic swaps). Written in pure C with a C SDK: it runs on mainframes or even low-level hardware (smart fridge, IoT). No other project in the poll has this. Zero mining = sustainable and cheap to operate. Algorand is great in TPS and Pure PoS, but lacks this L0 modularity and dual sharding. Starknet is an excellent L2 in ZK, but depends on Ethereum. Other is a simple ledger without smart contracts and without real dApps.

3. Functionality the others don’t deliver

Low-level t-dApps (not just Solidity). CF-20: a native quantum-resistant token standard. Cross-chain interoperability that is secure by design (not by third-party bridge). “Service-oriented” vision: companies can run entire enterprise applications with guaranteed quantum security.

4. Asymmetric Upside (the “why now” factor)

Tiny market cap (approximately 2M USD) with a circulating supply of approximately 37M tokens. Active community and development (mainnet backbone live, masternodes, bridge, explorer). In the poll you linked, CELL won with 49 percent against Algorand’s 44 percent; the replies were unanimous: “$CELL edges this one”. The market is already voting with money.

Choosing Algorand is betting that “almost quantum-resistant + strong brand” is enough. Choosing Starknet is betting on ZK scalability. Choosing Other is betting on “quantum purist minimalism”. CELL is the only bet that combines military-grade quantum security + scalable L0 architecture + enterprise-grade functionality + a still absurdly low valuation. It is the project that survives “Q-Day” (the day quantum computers break ECDSA/RSA) without losing performance or usability. The others will need painful upgrades or become relics.

If you want the real alpha of the quantum cycle starting now (Google, governments and NIST are already sounding the alarm), CELL is not “something”; it is what the others want to be when they grow up. DYOR, but the numbers and the tech speak clearly. $CELL > rest of the poll.

Quantum Computing has yet to meet its Transistor Moment. @shahinkhan

There are half a dozen or more competing foundational technologies. The max number of logical qubits today is of order ~10. The number needed to use Shor’s algorithm to break secp256k1 is of order ~1000. It’s not Moore’s law; it’s a simultaneous scaling problem in about 7 dimensions.

---

“For a useful fault-tolerant quantum computer, you do not just scale one thing like qubit count. You have to scale several coupled dimensions at once. I would group them into 7 core dimensions:

1. Physical qubit count

You need many more physical qubits because logical qubits are encoded across many physical qubits, and practical fault tolerance requires that the logical error rate fall as code size grows. That is exactly the milestone Google highlighted in its below-threshold QEC result, and IBM’s 2025 roadmap likewise frames large-scale FTQC as a problem of moving from physical to logical qubits at much larger scale.

2. Physical gate fidelity / measurement fidelity

This is arguably the most important axis. If your physical error rates are not below threshold, adding more qubits does not help; it can make things worse. Google’s below-threshold result is important precisely because larger codes only become beneficial once the underlying physical operations are good enough.

3. Connectivity / routing distance

It is not enough to have many qubits; the architecture must let them interact efficiently enough to implement the code. Surface-code-style error correction especially depends on local lattice connectivity, while modular architectures care about the quality and rate of links between modules. IBM explicitly ties architecture and coupling layout to the path toward fault tolerance.

4. Coherence time

Qubits must stay coherent long enough to complete gates, syndrome extraction, feed-forward, and many QEC cycles. A long coherence time by itself does not guarantee FTQC, but without sufficient coherence margin the whole stack collapses. Recent benchmarking and logical-qubit preservation work still tracks usable performance in microseconds or seconds depending on platform, which shows how central this axis remains.

5. Clock rate / cycle time

You asked about clock rate specifically, and yes, it is a real scaling dimension. What matters is not only “how coherent” the qubit is, but also how many reliable operations fit inside the coherence window. A platform with slightly worse raw coherence but much faster gates can outperform a slower one. IBM’s recent logical-layer benchmarking translates fidelity improvements into thousands of executable operations, which is essentially a cycle-budget argument.

6. Classical control, decoding, and feed-forward bandwidth

Fault tolerance is a hybrid system. You must repeatedly measure syndromes, decode them classically, and apply corrections fast enough that the quantum side does not outrun the controller. IBM’s FTQC roadmap makes this explicit: the machine is not just a quantum chip, but a full stack including real-time decoding and orchestration.

7. Manufacturability / yield / calibration stability

Even if a lab can demonstrate one good logical qubit, a useful machine needs large arrays that can be fabricated, wired, calibrated, and kept stable over long runs. That is why current roadmaps focus on system architecture and data-center-scale integration, not just headline qubit counts.

The cleanest way to think about it is this:

Qubit count × fidelity × connectivity × coherence × speed/clock × decoding and control × manufacturing processes

and these are not independent.”
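The coupling between dimensions 1 and 2 can be illustrated with the textbook surface-code heuristic, in which the logical error rate scales roughly as A·(p/p_th)^((d+1)/2) for code distance d. This is a minimal sketch, not anything taken from the thread: the threshold `p_th = 1e-2`, the prefactor `A = 0.1`, the target logical error rate, and the 2d² − 1 qubits-per-logical-qubit count (rotated surface code) are all illustrative assumptions.

```python
def logical_error_rate(p_phys, p_th=1e-2, distance=3, prefactor=0.1):
    """Heuristic surface-code scaling: p_L ~ A * (p / p_th) ** ((d + 1) / 2)."""
    return prefactor * (p_phys / p_th) ** ((distance + 1) // 2)


def physical_per_logical(distance):
    """Rotated surface code: d^2 data qubits plus d^2 - 1 measurement qubits."""
    return 2 * distance * distance - 1


def min_distance(p_phys, target, p_th=1e-2, prefactor=0.1, max_distance=201):
    """Smallest odd distance meeting the target logical error rate, else None."""
    if p_phys >= p_th:
        return None  # at or above threshold, a larger code does not help
    for d in range(3, max_distance + 1, 2):
        if logical_error_rate(p_phys, p_th, d, prefactor) <= target:
            return d
    return None


if __name__ == "__main__":
    logical_qubits = 1000  # the order of magnitude quoted in the thread
    target = 1e-12         # assumed per-cycle logical error budget
    for p in (2e-2, 5e-3, 1e-3):
        d = min_distance(p, target)
        if d is None:
            print(f"p={p:g}: no usable code distance (above threshold)")
        else:
            total = logical_qubits * physical_per_logical(d)
            print(f"p={p:g}: distance {d}, about {total:,} physical qubits")
```

Under these assumptions, improving physical fidelity shrinks the required code distance, and with it the physical qubit count, which is why qubit count and fidelity cannot be scaled independently.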

After research from Google suggested a potential threat to some cryptocurrencies, tokens like QRL and Cellframe (CELL) saw their values rise. f-st.co/Trc4sqc

BREAKING🚨: Physicists Just Created World's Largest Quantum Array with 6,100 Qubits.

Which quantum-resistant project do you like the most? ✅ @Algorand ✅ @Starknet ✅ @QRLedger ✅ @cellframenet You can mention other projects as well 👇