Every ERP modernization conversation right now ends in the same question: where do AI agents actually fit? Bolted onto the ERP roadmap? A separate platform? An overlay? And how do you get value in quarters, not years?

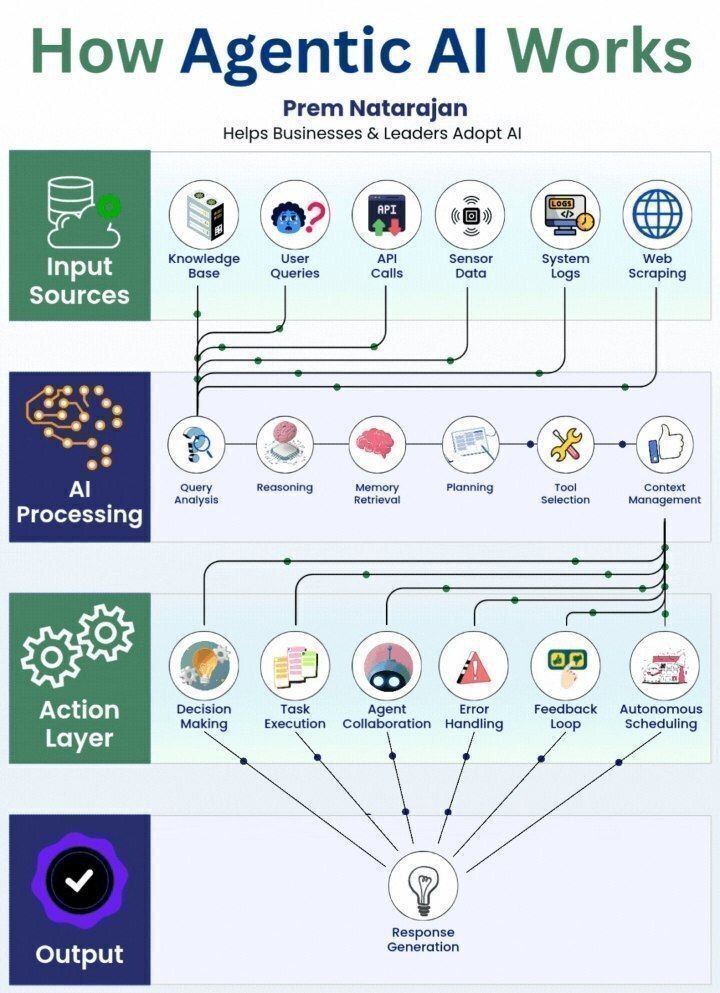

This webinar cuts through the noise for executives evaluating AI on top of Epicor and other supply chain ERPs. We'll unpack the "decision execution gap" (why even strong ERPs leave hours or days between insight and action) and walk through how agentic AI closes it without a rip-and-replace. You'll leave with a clearer framework for what to build, what to buy, and what to expect from your team and your stack over the next 12 months.

Reserve Your Spot: elevatiq.com/events-and-web…

#Databricks #OTIF #DemandForecasting #InventoryOptimization #CIO #COO #CSCO #CDO #SupplyChainLeaders